Deep in a mine in Greater Sudbury, Ontario, Canada, you will find the deepest flush toilets in the world. Four of them, actually, ensuring the comfort of the staff and users of SNOLAB, an underground clean lab with very low levels of background radiation that specialises in neutrino and dark-matter physics.

Toilets might not be the first thing that comes to mind when discussing a particle-physics laboratory, but they are one of numerous logistical considerations when hosting 60 people per day at a depth of 2 km for 10 hours at a time. SNOLAB is the world’s deepest cleanroom facility, a class-2000 cleanroom (see panel below) the size of a shopping mall situated in the operational Vale Creighton nickel mine. It is an expansion of the facility that hosted the Sudbury Neutrino Observatory (SNO), a large, heavy-water detector designed to detect neutrinos from the Sun. In 2001, SNO contributed to the discovery of neutrino oscillations, leading to the joint award of the 2015 Nobel Prize in Physics to SNO spokesperson Arthur B McDonald and Super-Kamiokande spokesperson Takaaki Kajita.

Initially, there were no plans to maintain the infrastructure beyond the timeline of SNO, which was just one experiment and not a designated research facility. However, following the success of the SNO experiment, there was increased interest in low-background detectors for neutrino and dark-matter studies.

Building on SNO’s success

The SNO collaboration was first formed in 1984, with the goal of solving the solar neutrino problem. This problem surfaced during the 1960s, when the Homestake experiment in the Homestake Mine at Lead, South Dakota, began looking for neutrinos created in the early stages of solar fusion. This experiment and its successors, using different target materials and technologies, consistently observed only 30–50% of the neutrinos predicted by the standard solar model. A seemingly small nuisance posed a large problem, which required a large-scale solution.

SNO used a 12 m-diameter spherical vessel containing 1000 tonnes of heavy water to count solar neutrino interactions. Canada had vast reserves of heavy water for use in its nuclear reactors, making it an ideal location for such a detector. The experiment also required an extreme level of cleanliness, so that the signals physicists were searching for would not be confused with background events coming from dust, for instance. The SNO collaboration also had to develop new techniques to measure the inherent radioactivity of their detector materials and the heavy water itself.

Using heavy water gave SNO the ability to observe three different neutrino reactions: one reaction could only happen with electron neutrinos; one was sensitive to all neutrino flavours (electron, muon and tau); and the third provided the directionality pointing back to the Sun. These three complementary interactions let the team test the hypothesis that solar neutrinos were changing flavour as they travelled to Earth. In contrast to previous experiments, this approach allowed SNO to make a measurement of the parameters describing neutrino oscillations that didn’t depend on solar models. SNO’s data confirmed what previous experiments had seen and also verified the predictions of theories, implying that neutrinos do indeed oscillate during their Sun–Earth journey. The experiment ran for seven years and produced 178 papers by more than 275 authors.

In 2002, the Canadian community secured funding to create an extended underground laboratory with SNO as the starting point. Construction of SNOLAB’s underground facility was completed in 2009 and two years later the last experimental hall entered “cleanroom” operation. Some 30 letters of interest were received from different collaborations proposing potential experiments, helping to define the requirements of the new lab.

SNOLAB’s construction was made possible by capital funds totalling CAD$73 million, with more than half coming from the Canada Foundation for Innovation through the International Joint Venture programme. Instead of a single giant cavern, local company Redpath Mining excavated several small and two large halls to hold experiments. The smaller halls helped the engineers manage the enormous stress placed on the rock in larger underground cavities. Bolts 10 m long stabilise the rock in the ceilings of the remaining large caverns, and throughout the lab the rock is covered with a 10 cm-thick layer of spray-on concrete for further stability, with an additional hand-trowelled layer to help keep the walls dust-free. This latter task was carried out by Béton Projeté MAH, the same company that finished the bobsled track for the 2010 Vancouver Winter Olympics.

In addition to the experimental halls, SNOLAB is equipped with a chemistry laboratory, a machine shop, storage areas, and a lunchroom. Since the SNO experiment was still running when new tunnels and caverns were excavated, the connection between the new space and the original clean lab area was completed late in the project. The dark-matter experiments DEAP-1 and PICASSO were also already running in the SNO areas before construction of SNOLAB was completed.

Dark matter, neutrinos, and more

Today, SNOLAB employs a staff of over 100 people, working on engineering design, construction, installation, technical support and operations. In addition to providing expert and local support to the experiments, SNOLAB research scientists undertake research in their own right as members of the collaborations.

With so much additional space, SNOLAB’s physics programme has expanded greatly during the past seven years. SNO has evolved into SNO+, in which a liquid scintillator replaces the heavy water to increase the detector’s sensitivity. The scintillator will be doped with tellurium, making SNO+ sensitive to the hypothetical process of neutrinoless double-beta decay. Two of tellurium’s natural isotopes (¹²⁸Te and ¹³⁰Te) are known to undergo conventional double-beta decay, making them good candidates to search for the long-sought neutrinoless version. Observing this decay would show that lepton number is not conserved and prove that the neutrino is its own antiparticle (a Majorana particle). SNO+ is one of several experiments currently hunting this process down.

Another active SNOLAB experiment is the Helium and Lead Observatory (HALO), which uses 76 tons of lead blocks instrumented with 128 helium-3 neutron detectors to capture the intense neutrino flux generated when the core of a star collapses at the early stages of a supernova. Together with similar detectors around the world, HALO is part of a supernova early-warning system, which allows astronomers to orient their instruments to observe the phenomenon before it is visible in the sky.

With no fewer than six active projects, dark-matter searches comprise a large fraction of SNOLAB’s physics programme. Many different technologies are employed to search for the dark-matter candidate of choice: the weakly interacting massive particle (WIMP). The PICASSO and COUPP collaborations were both using bubble chambers to search for WIMPs, and merged into the very successful PICO project. Through successive improvements, PICO has endeavoured to enhance the sensitivity to WIMP spin-dependent interactions by an order of magnitude every couple of years. Its sensitivity is best for WIMP masses around 20 GeV/c². Currently the PICO collaboration is developing a much larger version with up to 500 litres of active-mass material.

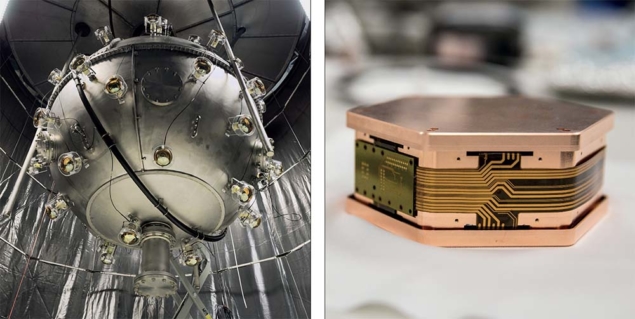

DEAP-3600, successor to DEAP-1, is one of the biggest dark-matter detectors ever built, and it has been taking data for almost two years now. It seeks to detect spin-independent interactions between WIMPs and 3300 kg of liquid argon contained in a 1.7 m-diameter acrylic vessel. The best sensitivity will be achieved for a WIMP mass of 100 GeV/c². Using a different technology, the DAMIC (Dark Matter In CCDs) experiment employs CCD sensors, which have low intrinsic noise levels, and is sensitive to WIMP masses as low as 1 GeV/c².

Although the science at SNOLAB primarily focuses on neutrinos and dark matter, the low-background underground environment is also useful for biology experiments. REPAIR explores how low radiation levels affect cell development and repair from DNA damage. One hypothesis is that removing background radiation may be detrimental to living systems. REPAIR can help determine whether this hypothesis is correct and characterise any negative impacts. Another experiment, FLAME, studies the effect of prolonged time spent underground on living organisms using fruit flies as a model. The findings from this research could be used by mining companies to support a healthier workforce.

Future research

There are many exciting new experiments under construction at SNOLAB, including several dark-matter experiments. While the PICO experiment is increasing its detector mass, other experiments are using several different technologies to cover a wide range of possible WIMP masses. The SuperCDMS experiment and CUTE test facility use solid-state silicon and germanium detectors kept at temperatures near absolute zero to search for dark matter, while the NEWS-G experiment will use gases such as hydrogen, helium and neon in a 1.4 m-diameter copper sphere.

SNOLAB still has space available for additional experiments requiring a deep underground cleanroom environment. The Cryopit, the largest remaining cavern, will be used for a next-generation double-beta-decay experiment. Additional spaces outside the large experimental halls can host several small-scale experiments. While the results of today’s experiments will influence future detectors and detector technologies, the astroparticle physics community will continue to demand clean underground facilities to host the world’s most sensitive detectors. From an underground cavern carved out to host a novel neutrino detector to the deepest cleanroom facility in the world, SNOLAB will continue to seek out and host world-class physics experiments to unravel some of the universe’s deepest mysteries.