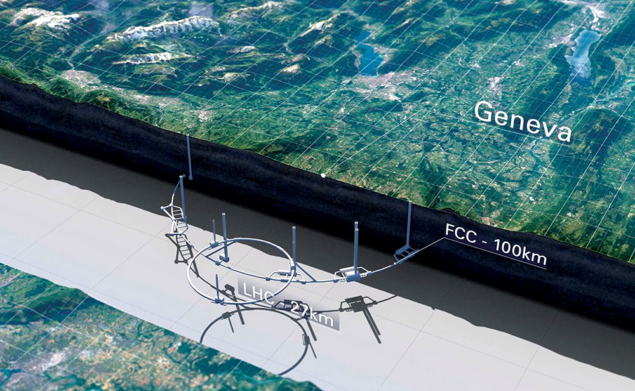

The discovery of the Higgs boson at the LHC in the summer of 2012 set particle physics on a new course of exploration. While the LHC experiments have determined many of the properties of the Higgs boson with a precision beyond expectations, and will continue to do so until the mid-2030s, physicists have long planned a successor to the LHC that can further explore the Higgs mechanism and other potential sources of new physics. Several proposals are on the table, the most ambitious and scientifically far-reaching of which involve circular colliders with a circumference of around 100 km.

On 18 December, the Future Circular Collider (FCC) study released its conceptual design report (CDR) for a 100 km collider based at CERN. A month earlier, the Institute of High Energy Physics (IHEP) in China officially presented a CDR for a similar project called the Circular Electron Positron Collider (CEPC). Both studies were launched shortly after the discovery of the Higgs boson (the FCC was a direct response to a request from the 2013 update of the European Strategy for Particle Physics to prepare a post-LHC machine, following preliminary proposals for a circular Higgs factory at CERN in 2011), and both envisage physics programmes extending deep into the 21st century. Documents concerning the FCC and CEPC proposals were also submitted as input to the latest update of the European Strategy for Particle Physics at the end of the year (see Input received for European strategy update).

“If a high-luminosity electron–positron Higgs factory were to drop out of the sky tomorrow, the line of users would be very long, while a very-high-energy hadron collider is a vessel of discovery and will help us study the role of the Higgs boson in taming the high-energy behaviour of longitudinal gauge-boson (WW) scattering,” says theorist Chris Quigg of Fermilab in the US. “It is a very significant validation of the scientific promise opened by a 100 km ring for scientists of different regions to express the same judgment.”

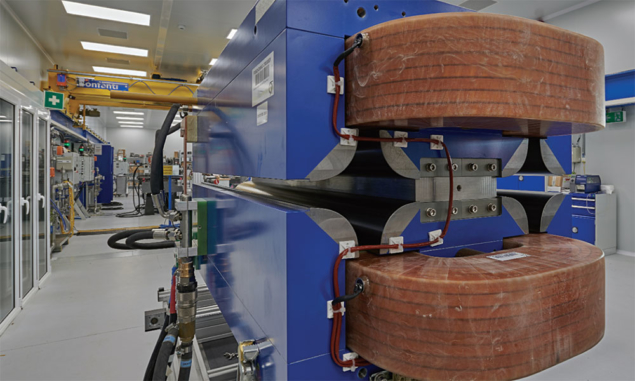

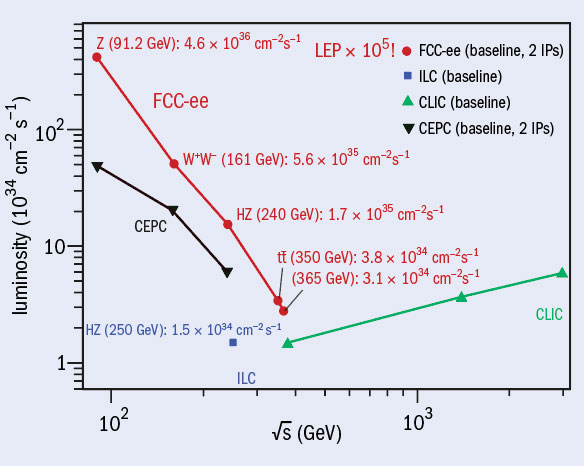

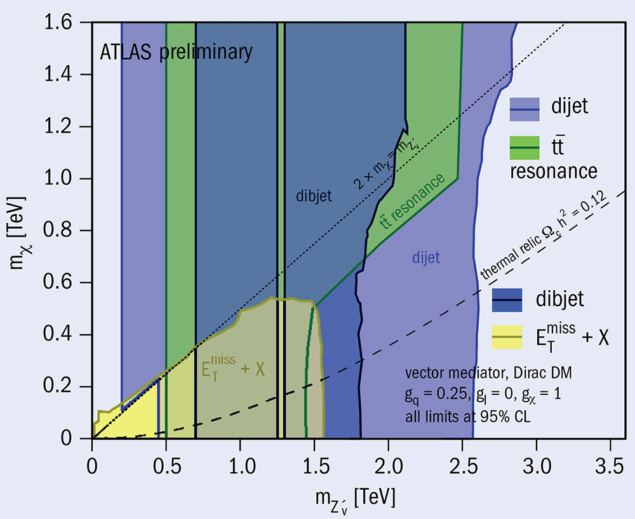

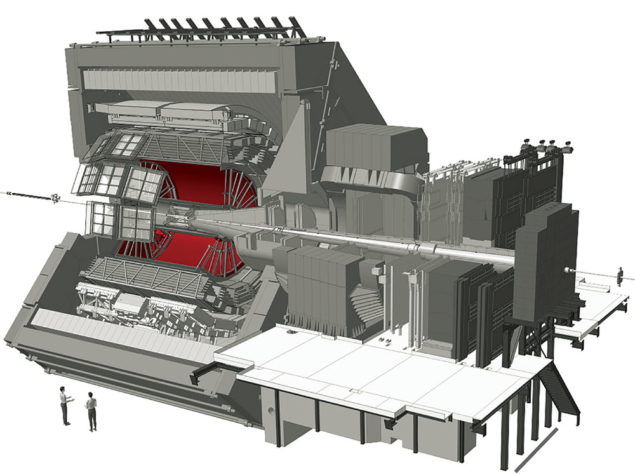

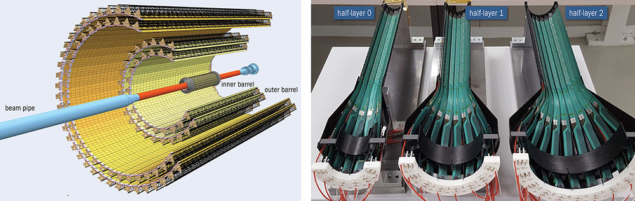

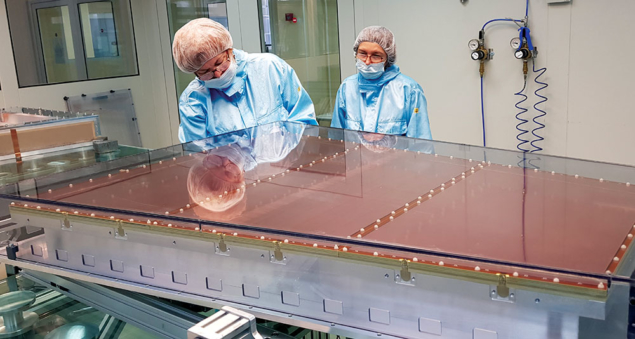

The four-volume FCC report demonstrates the project’s technical feasibility and identifies the physics opportunities offered by the different collider options: a high-luminosity electron–positron collider (FCC-ee) with a centre-of-mass energy ranging from the Z pole (90 GeV) to the tt̅ threshold (365 GeV); a 100 TeV proton–proton collider (FCC-hh); a future lepton–proton collider (FCC-eh); and, finally, a higher-energy hadron collider in the existing tunnel (HE-LHC). The FCC is a global collaboration of more than 140 universities, research institutes and industrial partners. During the past five years, with the support of the European Commission’s Horizon 2020 programme, the FCC collaboration has made significant advances in high-field superconducting magnets, high-efficiency radio-frequency cavities, vacuum systems, large-scale cryogenic refrigeration and other enabling technologies (see A giant leap for physics).

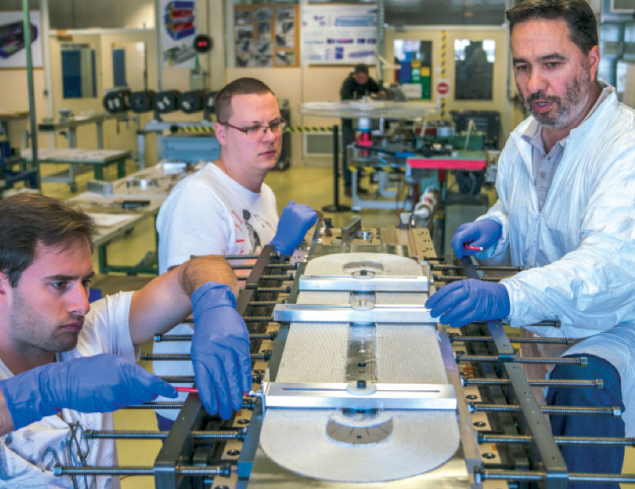

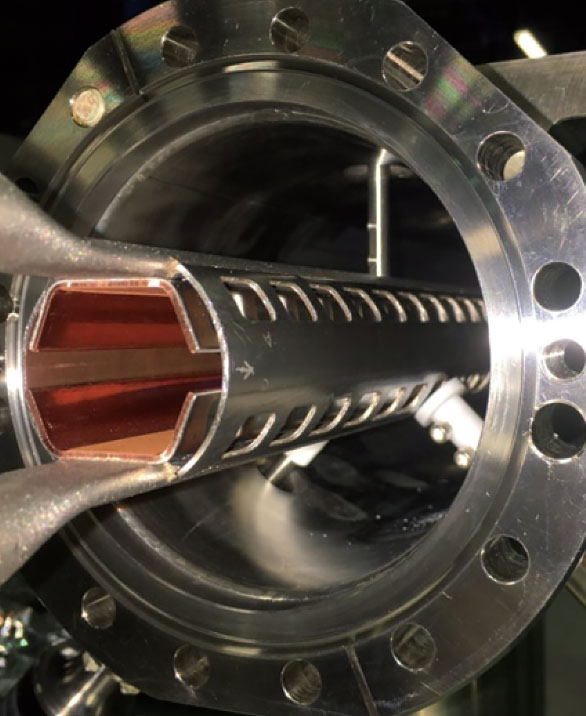

According to the present proposal, an eight-year period for project preparation and administration is required before construction of FCC’s underground areas can begin, potentially allowing the FCC-ee physics programme to start by 2039. The FCC-hh, installed in the same tunnel, could then start operations in the late 2050s. “Though the two machines can be built independently, a combined scenario profits from the extensive reuse of civil engineering and technical systems, and also from the additional time available for high-field magnet breakthroughs,” says deputy leader of the FCC study Frank Zimmermann of CERN. “Timely preparation, early investment and diverse collaborations between researchers and industry are already yielding promising results and confirming the anticipated downward trend in the costs associated with operation.”

Asian ambition

CEPC is a putative 240 GeV circular electron–positron collider whose tunnel is foreseen to one day host a super proton–proton collider (SppC) reaching energies beyond those of the LHC (CERN Courier June 2018 p21). The two-volume CEPC design report summarises the work accomplished in the past few years by thousands of scientists and engineers in China and abroad. IHEP states that construction of CEPC could begin as early as 2022, allowing time to build prototypes of key technical components and establish support for manufacturing, and be completed by 2030. According to the tentative operational plan, CEPC will run for seven years as a Higgs factory, then two years as a Z factory and one year at the WW threshold, after which the SppC could be installed. Although CEPC–SppC is a Chinese-proposed project to be built in China, it has an international advisory committee, and more than 20 agreements have been signed with institutes and universities around the world.

“The Beijing Electron Positron Collider will stop running in the 2020s, and China’s government is encouraging Chinese scientists to initiate and work towards large international science projects, so it is possible that CEPC may get a green light soon,” explains deputy leader of the CEPC project Jie Gao of IHEP. “As for the site, many Chinese local governments showed strong interest to host CEPC with the support of the central government.”

Cost is a key factor for both the Chinese and European projects, with the tunnel accounting for a large fraction of the expense. CEPC's price tag currently stands at $5 billion, with FCC-ee at around twice this value, while a hadron collider on either continent would at present cost significantly more because of the required superconducting wire. Geoffrey Taylor of the University of Melbourne, who is chair of the International Committee for Future Accelerators, says that CERN has the major benefit of expertise in magnets and in high-energy collider development and operation, in addition to already having the multi-billion-dollar accelerator infrastructure required for the project. “The value of this infrastructure at CERN outweighs the cost of the tunnel; on the other hand, the Chinese proposal has a lower cost of tunneling but lacks the immense infrastructure and expertise necessary for the hadron collider.”

Taylor says that whilst it is essential that CERN maintains its pre-eminent position, having competition from Asia with the potential for major investment would be beneficial for the field as a whole because Western investment in future machines may well remain at current levels. There are also broader cultural factors to be considered, says Quigg: “CERN has earned an exemplary reputation for inclusiveness and openness, which go hand in hand with scientific excellence. Any region, nation, and institution that aims to host a world-leading instrument must strive for a similar environment.”

For theorist Gerard ’t Hooft, who shared the 1999 Nobel Prize in Physics for elucidating the quantum structure of electroweak interactions, the physics target of a 100 km collider is far more important than its location. Given our present theoretical understanding, it is not obvious whether a 100 km accelerator will be able to force a breakthrough, he says. “Most theoreticians were hoping that the LHC might open up a new domain of our science, and this does not seem to be happening. I am just not sure whether things will be any different for a 100 km machine. It would be a shame to give up, but the question of whether spectacular new physical phenomena will be opened up and whether this outweighs the costs, I cannot answer. On the other hand, for us theoretical physicists the new machines will be important even if we can’t impress the public with their results.”

Profound discoveries

Experimentalist Joe Incandela of the University of California, Santa Barbara, who was spokesperson of the CMS experiment at the time of the Higgs-boson discovery, believes that a post-LHC collider is needed for closure, even if it does not yield new discoveries. “While such machines are not guaranteed to yield definitive evidence for new physics, they would nevertheless allow us to largely complete our exploration of the weak scale,” he says. “This is important because it is the scale where our observable universe resides, where we live, and it should be fully charted before the energy frontier is shut down. Completing our study of the weak scale would cap a short but extraordinary 150-year-long period of profound experimental and theoretical discoveries that would stand for millennia among mankind’s greatest achievements.”