The eighth Large Hadron Collider Physics (LHCP) conference, originally scheduled to take place in Paris, was instead held fully online from 25 to 30 May. To enable broad participation, the organisers waived the registration fee and, with technical support from CERN, hosted about 1,300 registered participants from 56 countries, who engaged actively via Zoom webinars. Even a poster session was possible, with 50 junior attendees from all over the world presenting their work via meeting rooms and video recordings. The organisers deserve credit for running a pioneering virtual conference that succeeded in bringing the LHC community together, in larger and more diverse numbers than at previous editions.

LHCP2020 presentations covered a wide assortment of topics and included several new results with significantly greater sensitivity than was previously possible. These comprised both precision measurements, which have excellent potential to uncover discrepancies that can be explained only by physics beyond the Standard Model (SM), and direct searches for new particles using innovative techniques and advanced analysis methods.

The first observation of the combined production of three massive vector bosons (VVV with V = W or Z) was reported by the CMS experiment. In the nearly 40 years since the discovery of the W and Z bosons, their properties have been measured very precisely, including via “diboson” measurements of the simultaneous production of two vector bosons. However, “triboson” production, the simultaneous production of three massive vector bosons, had so far eluded observation, as the cross sections are small and the background contributions are rather large. Such measurements are crucial, both to test the underlying theory and to probe non-standard interactions. For example, if new physics beyond the SM is present at high mass scales not far above 1 TeV, then cross-section measurements for triboson final states might deviate from SM predictions. The CMS experiment took advantage of the large Run 2 dataset and machine-learning techniques to search for these rare processes. Leveraging the relatively background-free leptonic final states, CMS collaborators were able to combine searches for different decay modes and different types of triboson production (WWW, WWZ, WZZ and ZZZ) to achieve the first observation of combined heavy triboson production, with an observed significance of 5.7 standard deviations, and at the same time to obtain evidence for WWW and WWZ production with observed significances of 3.3 and 3.4 standard deviations, respectively. While the results obtained so far are in agreement with SM predictions, more data are needed for individual measurements of the WZZ and ZZZ processes.
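For readers less used to significances quoted in standard deviations, the short sketch below converts the figures above into the probability that a background-only fluctuation would look at least as signal-like. It assumes the one-sided Gaussian tail convention that is customary in particle physics; the function name `p_value_from_sigma` is purely illustrative and is not taken from any CMS software.

```python
import math


def p_value_from_sigma(z: float) -> float:
    """One-sided Gaussian tail probability for a significance of z standard deviations.

    Assumption: the one-sided convention, p = 0.5 * erfc(z / sqrt(2)).
    """
    return 0.5 * math.erfc(z / math.sqrt(2.0))


# Observed significances quoted in the text for the CMS triboson results.
quoted = {"VVV combined": 5.7, "WWW": 3.3, "WWZ": 3.4}

for label, z in quoted.items():
    print(f"{label}: {z} sigma -> p = {p_value_from_sigma(z):.2e}")
```

Under this convention, the conventional thresholds of 3 and 5 standard deviations for "evidence" and "observation" correspond to probabilities of roughly one in a thousand and one in a few million, respectively.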

Four-top-quark production

The first evidence for four-top-quark production was announced by ATLAS. The top-quark discovery in 1995 launched a rich programme of top-quark studies that includes precision measurements of its properties as well as the observation of single-top-quark production. In particular, since the large mass of the top quark is a result of its interaction with the Higgs field, studies of rare processes such as the simultaneous production of four top quarks can provide insights into properties of the Higgs boson. Within the SM, this process is extremely rare, occurring just once for every 70 thousand pairs of top quarks created at the LHC; on the other hand, numerous extensions of the SM predict exotic particles that couple to top quarks and lead to significantly higher production rates. The ATLAS experiment performed this challenging measurement on the full Run 2 dataset, applying sophisticated techniques and machine-learning methods to the multilepton final state to obtain strong evidence for this process. The observed signal significance was found to be 4.3 standard deviations, in excess of the expected sensitivity of 2.4, assuming SM four-top-quark-production properties. While the measured value of the cross section was found to be consistent with the SM prediction within 1.7 standard deviations, the data collected during Run 3 will shed further light on this rare process.

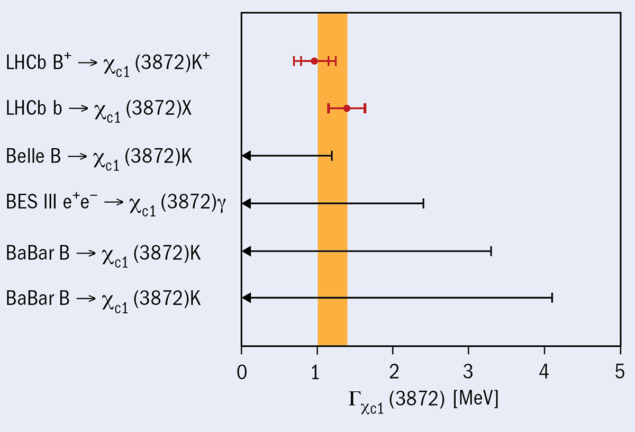

The LHCb collaboration presented measurements, with unprecedented precision, of two properties of the mysterious X(3872) particle. Originally discovered by the Belle experiment in 2003 as a narrow state in the J/ψπ+π− mass spectrum of B+→J/ψπ+π−K+ decays, this particle has puzzled particle physicists ever since. The nature of the state is still unclear and several hypotheses have been proposed, such as its being an exotic tetraquark (a system of four quarks bound together), a two-quark hadron, or a molecular state consisting of two D mesons. LHCb collaborators reported the most precise mass measurement yet and measured the width of the resonance for the first time, with a significance of 5 standard deviations (see LHCb interrogates X(3872) line-shape). Though the results favour its interpretation as a quasi-bound D0D*0 molecule, more data and additional analyses are needed to rule out other hypotheses.

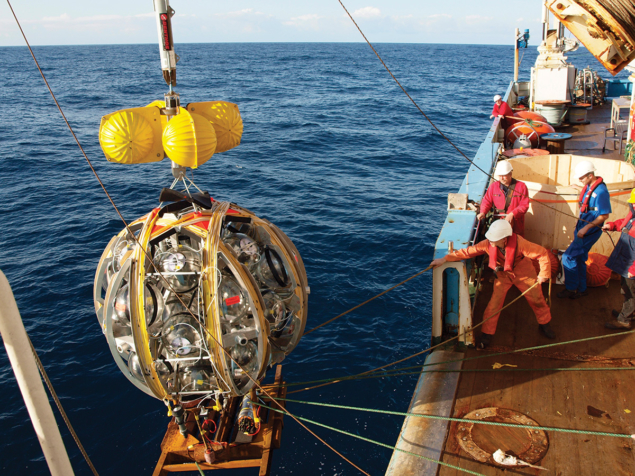

The ALICE collaboration presented a first measurement of the inelastic low-energy antideuteron cross section using p-Pb collisions at a centre-of-mass energy per nucleon–nucleon pair of 5.02 TeV. Low-energy antideuterons (composed of an antiproton and an antineutron) are predicted by some models to be a promising probe for indirect dark-matter searches. In particular, antideuterons could be produced during the annihilation or decay of neutralinos or sneutrinos, which are hypothetical dark-matter particles. Contributions from cosmic-ray interactions in the low-energy range below 1–2 GeV per nucleon are expected to be small. ALICE collaborators used a novel technique that utilised the detector material as an absorber for antideuterons to measure the production and annihilation rates of low-energy antideuterons. The results from this measurement can be used in propagation models of antideuterons within the interstellar medium for interpreting dark-matter searches, including intriguing results from the AMS experiment. Future analyses with higher-statistics data will improve the modelling and extend these studies to heavier antinuclei.

The above are just a few of the many excellent results that were presented at LHCP2020. The extraordinary performance of the LHC, coupled with the progress reported by the theory community and the excellent data collected by the experiments, has inspired LHC physicists to continue their rich harvest of physics results despite the current world crisis. Results presented at the conference showed that huge progress has been made on several fronts, and that Run 3 and the High-Luminosity LHC upgrade programme will enable further exploration of particle physics at the energy frontier.