Space, the ultimate scientific frontier, is being opened up by a new generation of satellite-borne precision experiments, many of which use technology perfected in generations of particle physics studies. A workshop organized by CERN and the European Space Agency looked at the prospects for the experiments and for the underlying science.

Physics was born with ancient feet firmly on the ground, but late in the 19th century the term “astrophysics” crept into use to define the newer quest to understand extra-terrestrial mechanisms as well as terrestrial ones.

At the turn of the millennium a new dictionary term, “cosmophysics”, might have been coined to describe the quest to understand the universe at large as well as its individual components.

In the past 20 years, as the mechanisms of the Big Bang have become increasingly understood, particle physics and cosmology have become inextricably linked. At the same time, new developments in space technology have enabled new experiments, such as AMS and GLAST, to be carried aloft, high above the stifling blanket of the Earth’s atmosphere. These provide new observations and measurements that have increased our understanding considerably.

As well as the physics involved, these studies call for a range of technological expertise to mount precision experiments under harsh conditions.

This development was underlined in a workshop entitled “Fundamental Physics in Space”, which was organized jointly by CERN and the European Space Agency (ESA), and held at CERN on 5-7 April. Although laboratory and space physics are developing along several parallel avenues, the meeting provided a valuable but rare opportunity for laboratory and space physicists to compare notes and discuss topics of common interest.

The workshop, which was initiated by the Board of the Joint Astrophysics Division of the European Physical Society and the European Astronomical Society, followed on from the May 1998 decision by both ESA and CERN to create working groups to study joint activities.

Opening the summary talks at the meeting, CERN director-general Luciano Maiani pointed to the growing overlap between particle and space physics. Recently, both subjects have underlined the important role played by the most invisible aspect of the universe – the vacuum.

ESA director-general Antonio Rodotà recalled the pioneering work of the 1970s that made the case for exploring physics in space, particularly for precision measurements and deeper studies of gravity, which now provide cornerstone missions for the new millennium.

Cosmology is flourishing

What Chandrasekhar once called “the graveyard of astronomy” is now a flourishing field, commented Lodewijk Woltjer of the St Michel Observatory and former ESO director-general, as he commenced his summary of the cosmology sessions. Indeed, to hear the wealth of science presented and the number of new instruments in the pipeline, it looks like its future is bright.

Type Ia supernovae have long been used as “standard candles” to measure distances in space: because their intrinsic luminosity is assumed to be known, a measurement of their apparent brightness gives their distance. However, the method is prone to many errors, and different teams can get very different results. Gustav Tammann of Basle explained how, with new corrections for decline rate and colour, the Hubble constant becomes 59 ± 7 km s⁻¹ Mpc⁻¹, corresponding to a universe about 17 billion years old. “Photometry with the Hubble Space Telescope is working at the limits of what is possible, the main problem being the background,” he said.
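The arithmetic behind a standard candle, and behind turning a Hubble constant into an age, can be sketched in a few lines of Python. This is a minimal illustration, not Tammann's analysis. It assumes that the quoted age is simply the Hubble time 1/H0, which equals the true age only for a universe that is neither decelerating nor accelerating. The peak absolute magnitude of −19.3 used in the example is an illustrative round value for a Type Ia supernova.

```python
KM_PER_MPC = 3.0857e19      # kilometres in one megaparsec
SECONDS_PER_GYR = 3.156e16  # seconds in one billion years


def hubble_time_gyr(h0_km_s_mpc: float) -> float:
    """Return the Hubble time 1/H0 in billions of years, for H0 in km/s/Mpc."""
    h0_per_second = h0_km_s_mpc / KM_PER_MPC
    return 1.0 / h0_per_second / SECONDS_PER_GYR


def distance_pc(apparent_mag: float, absolute_mag: float) -> float:
    """Invert the distance modulus m - M = 5 log10(d / 10 pc) to get d in parsecs."""
    return 10.0 ** ((apparent_mag - absolute_mag + 5.0) / 5.0)


for h0 in (52.0, 59.0, 66.0):  # Tammann's 59 +/- 7
    print(f"H0 = {h0:.0f} km/s/Mpc -> 1/H0 = {hubble_time_gyr(h0):.1f} Gyr")

# Illustrative only: a supernova peaking at m = 15.7, assuming M = -19.3
print(f"distance = {distance_pc(15.7, -19.3) / 1e6:.0f} Mpc")
```

With these constants, H0 = 59 gives a Hubble time of about 16.6 billion years, and the ±7 range spans roughly 15 to 19 billion years. A distance modulus of 35 magnitudes corresponds to 100 Mpc.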

Another cosmological parameter is omega, the ratio of the density of matter in the universe to the critical density needed to halt its expansion. The inflationary model of the Big Bang predicts that omega should be exactly equal to one, that is, that the universe is “flat”.

Woltjer summarized current results suggesting that luminous and dark matter together contribute an omega of around one-third. A non-zero cosmological constant or some new form of energy would be needed to make up the difference.
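To give a sense of scale, the critical density that omega is measured against follows from ρ_c = 3H0²/(8πG). The sketch below evaluates it for Tammann's H0 = 59 km s⁻¹ Mpc⁻¹. Using that value, and omega = 1/3 for the matter content, are illustrative choices based on the figures quoted in this report.

```python
import math

G = 6.674e-11           # gravitational constant, m^3 kg^-1 s^-2
M_PER_MPC = 3.0857e22   # metres in one megaparsec
M_HYDROGEN = 1.674e-27  # mass of a hydrogen atom, kg


def critical_density(h0_km_s_mpc: float) -> float:
    """Critical density rho_c = 3 H0^2 / (8 pi G) in kg/m^3, for H0 in km/s/Mpc."""
    h0 = h0_km_s_mpc * 1.0e3 / M_PER_MPC  # convert km/s/Mpc to 1/s
    return 3.0 * h0 ** 2 / (8.0 * math.pi * G)


def omega(density: float, h0_km_s_mpc: float) -> float:
    """Density parameter: actual density divided by the critical density."""
    return density / critical_density(h0_km_s_mpc)


rho_c = critical_density(59.0)
matter_density = rho_c / 3.0  # illustrative: matter gives omega ~ 1/3
print(f"rho_c = {rho_c:.2e} kg/m^3 (~{rho_c / M_HYDROGEN:.1f} H atoms per m^3)")
print(f"omega_matter = {omega(matter_density, 59.0):.2f}")
```

The critical density comes out at about 6.5 × 10⁻²⁷ kg m⁻³, the mass of roughly four hydrogen atoms per cubic metre. An omega of one-third therefore corresponds to a little more than one hydrogen-atom mass per cubic metre.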

Sidney Bludman of DESY and Penn State showed how quintessence, a form of energy with negative pressure, could solve this problem. One consequence would be that the expansion of the universe speeds up with time, giving an accelerating universe. Studies of distant supernovae have also pointed to a non-zero cosmological constant.
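The link between negative pressure and acceleration can be seen through the deceleration parameter q0, which is negative when the expansion is speeding up. Consider a simple model containing pressureless matter plus one extra component with pressure p = wρc². Here w = −1 for a cosmological constant, and a quintessence field has some other negative w. Neglecting radiation, q0 = [Ω_m + Ω_x(1 + 3w)]/2. The sketch below illustrates these assumptions and is not Bludman's model.

```python
def deceleration_parameter(omega_m: float, omega_x: float, w: float) -> float:
    """q0 = [omega_m + omega_x * (1 + 3w)] / 2 for matter plus a component with p = w rho c^2.

    Radiation is neglected. A negative q0 means the expansion is accelerating.
    """
    return 0.5 * (omega_m + omega_x * (1.0 + 3.0 * w))


# Matter only, omega = 1: the expansion decelerates
q_matter = deceleration_parameter(1.0, 0.0, -1.0)
# Omega_matter ~ 1/3 plus a cosmological constant (w = -1) bringing the total to 1
q_lambda = deceleration_parameter(1.0 / 3.0, 2.0 / 3.0, -1.0)
print(f"matter only: q0 = {q_matter:+.2f}; matter + Lambda: q0 = {q_lambda:+.2f}")
```

Any component with w < −1/3 contributes a negative term, so once such a component dominates, the expansion accelerates.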

This picture was confirmed by Jean-Loup Puget of Orsay in his round-up of observations of the cosmic microwave background radiation. Results from the Boomerang experiment announced earlier this year give omega as one (±0.3) and suggest a non-zero cosmological constant. Puget looked forward to results from ESA’s Planck satellite, which is due to be launched in 2007.

“That the universe is expanding forever seems to have a certain philosophical appeal for some people,” said Woltjer. “However, I have never really understood this, because our fate won’t be very much different!”

Imaging dark matter

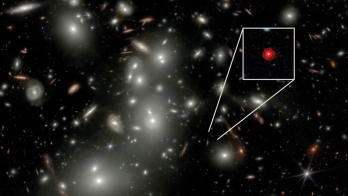

The most exciting cosmology news was that gravitational lensing has really come of age, with cosmic shear attracting considerable interest. Peter Schneider of Bonn showed how weak gravitational lensing can reveal the invisible. His team has discovered a “dark clump” of 10¹⁵ solar masses (assuming a redshift of 0.8) with no optical counterpart, which he believes is the first dark matter cluster ever detected by lensing.

Schneider was awaiting the results of infrared observations of the region. If the detection is confirmed, the technique will have enormous implications for cosmology. “The future is very encouraging,” he said. Indeed, he described another area where weak lensing is producing results: measuring the lensing effect across a large field can help to map the dark matter that makes up so-called galactic halos. Observations by the Sloan Digital Sky Survey have shown no sign of halo truncation at distances of up to 150 kpc. In fact, says Schneider, galaxies probably do not have distinct halos of dark matter at all; what is seen is simply a correlation between the galaxies’ positions and the overall large-scale dark matter distribution.

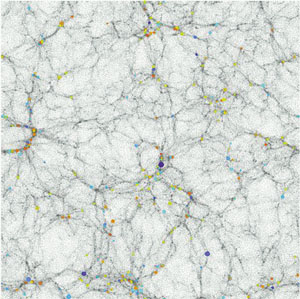

This view is supported by Carlos Frenk of Durham. With the Virgo Consortium, he has carried out simulations of the evolution of matter and dark matter in the universe. His modelling shows dark matter evolving in enormous filaments with galaxies forming at high-density nodes.

Woltjer reminded participants that a lot of assumptions are made before carrying out such simulations, in particular regarding the relationship between gas, dust and stellar objects. “We are still a long way from constructing the universe from first principles,” he commented.

Frenk was optimistic about the future. “Enormous progress has been made in instrumentation over recent years. If the 1980s belonged to the theorists, then the late 1990s most certainly belonged to the experimentalists,” he said.

Future telescopes

The next-generation space telescope (NGST), still on the drawing board, should contribute. At redshifts greater than 5, only 5% of stars had formed. However, “this is a very interesting fraction of stars,” said Frenk. He believes that the NGST will detect primeval galaxies at redshifts of up to 10.

Peter Shaver of ESO reviewed the recent progress in detection techniques, in particular for observations of the first galaxies and quasars. The discovery of the Lyman alpha break in the spectrum of high-redshift objects has caused a revolution over the last five years or so, enabling more and more high-redshift galaxies to be recognized. “We are closing in on the reionization epoch,” he said. In his opinion, the furthest galaxy discovered to date is at a redshift of 5.74. He believes that claims for galaxies at a redshift of 6.68 are yet to be proven.
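Simple arithmetic shows why the Lyman-alpha feature is such a useful redshift marker, and why the hunt for the first galaxies moves into the infrared: cosmological redshift stretches every wavelength by a factor (1 + z). The sketch assumes the standard rest wavelength of hydrogen Lyman-alpha, about 121.6 nm, and evaluates it at the redshifts mentioned in this report.

```python
LYMAN_ALPHA_NM = 121.567  # rest-frame wavelength of hydrogen Lyman-alpha, nm


def observed_wavelength_nm(rest_nm: float, z: float) -> float:
    """Cosmological redshift stretches a wavelength by a factor (1 + z)."""
    return rest_nm * (1.0 + z)


for z in (5.74, 6.68, 10.0):
    wavelength = observed_wavelength_nm(LYMAN_ALPHA_NM, z)
    print(f"z = {z:5.2f}: Lyman-alpha observed at {wavelength:6.0f} nm")
```

At z = 5.74 the line sits near 820 nm, at the red end of optical detectors. At z = 10 it lies beyond 1.3 µm, in the near-infrared, which is where an instrument such as the NGST would have to look.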

With the NGST it will be possible to study the evolution of galaxies at high redshift, and also the quasar epoch around redshift 2. The NGST is due to be launched in around 2010. Another useful tool for studying early galaxies, due for launch in 2007, is ESA’s Far Infrared and Submillimetre Telescope (FIRST), explained Reinhard Genzel of Garching. These space observations will be complemented by the ground-based ALMA submillimetre array.

Gravitation

Moving on to another type of radiation altogether, Martin Huber from ESTEC summarized the session on gravitational-wave astronomy. Gravitational waves are ideal probes of the universe because they interact very weakly with matter and can carry huge energies. Their existence has long been established indirectly, through measurements of the energy lost by binary star systems, but they have never been detected directly.

Besides the classic resonant detectors, current ground-based detectors include the GEO 600 and TAMA interferometers. The next generation of ground-based detectors, Virgo and LIGO, will improve sensitivity by a factor of 10. “I am confident that we will detect gravity waves within the next decade,” said Huber. “However, it will be very difficult to pinpoint the sources.”

Most of the sources within the frequency range of the next detectors will be transient. Bernard Schutz of the Einstein Institute, Potsdam explained that the ideal sources are compact, such as black holes, and repeating, such as rotating binary systems. Ground detectors can only observe at frequencies above 1 Hz because the Earth’s background noise cannot be screened. Events in this frequency range are rare or weak, such as supernova collapses and compact binary spindown.

The future is the ESA cornerstone mission, LISA, which is to be built jointly with NASA. This interferometer in space will observe in the low-frequency window below 1 Hz, where emission occurs from many known strong sources, such as massive black holes and compact binary star systems.
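A rough way to see which sources fall in which window: for a binary on a circular orbit, the dominant gravitational-wave frequency is twice the orbital frequency, f = 2/P. The sketch below sorts a few hypothetical binaries into the ground-based and space-based bands, using the 1 Hz dividing line quoted in this report. The orbital periods are illustrative and do not refer to specific systems.

```python
GROUND_LOWER_LIMIT_HZ = 1.0  # approximate low-frequency limit for ground detectors


def gw_frequency_hz(orbital_period_s: float) -> float:
    """Dominant (quadrupole) GW frequency of a circular binary: twice the orbital frequency."""
    return 2.0 / orbital_period_s


# Hypothetical binaries, labelled by orbital period
binaries = [("1-hour binary", 3600.0), ("100-second binary", 100.0), ("10-ms binary", 0.01)]
for label, period_s in binaries:
    f = gw_frequency_hz(period_s)
    band = "ground-based" if f > GROUND_LOWER_LIMIT_HZ else "space (LISA)"
    print(f"{label}: f_gw = {f:.3g} Hz -> {band} band")
```

A binary with an orbital period of an hour radiates at about 0.6 mHz, far below the reach of ground-based detectors. Only very tight orbits, with periods of milliseconds, reach the ground-based band.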

An afternoon gravitation session served as a public presentation of the mission. Karsten Danzmann of Hannover gave a taster of the physics to come. “More than 90% of the universe is dark,” he said. “If part of the dark matter clumps, then gravitational wave detectors may be the only way to see it directly.”

Another exciting area is the stochastic gravitational wave background. “Just as the cosmic microwave background radiation shows us the universe when it was 300 000 years old, a gravitational wave background would be a picture of the Big Bang itself – when the universe was perhaps just 10⁻²⁴ s old,” said Danzmann. The planned LISA launch date is in 2010. “It is a completely new field,” said Huber. “We should expect the unexpected.”

The other session on gravitation showed how space experiments could really test the physics of gravity. In particular, Pierre Touboul of ONERA and Nicholas Lockerbie of Glasgow talked about two new satellite experiments that are planned to test the equivalence principle, or the universality of free fall. The French team is working on µSCOPE, which is to be launched in around 2003. It hopes to test the equivalence principle to 1 part in 10¹⁵ – an improvement of three orders of magnitude on current experiments. The ESA/NASA STEP mission could be launched in around 2005 and will test to 1 part in 10¹⁸. “String theory gives a natural explanation of why gravity is dynamic without assuming it,” said Thibault Damour of IHES, Bures-sur-Yvette. “In theory, not only is space not rigid but there are also coupling constants that imply a violation of the equivalence principle.”
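Equivalence-principle tests are usually quoted in terms of the Eötvös ratio η = 2|a₁ − a₂|/(a₁ + a₂), the fractional difference between the free-fall accelerations of two test masses. The sketch below shows what targets of 1 part in 10¹⁵ and 10¹⁸ mean for the differential acceleration that has to be measured. It assumes a satellite at roughly 700 km altitude, where Earth's gravity is about 8 m/s². The altitude is a round illustrative figure, not a mission parameter.

```python
G_SURFACE = 9.81     # m/s^2 at the Earth's surface
R_EARTH_KM = 6371.0  # mean Earth radius, km


def eotvos_ratio(a1: float, a2: float) -> float:
    """eta = 2 |a1 - a2| / (a1 + a2): fractional difference in free-fall accelerations."""
    return 2.0 * abs(a1 - a2) / (a1 + a2)


def gravity_at_altitude(altitude_km: float) -> float:
    """Inverse-square estimate of Earth's gravitational acceleration at a given altitude."""
    return G_SURFACE * (R_EARTH_KM / (R_EARTH_KM + altitude_km)) ** 2


g_orbit = gravity_at_altitude(700.0)  # assumed, illustrative orbit
for eta in (1e-15, 1e-18):
    print(f"eta = {eta:.0e}: resolve differential acceleration ~ {eta * g_orbit:.1e} m/s^2")
```

In orbit the test masses stay in free fall for the whole mission rather than for a brief drop, which is one reason space offers such a large gain in sensitivity.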

Accelerators in the sky

There is apparently no end to the mysteries of the heavens – our lifelong acquaintance with puny, everyday mechanisms makes us ill-equipped to understand the mighty forces at work in the depths of the universe.

New telescopes peering into the depths of space from fresh vantage points reveal sources pumping out energy at unimaginable rates. Many of these, whatever they emit and however they are seen, are poorly understood and can be conveniently grouped under the heading “extreme sources”. In his summary, P L Biermann of Bonn said: “the sky contains all this and a lot more”.

Jewels in the intense source crown are the mysterious gamma-ray bursts – now an everyday occurrence. Attempts to explain how so much energy can be released focus on extremely relativistic fireballs. Other fireballs – active galactic nuclei, black holes, etc – are also held to be responsible for X- and gamma-ray fireworks.

While electromagnetic radiation points back to its source, cosmic rays, deflected by intergalactic magnetic fields, do not reveal where they come from. The tip of the mystery cosmic-ray iceberg is now 24 cosmic-ray events that, in principle, should never be seen – their energy is beyond that “allowed” by interactions with the all-pervading cosmic microwave background. How can such extreme energies be produced, and how can the particles avoid losing them to the background radiation?
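The limit referred to here is usually called the Greisen-Zatsepin-Kuzmin (GZK) cutoff: above a certain energy, a proton that collides with a cosmic microwave background photon can produce a pion, and so loses energy. A back-of-envelope estimate of the threshold comes from requiring the invariant mass of the proton-photon pair to reach the proton mass plus the pion mass. The sketch assumes a head-on collision with a typical photon of energy about 2.7kT at T = 2.725 K. It is only an order-of-magnitude estimate, because photons in the high-energy tail of the blackbody spectrum bring the effective cutoff down to a few times 10¹⁹ eV.

```python
M_PROTON_EV = 938.272e6  # proton mass, eV
M_PION0_EV = 134.977e6   # neutral pion mass, eV
K_B_EV_PER_K = 8.617e-5  # Boltzmann constant, eV/K
T_CMB_K = 2.725          # CMB temperature, K


def photopion_threshold_ev(photon_energy_ev: float) -> float:
    """Proton energy needed for p + gamma -> p + pi0 in a head-on collision.

    Threshold condition on the invariant mass: m_p^2 + 4 E_p E_gamma = (m_p + m_pi)^2,
    valid for an ultra-relativistic proton meeting the photon head-on.
    """
    return ((M_PROTON_EV + M_PION0_EV) ** 2 - M_PROTON_EV ** 2) / (4.0 * photon_energy_ev)


typical_photon_ev = 2.7 * K_B_EV_PER_K * T_CMB_K  # mean CMB photon energy ~ 2.7 kT
threshold_ev = photopion_threshold_ev(typical_photon_ev)
print(f"typical CMB photon: {typical_photon_ev:.1e} eV; threshold ~ {threshold_ev:.1e} eV")
```

The estimate comes out near 10²⁰ eV. This is why events observed above such energies are so puzzling.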

Cosmic rays – once the point of entry for particle physics – are now a new point of departure. The universe must contain “radiogalaxy hot spots” – cosmic accelerators larger than a typical galaxy – to whirl charged particles to such “astronomical” energies.

Dark matter

That most of the universe is composed of invisible dark matter is perhaps the ultimate physics paradox. Attempts to uncover dark matter and to resolve this paradox are a major theme in astrophysics research, both theoretical and experimental.

At the CERN/ESA meeting, Alvaro de Rujula of CERN summarized the dark matter sessions, where direct searches for exotic particles, such as axions (“aaxions”, according to de Rujula), have yet to turn up positive evidence. More promising is gravitational lensing: objects can be invisible but still exert a gravitational pull, which can deflect visible light in transit.

One specialist area is gravitational microlensing, which looks for the effects of otherwise invisible objects as they cross the line of sight to a more distant luminous object. Interpreting this mass of results is still difficult, but de Rujula suggested that, while massive astronomical compact halo objects (MACHOs) are out of favour as dark matter candidates, weakly interacting massive particles (WIMPs) are coming in.

The DAMA sodium iodide detector at Gran Sasso has reported an annual variation in its signal that has been interpreted as possible evidence for galactic WIMPs. No such signal is seen by the Cryogenic Dark Matter Search (CDMS) experiment at Stanford, which uses silicon and germanium sensors.
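An annual variation is a signature of galactic WIMPs for a geometric reason. The Sun moves through the dark matter halo, and the Earth's orbital velocity adds to the Sun's motion in early summer and subtracts from it in winter, so the collision rate in a detector should rise and fall slightly over the year. A toy model of the expected rate is a cosine with a one-year period, peaking around 2 June. The baseline and modulation amplitude below are hypothetical numbers, not DAMA data.

```python
import math

DAYS_PER_YEAR = 365.25
PEAK_DAY = 152.5  # ~2 June, when Earth's orbital velocity adds most to the Sun's


def expected_rate(day_of_year: float, s0: float, sm: float) -> float:
    """Toy annual-modulation model: S(t) = S0 + Sm * cos(2 pi (t - t0) / T)."""
    return s0 + sm * math.cos(2.0 * math.pi * (day_of_year - PEAK_DAY) / DAYS_PER_YEAR)


# Hypothetical rates in arbitrary units: baseline 1.0, modulation amplitude 0.02
june = expected_rate(PEAK_DAY, 1.0, 0.02)
december = expected_rate(PEAK_DAY + DAYS_PER_YEAR / 2.0, 1.0, 0.02)
print(f"June rate {june:.3f}, December rate {december:.3f}")
```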

This part of the programme also covered neutrino astronomy. As well as providing a new window on the universe, neutrino astrophysics has offered evidence for neutrino mixing, and therefore for non-zero neutrino mass. A new understanding of neutrinos would shed fresh light on the basic interactions of nature.

The small size of any seasonal or day-night variation in solar neutrino signals places important limits on neutrino-mixing mechanisms. The big Super-Kamiokande detector in Japan dominates the world data on extra-terrestrial neutrinos and has now intercepted 17 terrestrial neutrinos fired from the KEK laboratory, some 250 km away. This is the first time that terrestrial neutrinos have been tracked over such a long path.
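Whether a neutrino arrives with the same flavour depends on the ratio of the distance travelled to its energy. In the simplest two-flavour picture, the probability that a muon neutrino survives is P = 1 − sin²2θ · sin²(1.27 Δm² L/E), with Δm² in eV², L in km and E in GeV. The sketch below applies this to the 250 km KEK baseline. The mixing, mass-difference and beam-energy values are illustrative assumptions, not results reported at the meeting.

```python
import math


def numu_survival_probability(sin2_2theta: float, delta_m2_ev2: float,
                              length_km: float, energy_gev: float) -> float:
    """Two-flavour survival probability 1 - sin^2(2 theta) sin^2(1.27 dm^2 L / E).

    Units: delta_m2 in eV^2, length in km, energy in GeV.
    """
    phase = 1.27 * delta_m2_ev2 * length_km / energy_gev
    return 1.0 - sin2_2theta * math.sin(phase) ** 2


# Illustrative values only: maximal mixing, dm^2 = 3e-3 eV^2, 1.3 GeV neutrinos
p_far = numu_survival_probability(1.0, 3e-3, 250.0, 1.3)
p_near = numu_survival_probability(1.0, 3e-3, 0.3, 1.3)
print(f"survival at 250 km: {p_far:.2f}; at 0.3 km: {p_near:.3f}")
```

With these assumed values, more than 40% of the muon neutrinos would have disappeared by the time they reached the far detector, while almost none would have changed flavour close to the source. This is why a long baseline is needed.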

Extra-terrestrial neutrino physics “has a long past and a brilliant future”, ventured de Rujula.

In particle physics the continual demands to handle and analyse increasing data rates and to attain greater precision provide a fertile ground for detector innovation.

Michel Spiro of Saclay, chairman of CERN’s LEP Experiments Committee, summarized the session covering the use and potential use in space experiments of instrumentation developed for high-energy physics.

Innovations in instrumentation

Detectors in space “see” X-ray and gamma radiation before it is absorbed by the atmosphere. Highly sensitive cryogenic X-ray detectors will be a useful new addition to the sensor armoury. The massive R&D programmes for the major experiments at CERN’s future LHC collider have already yielded an impressive array of techniques, such as pixel detectors as “eyes” and scintillators for energy measurement, which could also find uses in space. Time projection chambers are another means of providing remarkable images of physics beyond the atmosphere.

As well as the detectors, read-out mechanisms are developing quickly. Sensors and read-out chips can be built separately, each exploiting the technology best suited to it. Photomultiplier technology has received considerable impetus from experiments studying neutrinos.

The LHC experiments are also blazing new trails in data acquisition and handling (see “Grid” feature) and in semiconductor technology.

Spiro highlighted several new flagship space-borne experiments that exploit particle physics know-how: the AMS detector for the Space Station and the GLAST telescope, which is due for launch in 2005. The Supernova/Acceleration Probe (SNAP) and Extreme Universe Space Observatory (EUSO) proposals could continue this tradition.

Gert Viertel of ETH Zurich summarized the instrumentation currently used in space. Here the need for very high timing accuracy has driven the development of precise atomic clocks. Pixel detectors already have a distinguished track record of astronomical measurements, and superconducting tunnel junctions are poised to open a new chapter in space research.

Away from the detectors, the highly successful GEANT simulation software developed for particle physics is finding increasing use in astrophysics and astronomy.

While particle physics is a fertile breeding ground for new detector technology, it is not the only variable in the equation. Space-borne experiments, which must operate for years with little or no maintenance or manual intervention, have their own special requirements.

This new contact between particle physicists and cosmophysicists is already paying dividends on the instrumentation front. CERN’s “recognized experiment” status now covers a range of studies that do not use accelerator beams, but ensure that the laboratory remains a focal point of this physics. At the start of the millennium, the rapidly maturing field of cosmophysics is poised to make a major contribution to our knowledge and understanding of the universe.