The most striking recent development in cosmology has come from supernova studies, which reveal how the expansion rate of the universe changes with time. Rather than slowing down, the universe is expanding faster as time goes by. Pedro G Ferreira of CERN explains how theorists are faced with the dilemma of living with the controversial cosmological constant or having to postulate a new form of matter.

With no ruler in the sky, astronomers have to locate visible cosmic milestones to help them to measure distances. These distance indicators can be visible objects, such as stars or galaxies, or effects, such as explosions, gravitational lensing or the thermal scattering of background radiation in cluster cores.

As the light from these distant milestones rushes towards us, its wavelength is stretched (redshifted) by the expansion of the universe. In the classic picture this expansion was thought to be decelerating owing to the pull of gravity.

In 1929 Edwin Hubble showed that the redshift and distance of nearby galaxies obeyed a linear law. If the universe is expanding, the slope of this relation (now called the Hubble constant) gives a direct estimate of its rate of expansion and, as a consequence, of its age.
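As a rough illustration, Hubble's law can be written v = H0 d. The inverse 1/H0 (the "Hubble time") is the age the universe would have if it had always expanded at today's rate. The sketch below converts H0 from the customary km/s/Mpc into gigayears. The sample H0 values are illustrative assumptions, not figures from this article.

```python
# Hubble time t_H = 1/H0: the age the universe would have if it had always
# expanded at today's rate. H0 is conventionally quoted in km/s/Mpc.

KM_PER_MPC = 3.0856775814913673e19   # kilometres in one megaparsec
SECONDS_PER_GYR = 3.15576e16          # Julian year (365.25 days) times 1e9


def hubble_time_gyr(h0_km_s_mpc: float) -> float:
    """Return 1/H0 in gigayears for H0 given in km/s/Mpc."""
    h0_per_second = h0_km_s_mpc / KM_PER_MPC   # km/s/Mpc -> 1/s
    return 1.0 / h0_per_second / SECONDS_PER_GYR


def hubble_constant_for_age(age_gyr: float) -> float:
    """Inverse relation: the H0 (km/s/Mpc) whose Hubble time equals age_gyr."""
    return KM_PER_MPC / (age_gyr * SECONDS_PER_GYR)


if __name__ == "__main__":
    for h0 in (50.0, 65.0, 70.0):   # illustrative values only
        print(f"H0 = {h0:5.1f} km/s/Mpc -> 1/H0 = {hubble_time_gyr(h0):5.2f} Gyr")
    print(f"15 Gyr corresponds to H0 = {hubble_constant_for_age(15.0):.1f} km/s/Mpc")
```

Because gravity was expected to slow the expansion, the true age of a decelerating universe is shorter than 1/H0. For a universe containing only ordinary matter it is two-thirds of 1/H0.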

The difficulty of these measurements is reflected in the result that Hubble obtained: the universe was only a thousand million years old, which would make it younger than most astrophysical objects. Current measurements lead to a generally accepted age of 15 gigayears, although different methods still give different values.

Cosmic fireworks

Massive stars are doomed to a violent death as supernovae. The immense gravitational crush inside such a star heats up its core, tripping new thermonuclear switches to release fusion energy. When its nuclear fuel is spent, the star can no longer resist the remorseless pull of gravity and so it implodes, cooking a stew of heavy nuclei and compressing its component atoms into mere neutrons.

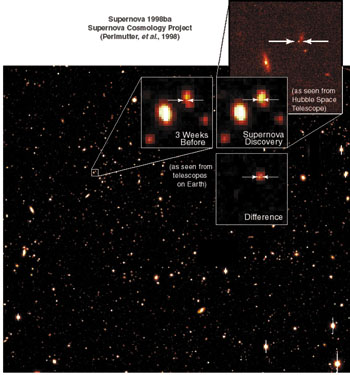

Like a rubber ball that has been squeezed, the remnant of the star springs back in a huge shockwave that flings nuclear residue far out into space and releases a characteristic light in the sky. The duration and brightness of nearby supernovae are well known, so comparing the observed variation in brightness (the light curves) of distant supernovae with those of closer ones gives accurate measurements of their distances.
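For a "standard candle" whose intrinsic peak brightness is known, the comparison is usually expressed through the distance modulus m - M = 5 log10(d / 10 pc), where m is the apparent and M the absolute magnitude. The sketch below assumes a hypothetical peak absolute magnitude M_PEAK = -19.3, chosen only for illustration. At large redshift, d is strictly the luminosity distance, which itself depends on the cosmological model.

```python
import math


def luminosity_distance_pc(apparent_mag: float, absolute_mag: float) -> float:
    """Distance in parsecs from the distance modulus m - M = 5 log10(d / 10 pc)."""
    return 10.0 ** ((apparent_mag - absolute_mag + 5.0) / 5.0)


def distance_ratio_from_flux(flux_near: float, flux_far: float) -> float:
    """For two identical sources, flux falls as 1/d^2,
    so d_far / d_near = sqrt(F_near / F_far)."""
    return math.sqrt(flux_near / flux_far)


if __name__ == "__main__":
    M_PEAK = -19.3   # assumed, illustrative peak absolute magnitude
    for m in (14.0, 19.0, 24.0):
        d_mpc = luminosity_distance_pc(m, M_PEAK) / 1e6
        print(f"m = {m:4.1f} -> d ~ {d_mpc:8.1f} Mpc")
    # An identical supernova that looks 100 times fainter is 10 times farther away.
    print(distance_ratio_from_flux(100.0, 1.0))
```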

The drawback of this method is that these events are rare. Only a few supernovae occur every thousand years in a typical galaxy, so finding them means looking at large numbers of galaxies at a time.
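The rarity argument is simple arithmetic. The expected number of explosions is the rate per galaxy times the number of galaxies times the observing time. This sketch assumes a rate of 3 per thousand years per galaxy (our reading of "a few") and uses Poisson statistics for the chance of catching at least one.

```python
import math


def expected_supernovae(rate_per_galaxy_per_year: float,
                        n_galaxies: int, years: float) -> float:
    """Mean number of events: rate x number of galaxies x observing time."""
    return rate_per_galaxy_per_year * n_galaxies * years


def prob_at_least_one(expected: float) -> float:
    """Chance of one or more events, treating explosions as independent
    random events (Poisson statistics)."""
    return 1.0 - math.exp(-expected)


if __name__ == "__main__":
    RATE = 3e-3   # assumed: "a few" per thousand years, taken here as 3
    for n in (1, 1_000, 10_000):
        mu = expected_supernovae(RATE, n, years=1.0)
        print(f"{n:6d} galaxies, 1 year: expect {mu:6.3f}, "
              f"P(>=1) = {prob_at_least_one(mu):.3f}")
```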

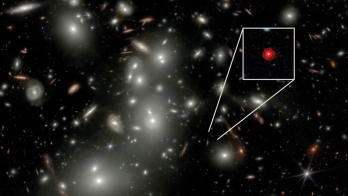

The Supernova Cosmology Project and the HighZ Supernovae Project have developed a method to find and then follow supernova explosions. These groups systematically scan large patches of the sky for sudden supernova flashes, then monitor their evolution with optical telescopes, obtaining accurate measurements of the light curves and spectra.
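The search step amounts to spotting objects that have brightened between two visits to the same patch of sky. The toy below uses made-up fluxes and a hypothetical function name, `flag_brightenings`. It flags fields whose flux rose by more than a threshold. A real search compares whole images (for instance by subtracting a reference image) and then follows candidates with photometry and spectra.

```python
from typing import List, Sequence


def flag_brightenings(reference: Sequence[float], new: Sequence[float],
                      threshold: float) -> List[int]:
    """Indices of fields whose measured flux rose by more than `threshold`
    between a reference epoch and a later epoch (a toy stand-in for
    comparing two images of the same patch of sky)."""
    if len(reference) != len(new):
        raise ValueError("both epochs must cover the same fields")
    return [i for i, (before, after) in enumerate(zip(reference, new))
            if after - before > threshold]


if __name__ == "__main__":
    # Made-up fluxes for four galaxies, observed a few weeks apart.
    reference_epoch = [100.0, 250.0, 80.0, 120.0]
    later_epoch = [101.0, 249.0, 160.0, 119.5]
    candidates = flag_brightenings(reference_epoch, later_epoch, threshold=20.0)
    print("follow up:", candidates)
```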

In January 1998 the Supernova Cosmology Project presented its 1997 harvest: the analysis of 42 newly discovered distant supernovae. To everyone’s surprise, these supernovae looked dimmer than expected in the standard, decelerating model of the universe. Instead of slowing down, the expansion of the universe appeared to be speeding up. The results were confirmed by the rival HighZ Supernova team and have become an essential ingredient of current cosmological model building.
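A short calculation shows why "dimmer" points to acceleration. For a spatially flat universe, the luminosity distance in units of c/H0 is d_L = (1 + z) times the integral from 0 to z of dz'/E(z'), with E(z) = sqrt(Om (1 + z)^3 + OL). The sketch compares a matter-only, decelerating model (Om = 1) with an accelerating one. The values Om = 0.3 and OL = 0.7 are illustrative assumptions, and radiation is neglected.

```python
import math


def simpson(f, a, b, n=1000):
    """Composite Simpson's rule on [a, b] with an even number n of intervals."""
    if n % 2:
        n += 1
    h = (b - a) / n
    total = f(a) + f(b)
    for i in range(1, n):
        total += (4 if i % 2 else 2) * f(a + i * h)
    return total * h / 3.0


def lum_distance(z, omega_m, omega_lambda):
    """Luminosity distance in units of c/H0 for a spatially flat universe
    containing matter and a cosmological constant (radiation neglected)."""
    if abs(omega_m + omega_lambda - 1.0) > 1e-9:
        raise ValueError("this sketch only handles flat models")

    def inv_e(zp):
        return 1.0 / math.sqrt(omega_m * (1.0 + zp) ** 3 + omega_lambda)

    return (1.0 + z) * simpson(inv_e, 0.0, z)


def extra_dimming_mag(z, omega_m=0.3, omega_lambda=0.7):
    """How many magnitudes fainter a standard candle at redshift z looks in the
    accelerating model than in a matter-only, decelerating one."""
    ratio = lum_distance(z, omega_m, omega_lambda) / lum_distance(z, 1.0, 0.0)
    return 5.0 * math.log10(ratio)


if __name__ == "__main__":
    for z in (0.1, 0.3, 0.5, 0.8):
        print(f"z = {z:.1f}: {extra_dimming_mag(z):.2f} mag fainter")
```

With these assumed parameters, a supernova at z = 0.5 comes out about 0.4 magnitudes fainter in the accelerating model.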

The systematic analysis of these results has been thorough, but a few sceptics remain. They raise two main criticisms. First, distant supernovae could look dimmer because intervening dust scatters the light. Second, are we completely sure that these distant supernovae explode in the same way as those that we see closer to us?

Both groups have set up campaigns to understand supernovae better, and results at different wavelengths will map out the characteristics of intergalactic dust with great precision.
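Observing at more than one wavelength helps because ordinary dust dims blue light more than red, leaving a reddened colour behind. Below is a minimal sketch of the usual colour-excess correction, A_V = R_V E(B-V), where E(B-V) is the observed minus the intrinsic B - V colour. It assumes Milky-Way-like dust with R_V = 3.1 and a known intrinsic colour, both assumptions here. Dust that dimmed all colours equally would leave no such colour signature.

```python
def dust_corrected_mag(m_b: float, m_v: float, intrinsic_b_minus_v: float,
                       r_v: float = 3.1) -> float:
    """Correct a V-band magnitude for dust extinction.

    E(B-V) = (B - V)_observed - (B - V)_intrinsic is the colour excess and
    A_V = R_V * E(B-V) is the V-band extinction. r_v = 3.1 is typical of dust
    in our own Galaxy; dust elsewhere may differ (an assumption here).
    """
    colour_excess = (m_b - m_v) - intrinsic_b_minus_v   # E(B-V)
    a_v = r_v * colour_excess                             # A_V in magnitudes
    return m_v - a_v                                       # dust-free V magnitude


if __name__ == "__main__":
    # Made-up magnitudes for a supernova assumed to have intrinsic B - V = 0.
    print(round(dust_corrected_mag(23.3, 23.0, 0.0), 2))
```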

Accelerating universe

With an accelerating universe, physicists had some explaining to do. Whether the expansion accelerates or decelerates is dictated by the sum of the energy density of the matter in the universe and three times its pressure, one pressure contribution for each of the three spatial directions. If this sum is positive, the universe decelerates; if it is negative, the universe accelerates (the energy density is always positive).
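In equations, this is the acceleration equation of general relativity for a uniform universe with scale factor a(t), energy density ρ and pressure p, written in units with c = 1. The factor of 3 is the one pressure contribution per spatial direction:

```latex
\frac{\ddot{a}}{a} = -\frac{4\pi G}{3}\,\left(\rho + 3p\right)
```

So ρ + 3p > 0 gives a negative second derivative of a (deceleration), and ρ + 3p < 0 gives a positive one (acceleration).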

For example, for a universe consisting mostly of ordinary massive particles, the pressure is essentially negligible. For a universe dominated by photons or massless neutrinos, the pressure is equal to one-third of the energy density. For neither type of matter is the pressure negative, so if either dominates, the universe will decelerate.
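Writing the pressure as p = w ρ, the deceleration test reduces to the sign of ρ(1 + 3w). The small sketch below uses exact fractions. The function name `source_term` and the component labels are illustrative choices.

```python
from fractions import Fraction


def source_term(rho: Fraction, w: Fraction) -> Fraction:
    """rho + 3p for a component with pressure p = w * rho (units with c = 1).
    A positive value means the component decelerates the expansion."""
    return rho * (1 + 3 * w)


# Equation-of-state parameters w = p / rho for the two cases in the text.
COMPONENTS = {
    "ordinary massive particles (pressure negligible)": Fraction(0),
    "photons or massless neutrinos (p = rho/3)": Fraction(1, 3),
}

if __name__ == "__main__":
    for name, w in COMPONENTS.items():
        s = source_term(Fraction(1), w)
        verdict = "decelerates" if s > 0 else "accelerates"
        print(f"{name}: rho + 3p = {s} rho -> {verdict}")
```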

An accelerating universe needs a negative pressure to counterbalance the energy density. The standard ploy is the cosmological constant. Introduced by Einstein in his general theory of relativity to counterbalance the pull of gravity and therefore lead to a static universe, the cosmological constant has been on the one hand a convenient fix for the Big Bang cosmology when the theory doesn’t fit the data, but on the other hand one of the major conceptual problems in particle physics and cosmology.
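Combining the condition for acceleration with the vacuum-energy equation of state p = -ρ shows why a cosmological constant does the job (units with c = 1):

```latex
\rho + 3p < 0 \;\Longleftrightarrow\; p < -\tfrac{1}{3}\rho ,
\qquad
p_\Lambda = -\rho_\Lambda \;\Rightarrow\; \rho_\Lambda + 3p_\Lambda = -2\rho_\Lambda < 0 .
```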

The problem has been stated many times: if we add up the vacuum fluctuations from all of the various quantum fields we know of, we naturally obtain a cosmological constant with an energy density of $10^{29}\,\mathrm{eV}^4$. With such a large value, Einstein’s theory would essentially predict that our universe is flying apart with absolutely no possibility of forming galaxies, stars or planets.
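The scaling behind such estimates can be sketched for a single massless bosonic field. Each mode of wavenumber k carries a zero-point energy ω_k/2 = |k|/2, in units with ħ = c = 1, so energy densities come out in eV^4. Summing all modes up to an assumed cutoff k_max gives:

```latex
\rho_{\mathrm{vac}}
  = \int_{|\mathbf{k}|<k_{\max}} \frac{d^{3}k}{(2\pi)^{3}}\,\frac{|\mathbf{k}|}{2}
  = \frac{1}{4\pi^{2}} \int_{0}^{k_{\max}} k^{3}\,dk
  = \frac{k_{\max}^{4}}{16\pi^{2}}
```

The result grows as the fourth power of the cutoff, so any cutoff set at the energy scales of known particle physics yields an enormous vacuum energy.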

This was always an embarrassment for theorists, but if the cosmological constant were zero, there was always the hope that they had “nothing” to worry about.

There have been many attempts to get rid of the cosmological constant. One of the more promising possibilities is that supersymmetry (an as yet unobserved symmetry between bosons and fermions) may lead to an exact cancellation between all of the various contributions to the vacuum energy density. Suggestions abound, but a compelling model that explains why the cosmological constant is so small has yet to emerge.
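Schematically, suppose every field is massless and the same cutoff k_max (an assumption) is applied to all of them. Bosonic and fermionic zero-point energies then enter with opposite signs:

```latex
\rho_{\mathrm{vac}} \simeq \left(N_{B} - N_{F}\right)\frac{k_{\max}^{4}}{16\pi^{2}}
```

Here N_B and N_F count bosonic and fermionic degrees of freedom. Exact supersymmetry pairs them so that N_B = N_F and this term vanishes. Because supersymmetry has not been observed, it would have to be broken in our world, and the cancellation would then no longer be exact.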

A highly speculative possibility relies on a particular feature of the quantum theory of gravity. Fluctuations in the fabric of space-time modify the local topology, creating a quantum foam of holes and handles (wormholes). The overall effect would be to drive the cosmological constant to zero.

The problem of how to incorporate the cosmological constant into a sensible theory of matter remains unresolved and, if anything, has become even harder to tackle with the supernova results. Until now an exact cancellation was needed, so one could argue that some fundamental symmetry forbids the cosmological constant from being anything other than zero. With the discovery of an accelerating universe, however, a very special cancellation is necessary: a cosmic coordination of very big numbers that add up to one small number. If the supernova results are indeed measuring a constant energy density, it is at the level of $10^{-3}\,\mathrm{eV}^4$.
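Taking the two energy densities quoted in this article at face value, we can compute the mismatch and the corresponding energy scales ρ^(1/4) directly. The constant names below are our own labels.

```python
import math

# Energy densities as quoted in the text, in eV^4 (natural units, hbar = c = 1).
RHO_NAIVE_VACUUM = 1e29   # summed vacuum fluctuations of known quantum fields
RHO_SUPERNOVAE = 1e-3     # level suggested by the supernova results


def orders_of_magnitude(big: float, small: float) -> float:
    """Number of powers of ten separating two positive quantities."""
    return math.log10(big / small)


def energy_scale_ev(rho_ev4: float) -> float:
    """Energy scale E (in eV) defined by E**4 = rho."""
    return rho_ev4 ** 0.25


if __name__ == "__main__":
    gap = orders_of_magnitude(RHO_NAIVE_VACUUM, RHO_SUPERNOVAE)
    print(f"required cancellation: about 1 part in 10^{gap:.0f}")
    for label, rho in (("naive vacuum", RHO_NAIVE_VACUUM),
                       ("supernovae", RHO_SUPERNOVAE)):
        print(f"{label:>12}: E = rho^(1/4) = {energy_scale_ev(rho):.3g} eV")
```

On these figures, the large contributions would have to cancel to about one part in 10^32, leaving a residue whose energy scale is roughly 0.2 eV.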