Solving the challenges of sharing, reproducing and reusing results in particle physics seems more feasible than ever thanks to recent technological developments.

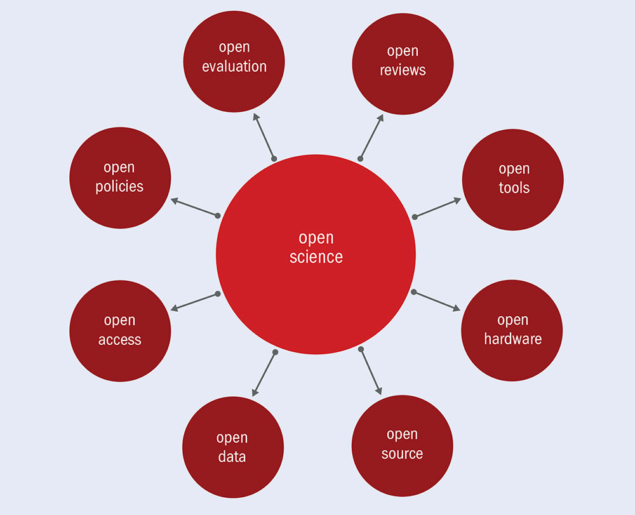

The goal of practising science in such a way that others can collaborate, question and contribute – known as “open science” – long predates the web. One could even argue that it began with the first academic journal 350 years ago, which enabled scientists to share knowledge and resources to foster progress. But the web offered opportunities far beyond anything before it, quickly transforming academic publishing and giving rise to greater sharing in areas such as software. Alongside the open-source (see Inspired by software), open-access (see A turning point for open-access publishing) and open-data (see Preserving the legacy of particle physics) movements, the broader idea of open science took shape, aiming to encompass the scientific process as a whole.

Today, numerous research communities, political circles and funding bodies view open science and reproducible research as vital to accelerate future discoveries. Yet, to fully reap the benefits of open and reproducible research, it is necessary to start implementing tools to power a more profound change in the way we conduct and perceive research. This poses both sociological and technological challenges, spanning the conceptualisation of research projects, the conduct of research itself, and the way we organise peer review and assess the results of projects and grants. New technologies have brought open science within our reach, and it is now up to scientific communities to agree on the extent to which they want to embrace this vision.

Particle physicists were among the first to embrace the open-science movement, sharing preprints and building a deep culture of using and sharing open-source software. The cost and complexity of experimental particle physics, which make complete replication of measurements unfeasible, present unique challenges in terms of open data and scientific reproducibility. One may even argue that openness itself, in the sense of unfettered access to data from its inception, is not particularly advantageous.

Take the existing data-management policies of the LHC collaborations: while physicists generally strive to be open in their research, the complexity of the data and analysis procedures means that data become publicly open only after a certain embargo period that is used to assess their correctness. The science is thus born “closed”. Instead of thinking about “open data” from its inception, it is more useful to speak about FAIR (findable, accessible, interoperable and reusable) data, a term coined by the FORCE11 community. The data should be FAIR throughout the scientific process, from being initially closed to being made meaningfully open later to those outside the experimental collaborations.

True open science demands more than simply making data available: it needs to concern itself with providing information on how to repeat or verify an analysis performed over given datasets, producing results that can be reused by others for comparison, confirmation or simply for deeper understanding and inspiration. This requires runnable examples of how the research was performed, accompanied by software, documentation, runnable scripts, notebooks, workflows and compute environments. It is often too late to try to document research in such detail once it has been published.

Two FAIR data repositories for particle physics, the “closed” CERN Analysis Preservation portal and the “open” CERN Open Data portal, emerged five years ago to address the community’s open-science needs. These digital repositories enable physicists to preserve, document, organise and share datasets, code and tools used during analyses. A flexible metadata structure helps researchers to define everything from experimental configurations to data samples, from analysis code to software libraries and environments used to analyse the data, accompanied by documentation and links to presentations and publications. The result is a standard way to describe and document an analysis for the purposes of discoverability and reproducibility.
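To make the idea of a structured analysis description more concrete, here is a minimal sketch of what such a metadata record might contain. It assumes a simple flat record; the class `AnalysisRecord` and all its field names are hypothetical and are not the actual schema of the CERN Analysis Preservation portal. The dataset name, URLs and container image are placeholders. The `visibility` field is included only to illustrate a record that starts out closed and can later be opened.

```python
import json
from dataclasses import asdict, dataclass, field
from typing import List


@dataclass
class AnalysisRecord:
    """Hypothetical metadata record describing one physics analysis.

    Field names are illustrative only; they are not the schema used
    by the CERN Analysis Preservation portal.
    """
    title: str
    experiment: str
    input_datasets: List[str]    # data and simulated samples the analysis reads
    code_repository: str         # location of the analysis code
    software_environment: str    # e.g. a container image reference
    documentation: List[str] = field(default_factory=list)  # links to notes, talks, papers
    visibility: str = "collaboration"  # "collaboration" (closed) or "public" (open)

    def to_json(self) -> str:
        # Sorted keys give a stable, diff-friendly serialisation.
        return json.dumps(asdict(self), indent=2, sort_keys=True)

    @classmethod
    def from_json(cls, text: str) -> "AnalysisRecord":
        # Rebuild the record from its JSON form.
        return cls(**json.loads(text))


# Placeholder values for illustration only.
record = AnalysisRecord(
    title="Example dimuon analysis",
    experiment="CMS",
    input_datasets=["hypothetical-dataset-2012"],
    code_repository="https://example.org/analysis.git",
    software_environment="example-registry/analysis-env:1.0",
    documentation=["https://example.org/analysis-note.pdf"],
)

# Round-tripping through JSON loses nothing, so the record can be stored and re-read.
restored = AnalysisRecord.from_json(record.to_json())
```

Keeping the description machine-readable from the start is what makes it findable and reusable later. A human-written summary alone cannot be validated or searched in the same way.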

Recent advancements in the IT industry allow us to encapsulate the compute environments where the analysis was conducted. Capturing information about how the analysis was carried out can be achieved via a set of runnable scripts, notebooks, structured workflows and “containerised” pipelines. Complementing the data repositories, a third service named REANA (reusable analyses) allows researchers to submit parameterised computational workflows to run on remote compute clouds. It can be used to reinterpret preserved analyses but also to run “active” analyses before they are published and preserved, with the underlying philosophy that physics analyses should be automated from inception so that they can be executed without manual intervention. Future reuse and reinterpretation start with the first commit of the analysis code; altering an already-finished analysis to facilitate its eventual reuse after publication is often too late.
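What “parameterised” and “without manual intervention” mean in practice can be sketched with a toy serial workflow runner. This is a minimal local sketch under stated assumptions, not REANA’s API: `Step`, `resolve`, `run`, the script names `select.py` and `fit.py`, and the container image are all hypothetical. It is only loosely modelled on the idea of a serial workflow in which each step names a container environment and a list of commands containing `${parameter}` placeholders. Here the environment is merely recorded; no container is launched.

```python
import shlex
import subprocess
from dataclasses import dataclass
from string import Template
from typing import Dict, List


@dataclass
class Step:
    """One step of a hypothetical serial workflow."""
    name: str
    environment: str     # container image the step is meant to run in (recorded only)
    commands: List[str]  # command templates with ${parameter} placeholders


def resolve(steps: List[Step], parameters: Dict[str, object]) -> List[str]:
    """Fill every ${parameter} placeholder, shell-quoting each value."""
    quoted = {key: shlex.quote(str(value)) for key, value in parameters.items()}
    resolved = []
    for step in steps:
        for command in step.commands:
            # substitute() raises KeyError for any placeholder without a value,
            # so a partially configured workflow never starts.
            resolved.append(Template(command).substitute(quoted))
    return resolved


def run(steps: List[Step], parameters: Dict[str, object], dry_run: bool = False) -> List[str]:
    """Execute all steps in order with no manual intervention; return the commands."""
    commands = resolve(steps, parameters)
    if not dry_run:
        for command in commands:
            # check=True aborts the workflow at the first failing command.
            subprocess.run(shlex.split(command), check=True)
    return commands


# Hypothetical two-step analysis: an event selection followed by a fit.
workflow = [
    Step("select", "example-registry/analysis-env:1.0",
         ["python select.py --input ${inputfile} --ptcut ${ptcut} --output selected.root"]),
    Step("fit", "example-registry/analysis-env:1.0",
         ["python fit.py --input selected.root --output ${outputfile}"]),
]

# Reinterpretation means rerunning the same workflow with different parameter values.
planned = run(workflow,
              {"inputfile": "data/events.root", "ptcut": 25, "outputfile": "results/fit.json"},
              dry_run=True)
```

Because every input enters through the parameter dictionary, changing a selection cut or an input sample requires no edits to the analysis steps themselves. That separation is what lets a preserved analysis be re-executed or reinterpreted by someone other than its authors.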

Full control

The key guiding principle of the analysis preservation and reuse framework is to leave the decision as to when a dataset or a complete analysis is shared, privately or publicly, in the hands of the researchers. This gives the experiment collaborations full control over the release procedures, and thus fully supports internal processing and review protocols before the results are published on community services, such as arXiv, HEPData and INSPIRE.

The CERN Open Data portal was launched in 2014 amid a discussion as to whether primary particle-physics data would find any use outside of the LHC collaborations. Within a few years, the first paper based on open data from the CMS experiment was published (see Preserving the legacy of particle physics).

Three decades after the web was born, science is being shared more openly than ever and particle physics is at the forefront of this movement. As we have seen, however, simple compliance with data and software openness is not enough: we also need to capture, from the start of the research process, runnable recipes, software environments, computational workflows and notebooks. The increasing demand from funding agencies and policymakers for open data-management plans, coupled with progress in information technology, leads us to believe that the time is ripe for this change.

Sharing research in an easily reproducible and reusable manner will facilitate knowledge transfer within and between research teams, accelerating the scientific process. This fills us with hope that three decades from now, even if future generations may not be able to run our current code on their futuristic hardware platforms, they will at least be well equipped to understand the processes behind today’s published research in sufficient detail to be able to check our results and potentially reveal something new.