A second ring is now being planned for the Beijing Electron-Positron Collider (BEPC) at the Institute of High Energy Physics (IHEP). Precision measurements at BEPC and the successful completion of a run that collected 50 million J/psi particles, together with the planned upgrade to BEPC II, have attracted considerable attention.

The earlier upgrade design was based on multiple bunches and bunch trains in a “pretzel” orbit in the existing BEPC storage ring, which would have increased the luminosity ten-fold. (The existing BEPC contains adjacent counter-rotating electron and positron beams in a single ring, which is the standard approach.)

In parallel, an upgrade of the Beijing Spectrometer, BES III, was designed to handle high event rates and to reduce systematic errors. The Chinese funding agency approved these upgrades.

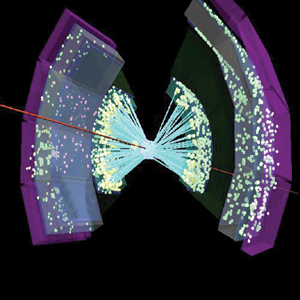

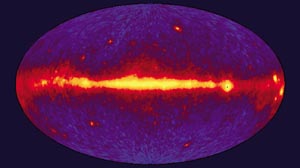

Many recent physics results have underlined the importance of high-precision measurements. In particular, precision measurements in the tau-charm region have a unique advantage for many interesting physics studies, such as searches for glueballs (particles without quarks) and quark-gluon hybrids, light hadron spectroscopy, the J/psi family, and excited baryons.

Special workshops were held recently at SLAC and Cornell in the US to discuss this physics, and there are proposals to build a new machine at SLAC (PEP-N) and to lower the beam energy of Cornell’s CESR ring to run in this energy region. The interest of a number of other laboratories in this physics underlines its importance.

To extend its physics potential and to be more competitive, IHEP recently modified the BEPC II design to a double ring. This significantly improves the expected performance, with a calculated luminosity of 10³³ cm⁻² s⁻¹ at a beam energy of 1.55 GeV.

An international review of the feasibility of the new design was held on 2-6 April in Beijing. It was conducted in two partially overlapping segments, the first dealing with the accelerator collider programme and the second with the detector for the upgraded facility. Two separate reports were prepared under the chairmanship of Alex Chao of SLAC and Michel Davier of Orsay. Former SLAC director W K H Panofsky summarized: “After much excellent work leading to the feasibility study report, there is no basic reason why a luminosity greater than 3 × 10³² cm⁻² s⁻¹ or even 10³³ could not be reached. The review committee recommended strongly the double-ring option.”

The double-ring design requires a new storage ring of slightly smaller radius to be built adjacent to the existing storage ring. The two halves of the new ring and of the old ring will be linked at two interaction points to form two identical rings, each made up of one half of the old ring and one half of the new. Each new ring can be filled with up to 93 bunches with maximum beam current of 1.1 A at a beam energy of 1.55 GeV.
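As a rough illustration of what these fill parameters imply, the sketch below divides the quoted maximum beam current evenly over 93 bunches and converts the result to a particle count per bunch. The ring circumference of 240 m is an assumed round figure and is not given in the text; the function names are hypothetical, and the beam is treated as ultra-relativistic so that the revolution frequency is simply c divided by the circumference.

```python
# Back-of-envelope arithmetic only. The circumference passed in below is an
# ASSUMPTION (not stated in the article); function names are illustrative.

ELEMENTARY_CHARGE_C = 1.602176634e-19   # coulombs
SPEED_OF_LIGHT_M_S = 299_792_458.0      # metres per second


def current_per_bunch(total_current_a: float, n_bunches: int) -> float:
    """Average current carried by one bunch, assuming an even fill."""
    return total_current_a / n_bunches


def particles_per_bunch(bunch_current_a: float, circumference_m: float) -> float:
    """Number of particles in a bunch that produces the given average current.

    Assumes beta is approximately 1, so f_rev = c / circumference.
    """
    revolution_frequency_hz = SPEED_OF_LIGHT_M_S / circumference_m
    return bunch_current_a / (ELEMENTARY_CHARGE_C * revolution_frequency_hz)


if __name__ == "__main__":
    i_bunch = current_per_bunch(total_current_a=1.1, n_bunches=93)
    # 240 m is an assumed circumference for illustration only.
    n_particles = particles_per_bunch(i_bunch, circumference_m=240.0)
    print(f"current per bunch: {i_bunch * 1e3:.1f} mA")
    print(f"particles per bunch: {n_particles:.2e}")
```

Under these assumptions each bunch carries roughly 12 mA, corresponding to a few times 10¹⁰ particles; a different circumference scales the particle count proportionally.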

The beams will collide at the south interaction point with a horizontal crossing angle of 11 mrad. To reduce the beam length, superconducting micro-beta quadrupoles will be installed near the interaction region. Superconducting cavities with a 499.8 MHz radiofrequency system will further reduce the bunch length and also provide much higher power. Low impedance vacuum chambers will be used. The upgrade of the linac injector allows full-energy injection up to 1.89 GeV and a positron injection rate of 50 mA/min. The instrumentation and control system will also be upgraded. The calculated luminosity at a beam energy of 1.55 GeV is 10³³ cm⁻² s⁻¹, which is an improvement of two orders of magnitude.

To retain dedicated synchrotron radiation running in the existing outer ring, a bridge will connect the two half outer rings at the north interaction point. At the collision point of the south interaction region, special dipole coils will be installed in the superconducting quadrupoles to keep the beam in the outer ring during dedicated synchrotron radiation running. The beam current during dedicated synchrotron radiation running could be higher than 150 mA at 2.8 GeV.

Compared with the pretzel design using a single ring, the double-ring design is much more competitive in performance, has fewer technical risks, and costs only about 50% more.

The proposed design of the upgraded Beijing Spectrometer (BES III) has also been improved significantly to match the high performance of the double-ring design. Starting from the interaction point, the BES III detector consists of a scintillating fibre detector; a main drift chamber; time-of-flight counters; a barrel electromagnetic calorimeter; an end-cap electromagnetic calorimeter; a superconducting magnet; and a muon detector. The superconducting magnet, which provides a field of 1.2 T, has a length of 3.2 m, an inner radius of 1.05 m and an outer radius of 1.45 m.

The scintillating fibre detector provides trigger signals and reduces cosmic-ray background. It consists of two superlayers, with two layers of scintillating fibres in each, that are read out at both ends by avalanche photodiodes via clear fibres. The position resolution per superlayer is expected to be 80 µm radially and 1 mm along the beam axis.

The main drift chamber (length 1906 mm, inner radius 70 mm and outer radius 660 mm) consists of 36 layers with small cells and with aluminium-filled (1 µm) wire and helium-based gas to reduce multiple scattering. The single-wire resolution is expected to be better than 130 µm. The solid angle coverage for tracks going through all layers is 93%. To provide space for the superconducting micro-beta quadrupoles and to reduce the background, the end plates of the inner part will be step-shaped. The expected energy loss resolution is about 7%.

The time-of-flight counters consist of two layers of plastic scintillator with 72 pieces in azimuth per layer. The time resolution should be better than 65 ps. This will provide good kaon/pion differentiation up to 1.1 GeV. The barrel electromagnetic calorimeter is made of crystals with an energy resolution better than 2.5% at 1 GeV. The end-cap electromagnetic calorimeter is made of lead-scintillating fibres with an energy resolution of about 6% at 1 GeV.
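The link between the quoted 65 ps time resolution and kaon/pion differentiation up to 1.1 GeV can be checked with a short calculation. The sketch below compares the flight times of a kaon and a pion of equal momentum and expresses the difference in units of the time resolution. The 0.7 m flight path is an assumption (chosen to sit just outside the 660 mm drift chamber outer radius); the track is treated as a straight line, ignoring curvature in the magnetic field and any gain from combining the two scintillator layers. Function names are hypothetical.

```python
import math

# Particle masses in GeV/c^2 (rounded standard values).
KAON_MASS_GEV = 0.493677
PION_MASS_GEV = 0.139570
SPEED_OF_LIGHT_M_PER_NS = 0.299792458


def flight_time_ns(momentum_gev: float, mass_gev: float, path_m: float) -> float:
    """Time for a particle of given momentum and mass to travel path_m."""
    energy_gev = math.hypot(momentum_gev, mass_gev)  # E = sqrt(p^2 + m^2)
    beta = momentum_gev / energy_gev
    return path_m / (beta * SPEED_OF_LIGHT_M_PER_NS)


def kaon_pion_separation_sigma(
    momentum_gev: float, path_m: float, time_resolution_ps: float
) -> float:
    """Kaon-pion flight-time difference in units of the time resolution."""
    delta_ns = flight_time_ns(momentum_gev, KAON_MASS_GEV, path_m) - flight_time_ns(
        momentum_gev, PION_MASS_GEV, path_m
    )
    return delta_ns * 1000.0 / time_resolution_ps


if __name__ == "__main__":
    # path_m = 0.7 is an ASSUMED straight-line flight path, not a quoted figure.
    for p in (0.6, 0.8, 1.0, 1.1):
        n_sigma = kaon_pion_separation_sigma(p, path_m=0.7, time_resolution_ps=65.0)
        print(f"p = {p:.1f} GeV/c: separation = {n_sigma:.1f} sigma")
```

With these assumed inputs the separation at 1.1 GeV/c comes out at about three standard deviations and grows quickly at lower momenta, which is consistent with the stated reach.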

An international workshop on the BES III detector on 13-15 October at IHEP, Beijing, will discuss the design and possible collaboration. New design ideas are welcome, as are international participation and contributions to the new detector.

The feasibility study report on BEPC II has been submitted to the Chinese funding agency. R&D work on key technologies is in progress. The design report should be finished by next spring, and construction should begin soon afterwards. BEPC will continue to run until spring 2005, after which there will be a long shutdown (about 9-10 months) for installation. The tuning of the new machine should start by spring 2006, and physics running should begin by the end of 2006.