Particle physicists thrive on information. They first create information by performing experiments or elaborating theoretical conjectures. Then they convey it to their peers by writing papers that are disseminated in preprint form long before publication. Keeping track of this information has long been the task of libraries at the larger laboratories, such as CERN, DESY, Fermilab and SLAC, as well as being the focus of indispensable services including arXiv and those of the Particle Data Group.

It is common knowledge that the web was born at CERN, and every particle physicist knows about SPIRES, the place where they can find papers, citations and information about colleagues. However, not everyone knows that the first US web server and the first database on the web came about at SLAC with just one aim: to bring scientific information to the fingertips of particle physicists through the SPIRES platform. SPIRES was hailed as the first “killer” application of the then nascent web.

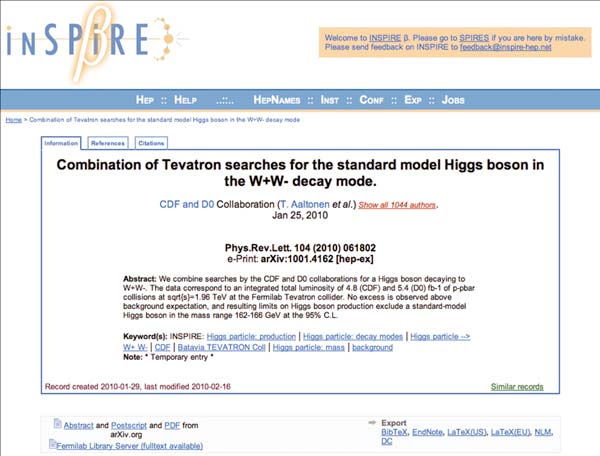

No matter how venerable, the information tools currently serving particle physicists no longer live up to expectations, and information-management tools used elsewhere in the world have been catching up with those of the high-energy physics community. The soon-to-be-released INSPIRE service will bring state-of-the-art information retrieval to the fingertips of researchers in high-energy physics once more, not only enabling more efficient searching but also paving the way for modern technologies and techniques to augment the tried-and-tested tools of the trade.

Meeting demand

The INSPIRE project involves information specialists from CERN, DESY, Fermilab and SLAC working in close collaboration with arXiv, the Particle Data Group and publishers within the field of particle physics. “We separate the work such that we don’t duplicate things. Having one common corpus that everyone is working on allows us to improve remarkably the quality of the end product,” explains Tim Smith, head of the User and Document Services Group in the IT Department at CERN, which is providing the Invenio technology that lies at the core of INSPIRE.

In 2007, many providers of information in the field came together for a summit at SLAC to see how physics-information resources could be enhanced. The INSPIRE project emerged from that meeting and the vision behind it was built from a survey launched by the four labs to evaluate the real needs of the community (Gentil-Beccot et al. 2008). A large number of physicists replied enthusiastically, even writing reams of details in the boxes that were made available to input free text. The bulk of the respondents noted that the SPIRES and arXiv services were together the dominant resources in the field. However, they pointed out that SPIRES in particular was “too slow” or “too arcane” to meet their current needs.

INSPIRE responds to this directive from the community by combining the most successful aspects of SPIRES (a joint project of DESY, Fermilab and SLAC) with the modern technology of Invenio (the CERN open-source digital-library software). “SPIRES’ underlying software was overdue for replacement, and adopting Invenio has given INSPIRE the opportunity to reproduce SPIRES’ functionality using current technology,” says Travis Brooks, manager of the SPIRES databases at SLAC. The name of the service, with the “IN” from Invenio augmenting SPIRES’ familiar name, underscores this beneficial partnership. “It reflects the fact that this is an evolution from SPIRES because the SPIRES service is very much appreciated by a large community of physicists. It is a sort of brand in the field,” says Jens Vigen, head of the Scientific Information Group at CERN.

However, INSPIRE takes its own inspiration from more than just SPIRES and Invenio. When a user searches for a paper, INSPIRE will not only fully understand the search syntax of SPIRES, but will also support free-text searches like those in Google. “From the replies we received to the survey, we could observe that young people prefer to just throw a text string in a field and push the search button, as happens in Google,” notes Brooks.

This service will facilitate the work of the large community of particle physicists. “Even more exciting is that after releasing the initial INSPIRE service, we will be releasing many new features built on top of the modern platform,” says Zaven Akopov of the DESY library. INSPIRE will enable authors and readers to help catalogue and sort material so that everyone will find the most relevant material quickly and easily. INSPIRE will also be able to store files associated with documents, including the full text of older or “orphaned” preprints. Stephen Parke, senior scientist at the Fermilab Theory Department, looks forward to these enhancements: “INSPIRE will be a fabulous service to the high-energy-physics community. Not only will you be able to do faster, more flexible searching but there is a real need to archive all conference slides and the full text of PhD theses; INSPIRE is just what the community needs at this time.”

Pilot users see INSPIRE already rising to meet these expectations, as remarked on by Tony Thomas, director of the Australian Research Council Special Research Centre for the Structure of Matter: “I tried the alpha version of INSPIRE and was amazed by how rapidly it responded to even quite long and complex requests.”

The Invenio software that underlies INSPIRE is a collaborative tool developed at CERN for managing large digital libraries. It is already inspiring many other institutes around the world. In particular, the Astrophysics Data System (ADS) – the digital library run by the Harvard-Smithsonian Center for Astrophysics for NASA – recently chose Invenio as the new technology to manage its collection. “We can imagine all sorts of possible synergies here,” Brooks anticipates. “ADS is a resource very much like SPIRES, but focusing on the astronomy/astrophysics and increasingly astroparticle community, and since our two fields have begun to do a lot of interdisciplinary work the tighter collaboration between these resources will benefit both user communities.”

Invenio is also being used by many other institutes around the world and many more are considering it. “In the true spirit of CERN, Invenio is an open-source product and thus it is made available under the GNU General Public Licence,” explains Smith. “At CERN, Invenio currently manages about a million records. There aren’t that many products that can actually handle so many records,” he adds.

Invenio has at the same time broadened its scope to include all sorts of digital records, including photos, videos and recordings of presentations. It makes use of a versatile interface that makes it possible, for example, to have the site available in 20 languages. Invenio’s expandability is being exploited to the full for the INSPIRE project, where a rich set of back-office tools is being developed for cataloguers. “These tools will greatly ease the manual tasks, thereby allowing us to get papers faster and more accurately into INSPIRE,” explains Heath O’Connell from the Fermilab library. “This will increase the search accuracy for users. Furthermore, with the advanced Web 2.0 features of INSPIRE, users will have a simpler, more powerful way to submit additions, corrections and updates, which will be processed almost in real time.”

Researchers in high-energy physics were once the beneficiaries of world-leading information management. Now INSPIRE, anchored by the Invenio software, aims once again to give the community a world-class solution to its information needs. The future is rich with possibilities, from interactive PDF documents to exciting new opportunities for mining this wealth of bibliographic data, enabling sophisticated analyses of citations and other information. The conclusion is easy: if you are a physicist, just let yourself be INSPIREd!

• The INSPIRE service is available at http://inspirebeta.net/.