Michel Borghini, who passed away unexpectedly on 15 December 2012, was at CERN for more than 30 years. Born in 1934, Michel was a citizen of Monaco. He graduated from Ecole Polytechnique in 1955 and went on to obtain a degree in electrical engineering from Ecole Supérieure d’Electricité, Paris, in 1957. He then joined the group of Anatole Abragam at what was then the Centre d’Etudes Nucléaires, Saclay, where he took part in the study of dynamic nuclear polarization that led to the development of the first polarized proton targets for use in high-energy physics experiments. It was here that he gained the experience that he was to develop at CERN, to the great benefit of experimental particle physics.

The basic aim with a polarized target is to line up the spins of the protons, say, in a given direction. In principle, this can be done by aligning the spins with a magnetic field, but the proton’s magnetic moment is so small that it takes little energy to knock the spins out of alignment; thermal agitation is sufficient. Even at low temperatures and reasonably high magnetic fields, the polarization achieved by this “brute force” method is small: only 0.1% at a temperature of 1 K in an applied magnetic field of 1 T. To overcome this limitation, dynamic polarization exploits the much larger magnetic moment of the electron by harnessing the coupling between free proton spins in a material and nearby free electron spins. At temperatures of about 1 K, the electron spins are almost fully polarized in an external magnetic field of 2.5 T, and the application of microwaves at around 70 GHz induces resonant transitions between the spin levels of coupled electron–proton pairs. The effect is to increase the natural, small proton polarization by more than two orders of magnitude. The polarization can be reversed by a slight change of the microwave frequency, with no need to reverse the external magnetic field.
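The orders of magnitude quoted above follow from the Boltzmann distribution. For a spin-1/2 particle with magnetic moment μ in a field B at temperature T, the equilibrium polarization is P = tanh(μB/kT), and the electron spin-resonance frequency is ν = 2μ_e B/h. The Python sketch below evaluates these expressions with CODATA constants; it is only an illustrative check of the “brute force” numbers, not a model of the dynamic-polarization process itself, and the function names are hypothetical.

```python
import math

K_B = 1.380649e-23        # Boltzmann constant, J/K
H = 6.62607015e-34        # Planck constant, J s
MU_P = 1.41060679736e-26  # proton magnetic moment, J/T
MU_E = 9.2847647043e-24   # magnitude of the electron magnetic moment, J/T


def spin_half_polarization(mu, b_field, temperature):
    """Thermal-equilibrium polarization of spin-1/2 moments mu (J/T)
    in a field b_field (T) at a temperature (K): P = tanh(mu*B/kT)."""
    return math.tanh(mu * b_field / (K_B * temperature))


def electron_resonance_ghz(b_field):
    """Electron spin-flip (ESR) frequency in GHz: nu = 2*mu_e*B/h."""
    return 2 * MU_E * b_field / H / 1e9


p_proton = spin_half_polarization(MU_P, 1.0, 1.0)
p_elec_1T = spin_half_polarization(MU_E, 1.0, 1.0)
p_elec_2p5T = spin_half_polarization(MU_E, 2.5, 1.0)
print(f"proton, 1 K, 1 T:     {100 * p_proton:.2f} %")
print(f"electron, 1 K, 1 T:   {100 * p_elec_1T:.0f} %")
print(f"electron, 1 K, 2.5 T: {100 * p_elec_2p5T:.0f} %")
print(f"ESR frequency, 2.5 T: {electron_resonance_ghz(2.5):.0f} GHz")
```

This gives about 0.10% for protons at 1 K and 1 T, about 59% for electrons at 1 K and 1 T, and about 93% at 2.5 T. The electron resonance lies near 28 GHz per tesla, so microwaves of around 70 GHz correspond to a field of roughly 2.5 T.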

First experiments

In 1962, Abragam’s group, including Michel, reported on what was the first experiment to measure the scattering of polarized protons – in this case a 20 MeV beam derived from the cyclotron at Saclay – off a polarized proton target (Abragam et al. 1962). The target was a single crystal of lanthanum magnesium nitrate (La₂Mg₃(NO₃)₁₂·24H₂O, or LMN), with 0.2% of the La³⁺ ions replaced by Ce³⁺, yielding a proton polarization of 20%.

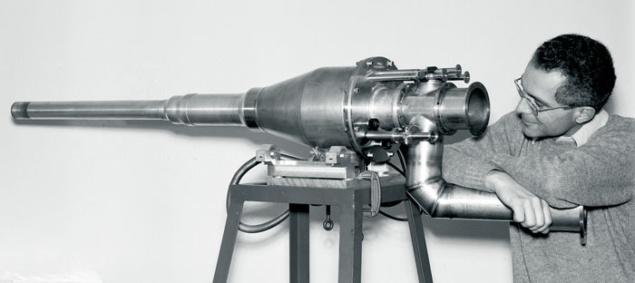

Michel moved to CERN three years later, in 1965, shortly after he and others from Saclay and CERN had tested a polarized target in an experiment on proton–proton scattering at 600 MeV at the Synchrocyclotron (SC). Developed by the Saclay group for the higher-energy beams of the Proton Synchrotron (PS), the target consisted of a crystal of LMN 4.5 cm long, with transverse dimensions of 1.2 cm × 1.2 cm, doped with 1% neodymium. It was cooled to around 1 K in a ⁴He cryostat built in Saclay by Pierre Roubeau, in the field of a 1.8 T magnet designed by CERN’s Guido Petrucci and built in the SC workshop. This target, with an average polarization of around 70%, was used in several experiments at the PS between 1965 and 1968, in both pion and proton beams with momenta of several GeV/c. These experiments measured the polarization parameter for π± elastic scattering and for the charge-exchange reaction π⁻p → π⁰n at small values of t, the square of the four-momentum transfer, typically |t| < 1 GeV².

In LMN crystals, the free, polarized protons make up only around 1/16 of all the target protons. As a consequence, the unpolarized protons bound in the La, Mg, N and O nuclei formed a serious background in these early experiments. This background was reduced by imposing on the final-state particles the strict kinematic constraints expected for collisions with protons at rest; the residual background was then subtracted using special data taken with a “dummy” target containing no free protons.

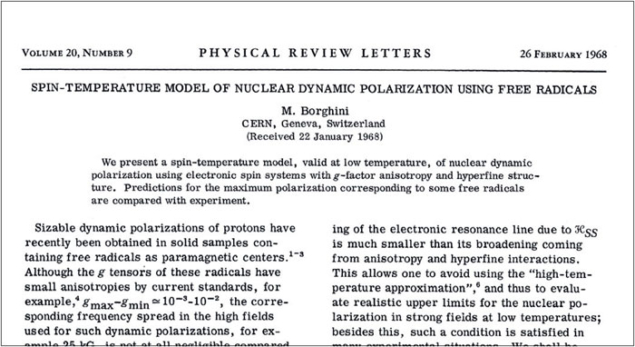

Michel’s group at CERN thus began investigating the possibility of developing polarized targets with a higher content of free protons. In this context, in 1968 Michel published two important papers in which he proposed a new phenomenological model of dynamic nuclear polarization, the “spin-temperature model” (Borghini 1968a and 1968b). The model suggested that sizable proton polarizations could be reached in frozen organic liquids doped with paramagnetic radicals. Despite some initial scepticism, in 1969 Michel’s team succeeded in measuring a polarization of around 40% in a 5 cm³ sample consisting of tiny beads made from a frozen mixture of 95% butanol (C₄H₉OH) and 5% water saturated with the free radical porphyrexide. The beads were cooled to 1 K in an external magnetic field of 2.5 T, and the fraction of free, polarized protons in the sample was around 1/4, some four times higher than in LMN (Mango, Runólfsson and Borghini 1969).

The group at CERN went on to study a large number of organic materials doped with paramagnetic free radicals, searching for the optimum combination for polarized targets. In this work, which included the development of ³He–⁴He dilution cryostats capable of reaching temperatures below 0.1 K, Michel guided two PhD students: Wim de Boer of the University of Delft (now professor at the Karlsruhe Institute of Technology) and Tapio Niinikoski of the University of Helsinki, who went on to join CERN in 1974. They finally obtained polarizations of almost 100% in samples of propanediol (C₃H₈O₂) doped with chromium(V) complexes and cooled to 0.1 K in a field of 2.5 T, with 19% free, polarized protons.
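The free-proton fractions quoted for LMN (about 1/16), butanol (about 1/4) and propanediol (19%) can be checked by counting protons per formula unit: the hydrogen nuclei are the free, polarizable protons, while every other proton is bound in a heavier nucleus. The minimal Python sketch below assumes pure compounds; the 5% water in the butanol mixture, the porphyrexide, the chromium complexes and the rare-earth dopants in LMN are all neglected, and the helper name is hypothetical.

```python
# Atomic numbers (protons per nucleus) of the elements involved.
Z = {"H": 1, "C": 6, "N": 7, "O": 8, "Mg": 12, "La": 57}


def free_proton_fraction(composition):
    """Fraction of all protons in a formula unit that are hydrogen nuclei.

    composition maps element symbol -> number of atoms per formula unit.
    Hydrogen nuclei are the free (polarizable) protons; all other protons
    are bound in heavier nuclei and stay unpolarized.
    """
    total = sum(Z[el] * n for el, n in composition.items())
    free = composition.get("H", 0)
    return free / total


materials = {
    # La2Mg3(NO3)12·24H2O: 36 + 24 = 60 O and 48 H; dopants ignored
    "LMN": {"La": 2, "Mg": 3, "N": 12, "O": 60, "H": 48},
    # butanol, C4H9OH; the 5% water and the radical are ignored
    "butanol": {"C": 4, "H": 10, "O": 1},
    # propanediol, C3H8O2; the chromium(V) dopant is ignored
    "propanediol": {"C": 3, "H": 8, "O": 2},
}

for name, comp in materials.items():
    f = free_proton_fraction(comp)
    print(f"{name:12s} {f:.3f} (about 1/{1 / f:.0f})")
```

LMN gives 48 free protons out of 762 (0.063), butanol 10 out of 42 (0.238) and propanediol 8 out of 42 (0.190). Including the 5% water would change the butanol figure only slightly, since water itself has 2 free protons out of 10.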

In this work, the concept of spin temperature that Michel had proposed was verified by polarizing several nuclei simultaneously in a special sample containing ¹³C and deuterons. The nuclei had different polarizations, but their values corresponded to a single spin temperature in the Boltzmann formula giving the populations of the various spin states.
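In the spin-temperature picture, the Zeeman levels m = −I, …, I of every nuclear species are populated according to a Boltzmann factor exp(m·hν/kT_s) with one common spin temperature T_s, so species with different Larmor frequencies ν and spins I end up with different polarizations. The Python sketch below infers T_s from an assumed proton polarization and predicts the deuteron (spin 1) and ¹³C (spin 1/2) polarizations it implies. The 90% proton polarization and the 2.5 T field are hypothetical inputs, not values from the measurement described here; the Larmor frequencies are rounded standard values, and T_s is taken as positive (a negative spin temperature would reverse the sign of every polarization).

```python
import math

K_B = 1.380649e-23   # Boltzmann constant, J/K
H = 6.62607015e-34   # Planck constant, J s

# (Larmor frequency in MHz per tesla, nuclear spin I)
NUCLEI = {
    "1H": (42.577, 0.5),
    "2H": (6.536, 1.0),
    "13C": (10.708, 0.5),
}


def polarization(nu_hz, spin, spin_temp):
    """Vector polarization <m>/I when the levels m = I, ..., -I (spacing h*nu)
    follow a Boltzmann distribution at spin temperature spin_temp (K)."""
    x = H * nu_hz / (K_B * spin_temp)
    ms = [spin - k for k in range(int(round(2 * spin)) + 1)]
    weights = [math.exp(m * x) for m in ms]
    mean_m = sum(m * w for m, w in zip(ms, weights)) / sum(weights)
    return mean_m / spin


def spin_temperature_from(p, nu_hz):
    """Invert P = tanh(h*nu / 2kT) for a spin-1/2 species with 0 < P < 1."""
    return H * nu_hz / (2 * K_B * math.atanh(p))


B_FIELD = 2.5    # T (hypothetical choice for this illustration)
P_PROTON = 0.90  # hypothetical proton polarization

nu_p = NUCLEI["1H"][0] * 1e6 * B_FIELD
t_spin = spin_temperature_from(P_PROTON, nu_p)
print(f"spin temperature: {1e3 * t_spin:.2f} mK")
for name, (mhz_per_tesla, spin) in NUCLEI.items():
    p = polarization(mhz_per_tesla * 1e6 * B_FIELD, spin, t_spin)
    print(f"{name:4s} P = {100 * p:.1f} %")
```

With these inputs the common spin temperature is a few millikelvin, and the smaller Larmor frequencies of the deuteron and ¹³C translate into much lower polarizations than that of the proton, which is the pattern a single spin temperature predicts.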

These targets were used in a number of experiments at CERN, at both the PS and the Super Proton Synchrotron (SPS). These experiments measured polarization parameters in the elastic scattering of pions, kaons and protons on protons in the forward diffraction region and at backward scattering angles; in the charge-exchange reactions K⁻p → K̄⁰n and p̄p → n̄n; in the reaction π⁻p → K⁰Λ⁰; and in proton–deuteron scattering. In all of these experiments, Jean-Michel Rieubland of CERN provided invaluable help in ensuring the smooth operation of the targets.

In the early 1970s, Michel also initiated the development of “frozen spin” targets. In these targets, the proton spins were first dynamically polarized in a high, uniform magnetic field and then cooled to a temperature low enough that the proton spin-relaxation rate remained slow even in lower magnetic fields. The targets could then be moved to the detector, giving more freedom in the choice of magnetic spectrometers and of the orientation of the polarization vector. The first frozen spin target was successfully operated at CERN in 1974.

In 1969, Michel took leave from CERN to join the Berkeley group led by Owen Chamberlain working at SLAC, where he took part in a test of T-invariance in inelastic e± scattering from polarized protons in collaboration with the SLAC group led by Richard Taylor. The target, built at Berkeley, was made of butanol, and the SLAC 20 GeV spectrometer served as the electron (and positron) analyser. The experiment measured the up–down asymmetry for transverse target spin for both electrons and positrons. No violation of time-reversal invariance was seen at the level of a few per cent.

Michel took leave to work at SLAC again in 1977, this time on a search for parity violation in deep-inelastic scattering of polarized electrons off an unpolarized deuterium target. Here, he worked on the polarized electron source and its associated laser, as well as on the electron spectrometer. The small parity-violation effects expected from the interference of the photon and Z exchanges were, indeed, observed and published in 1978. Michel then moved to the University of Michigan at Ann Arbor, where he joined the group led by Alan Krisch and took part in an experiment to measure proton–proton elastic scattering using both a polarized target and a 6 GeV polarized beam from the 12 GeV Zero Gradient Synchrotron at Argonne National Laboratory.

Michel left CERN’s polarized target group in 1978 and was succeeded by Niinikoski. Writing in 1985 on major contributions to spin physics, Chamberlain listed the people that he felt to be “the heroes – the people who have given [this] work a special push” (Chamberlain 1985). Michel is the only one whom he cites twice: with Abragam and colleagues, for the first polarized target and the first experiment to use such a target; and with Niinikoski, for introducing the frozen spin target and demonstrating the advantages of powerful (dilution) refrigerators. Today, polarized targets with volumes of several litres and large ³He–⁴He dilution cryostats are still in operation, for example in the NA58 (COMPASS) experiment at the SPS, where the spin structure of the proton has been studied using deep-inelastic scattering of high-energy muons. Dynamic nuclear polarization has also found applications in medical magnetic-resonance imaging, and Michel’s spin-temperature model is still widely used.

In the 1980s, Michel took part in the UA2 experiment at CERN’s SPS proton–antiproton collider, where he contributed to the calibration of the 1444 analogue-to-digital converters (ADCs) that were used to measure the energy deposited in the central calorimeter. He wrote all of the software to drive the large number of precision pulse generators that monitored the stability of the ADCs during data-taking.

From 1983 to 1996, he was a member of the Executive Committee of the CERN Staff Association, serving as its vice-president until 1990 and then as its president until June 1996. After retiring from CERN in January 1999, he returned to Monaco, where in 2003 he was appointed Permanent Representative of Monaco to the United Nations (New York), a post that he held until 2005.

Michel was an outstanding physicist, equally at ease with theory and in the laboratory. He had broad professional competences, a sharp, analytical mind, imagination and organizational skills. He is well remembered by his collaborators for his wisdom and advice, and also for his quiet demeanour and his keen, but often subtle, sense of humour. His culture and interests extended well beyond science. He was also a talented tennis player. He will be sorely missed by those who had the privilege of working with him, or of being among his friends. Much sympathy goes to his two daughters, Anne and Isabelle, and to their families.