There was a keen sense of anticipation and excitement throughout the ATLAS collaboration as 2012 dawned. The LHC had performed superbly over the previous two years, delivering 5 fb⁻¹ of proton–proton collision data at a centre-of-mass energy of 7 TeV in 2011 and allowing ATLAS to embark on a thorough exploration of a new energy regime. This work culminated in the first hints of a possible Higgs-like particle with a mass of about 126 GeV, reported by both the ATLAS and CMS collaborations at the CERN Council meeting in December 2011. With the promise of a much larger data sample at the higher collision energy of 8 TeV in 2012, everyone looked forward to seeing what the new data might bring.

The period leading up to the first collisions in early April 2012 saw intensive activity on the ATLAS detector itself, with the installation of additional sets of chambers to improve the coverage of the muon spectrometer, as well as the regular winter maintenance and consolidation work – essential for making sure that the detector was ready for the long year of data-taking ahead. With high-luminosity running expected to bring up to 40 simultaneous proton–proton collisions (“pile-up”) per bunch crossing – some 2–3 times more than seen in 2011 – experts from the groups responsible for the trigger, offline reconstruction and physics objects worked intensively to ensure that the online and offline software and selections were ready to cope with the influx of data. Careful optimization ensured that the performance of the selections for electrons, τ leptons and missing transverse momentum, for example, was stable against high levels of pile-up, while staying within the limits of the computing resources and maintaining – or even exceeding – the efficiencies and purities obtained with the 2011 data.

Meanwhile, the physics-analysis teams worked to finalize their analyses of the 2011 data for presentation at the winter and spring conferences and subsequent publication, while preparing for analysis of the new data. Members of the Higgs group focused on the two channels with high mass resolution, H→γγ and H→ZZ(*)→4 leptons (figure 1), where the Higgs signal would appear as a narrow peak above a smoothly varying background. These channels had shown hints in the 2011 data and had the greatest potential to deliver early results in 2012. Using data samples from 2011 and a Monte Carlo simulation of the anticipated new data at 8 TeV, the analyses were re-optimized to maximize sensitivity in the mass region of 120–130 GeV, taking full advantage of the new object-reconstruction algorithms and selections.
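The narrow peak arises because the invariant mass of the decay products, computed from their measured energies and momenta, equals the mass of the parent particle however the parent is moving. The sketch below illustrates this with two hypothetical photon pairs; it assumes perfectly measured, massless photons and is not the ATLAS reconstruction. The function name `invariant_mass` and all numerical values are invented for illustration.

```python
import math
from typing import Iterable, Tuple

# Four-momentum (E, px, py, pz) in GeV, with c = 1
FourVector = Tuple[float, float, float, float]


def invariant_mass(particles: Iterable[FourVector]) -> float:
    """Invariant mass of a system of particles: m^2 = (sum E)^2 - |sum p|^2."""
    e = px = py = pz = 0.0
    for pe, ppx, ppy, ppz in particles:
        e += pe
        px += ppx
        py += ppy
        pz += ppz
    m2 = e * e - (px * px + py * py + pz * pz)
    # Guard against tiny negative values from floating-point rounding
    return math.sqrt(max(m2, 0.0))


# Hypothetical photon pair from a 125 GeV parent at rest: back-to-back photons
at_rest = [(62.5, 62.5, 0.0, 0.0), (62.5, -62.5, 0.0, 0.0)]

# Hypothetical photon pair from a moving 125 GeV parent (photons at 90 degrees)
moving = [(100.0, 0.0, 0.0, 100.0), (78.125, 78.125, 0.0, 0.0)]

print(invariant_mass(at_rest))
print(invariant_mass(moving))
```

Both pairs reconstruct to 125 GeV even though the second parent carries substantial momentum. In a real detector, finite energy and angular resolution smear this value, which is why the channels with the best mass resolution give the sharpest peaks.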

The race to Australia

Once data-taking began in early April, the first priority was to calibrate and verify the performance of the detector, trigger and reconstruction, comparing the results with the new 8 TeV Monte Carlo simulation. The modelling of pile-up was particularly important and was checked using a dedicated low-luminosity run of the LHC, where events were recorded with only a single interaction per bunch crossing. Having established the basic conditions for physics analysis, attention then turned to preparations for the International Conference on High-Energy Physics (ICHEP) taking place on 5–11 July in Melbourne, where the particle-physics community and the world’s media would be eagerly awaiting the latest results from the new data.


As ICHEP drew nearer, the LHC began to deliver the goods, with up to 1 fb⁻¹ of data per week. Each new run was recorded, calibrated and processed through the Tier-0 centre of the Worldwide LHC Computing Grid at CERN, before being thoroughly checked and validated by the ATLAS data-quality group and delivered to the physics-analysis teams on a regular weekly schedule. At the same time, the worldwide computing Grid resources available to ATLAS worked round the clock to prepare the corresponding Monte Carlo simulation samples at the new collision energy of 8 TeV. At first, the analysers in the Higgs group restricted their attention to control regions in data, aiming to prove to themselves and the rest of the collaboration that the new data were thoroughly understood. After a series of review meetings, with a few weeks remaining before ICHEP, the go-ahead was given to “un-blind” the data taken so far – a moment of great excitement and not a little anxiety.

At first only hints were visible, but as more data were added week by week and combined with the results from an improved analysis of the 2011 data, it rapidly became clear that there was a significant signal in both the γγ and 4-lepton channels. The last few weeks before ICHEP were particularly intense, with exhaustive cross-checks of the results and many discussions on exactly how to present and interpret what was being seen. With the full 5.8 fb⁻¹ sample from LHC data-taking up until 18 June included, ATLAS had signals with significances of 4.5σ in the γγ channel and 3.4σ in the 4-lepton channel, leading the collaboration to report the observation of a new particle, with a combined significance of 5.0σ, at the special seminar at CERN on 4 July and at the ICHEP conference.
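For readers less familiar with the σ convention: a significance Z is conventionally the number of standard deviations of a one-sided Gaussian tail that has the same probability as the observed background-only p-value. The minimal sketch below converts the significances quoted above into p-values using only the Python standard library; the function name `p_value` is a hypothetical helper introduced for illustration.

```python
import math


def p_value(z: float) -> float:
    """One-sided Gaussian tail probability corresponding to a significance of z sigma."""
    return 0.5 * math.erfc(z / math.sqrt(2.0))


# Significances quoted in the text for the July 2012 ATLAS result
for label, z in [("H->gamma gamma", 4.5), ("H->4 leptons", 3.4), ("combined", 5.0)]:
    print(f"{label}: {z} sigma -> p = {p_value(z):.1e}")
```

The combined 5.0σ corresponds to a probability of roughly three in ten million that a background fluctuation alone would look at least as signal-like. The combined figure itself comes from a joint likelihood fit across channels and cannot be obtained from the individual significances by a simple formula, so the sketch only converts between the two conventions.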

Similar signals were seen by CMS and both collaborations submitted papers reporting the discovery of this new Higgs-like resonance at the end of July. As well as the γγ and 4-lepton results reported at ICHEP, the paper by ATLAS also included the analysis of the H→WW(*)→lνlν channel, which revealed a broad excess with a significance of 2.8σ around 125 GeV. The combination of these three channels together with the 2011 data analysis from several other channels established the existence of this new particle at the 5.9σ level (figure 2), ushering in a new era in particle physics.

Searching for the unexpected

As well as following up on the hints of the Higgs seen in the 2011 data, the ATLAS collaboration has continued to conduct intensive searches across the full range of physics scenarios beyond the Standard Model, including supersymmetry (SUSY) and non-SUSY extensions of the Standard Model. More than 20 papers have been published or submitted on SUSY searches with the complete 2011 data set, with a similar number on other searches beyond the Standard Model. One particular highlight is the search for dark matter, whose existence is inferred from astronomical observations but which has never been seen in the laboratory. By searching for “unbalanced” events, in which a single photon or jet of particles recoils against a pair of undetected, “invisible” particles, ATLAS can set limits on the cross-sections for interactions between ordinary matter and the dark-matter candidates known as weakly interacting massive particles (WIMPs). Using the full 2011 data set, ATLAS set limits on WIMP–nucleon cross-sections for WIMP masses up to around 1 TeV; these limits complement those from direct-detection and gamma-ray observation experiments.
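The imbalance that signals invisible particles is quantified by the missing transverse momentum. Because the colliding partons carry negligible momentum transverse to the beam, the transverse momenta of everything produced should sum to zero, so the negative vector sum of the visible objects estimates what escaped undetected. A minimal sketch, assuming each visible object is given as a hypothetical (pT, φ) pair; the function name `missing_pt` and the example events are invented, and real ATLAS reconstruction includes further calibrated contributions that are omitted here.

```python
import math
from typing import Iterable, Tuple


def missing_pt(objects: Iterable[Tuple[float, float]]) -> Tuple[float, float]:
    """Return (magnitude in GeV, azimuth in radians) of the missing transverse
    momentum, given visible objects as (pT in GeV, azimuthal angle phi)."""
    mex = mey = 0.0
    for pt, phi in objects:
        # Missing momentum balances the vector sum of everything seen
        mex -= pt * math.cos(phi)
        mey -= pt * math.sin(phi)
    return math.hypot(mex, mey), math.atan2(mey, mex)


# Hypothetical balanced dijet event: no significant missing momentum
print(missing_pt([(100.0, 0.0), (100.0, math.pi)]))

# Hypothetical monojet-like event: a single hard jet with nothing visible to balance it
print(missing_pt([(300.0, 0.0)]))
```

In the second event the missing momentum has the same 300 GeV magnitude as the jet and points opposite to it, which is the kind of unbalanced topology the monojet and monophoton searches select.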

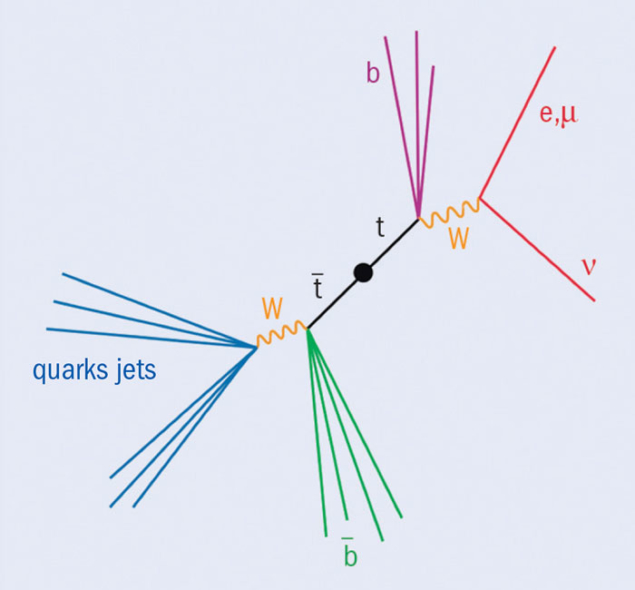

Another highlight is the search for new particles that decay into pairs of top (t) and antitop (t̄) quarks, giving rise to resonances in the tt̄ invariant-mass spectrum. The complete 2011 data set gives access to invariant masses well beyond 1 TeV, where the t and t̄ tend to decay in “boosted” topologies with two back-to-back sets of collimated decay products. By reconstructing each top decay as a single “fat” jet and exploiting recently developed techniques to search for distinct objects within the “substructure” of these jets, ATLAS was able to set limits on the production of tt̄ resonances such as Z′ bosons or Kaluza–Klein gluons in the tera-electron-volt range, even though high levels of pile-up added noise to the jet substructure. Such techniques will become even more important in extending these searches to higher masses with the full 2012 data sample.

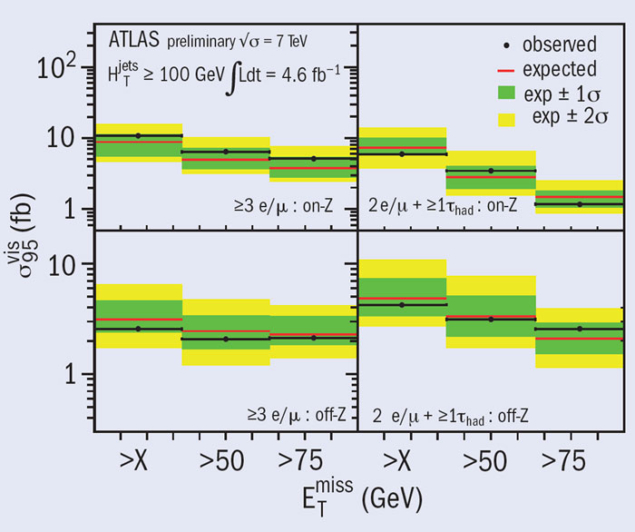

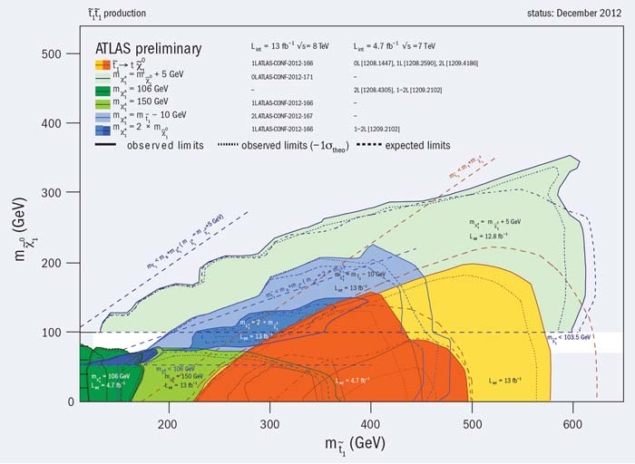

The search for SUSY continued apace in 2012, with new results from 8 TeV data presented at both the SUSY 2012 conference in August and the Hadron Collider Physics Symposium in November. By looking for events with several jets and large missing transverse energy, limits on the strong production of squarks and gluinos were pushed beyond 1.5 TeV for equal-mass squarks and gluinos in the framework of minimal supergravity grand unification (mSUGRA) and the constrained minimal supersymmetric extension of the Standard Model (CMSSM). The lack of evidence for “generic” SUSY signatures with masses close to the electroweak and top-quark mass scales, together with the discovery of a light Higgs-like object around 126 GeV, has led to much theoretical interest in scenarios where only the third generation of SUSY particles (top and bottom squarks, and the stau) is relatively light. ATLAS performed a series of dedicated searches for the direct production of bottom and top squarks. Top squarks in particular give rise to final states similar to those of top-pair production, so the searches become especially challenging when the top-squark mass is close to that of the top quark. Data from 2012 were used to fill much of the “gap” around the mass of the top quark (figure 3).

Precision measurements

The ATLAS search programme described above relies on a thorough understanding of the Standard Model processes that form the background to any search but are also interesting to study in their own right. Fully exploiting the large statistics of the 2011 and 2012 data samples requires an understanding of the efficiencies, energy scales and resolutions for physics objects such as electrons, muons, τ leptons, jets and b-jets to the level of a few per cent or better, which in turn requires a dedicated effort that continued throughout 2012. This effort paid off in a large number of precise measurements involving the production of combinations of W and Z bosons, photons and jets, including jets with heavy flavour. In many cases, these results challenge the current precision of QCD-based Monte Carlo calculations and provide important input for improving the description of physics at LHC energy scales. Studies of high-rate jet production and soft QCD processes have also continued, with measurements of event shapes, energy flow and the underlying event contributing to knowledge of the backgrounds that underlie all physics processes at the LHC. The measurements of WW, WZ, ZZ, Wγ and Zγ production have allowed stringent constraints to be placed on anomalous couplings of these bosons at high energies, in addition to being an essential ingredient in understanding the backgrounds to Higgs searches.

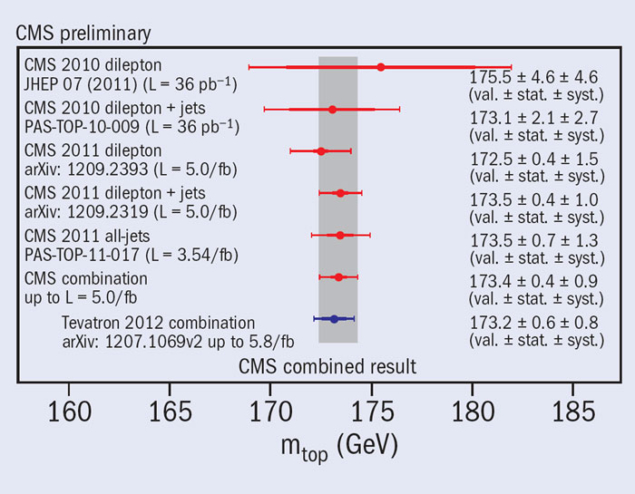

The large top-quark samples available in the data from 2011, and now 2012, have opened up a new era in the study of the heaviest known fundamental particle. The cross-sections for the production of both tt̄ pairs and single top quarks have been measured precisely at both 7 TeV and 8 TeV, and evidence has also been found for the associated production of a W boson and a top quark. Limits have been set on the associated production of tt̄ pairs together with W and Z bosons, and even Higgs bosons, and these studies will be extended with the full 2012 data set. The charge asymmetry in tt̄ production has also been measured with the full 7 TeV data set, although, unlike at the Tevatron at Fermilab, no hints of anomalies have been seen. The polarizations of top quarks and of the W bosons produced in their decays have been measured, and spin correlations between decaying t and t̄ quarks have been observed. Furthermore, ATLAS has begun to characterize the top-quark production processes in detail, looking at kinematic distributions and the production of associated jets, which are key ingredients in increasing the precision of top-quark measurements as well as in evaluating top-quark backgrounds in searches for physics beyond the Standard Model.

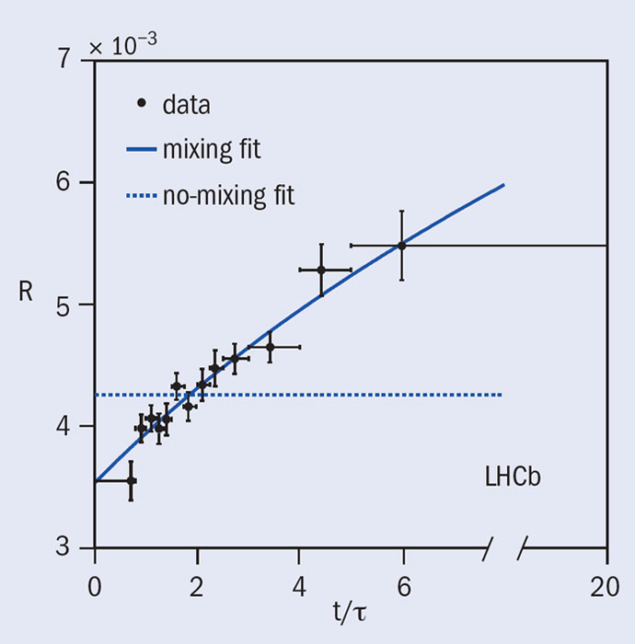

In addition, ATLAS has continued to exploit the large samples of B hadrons produced at the LHC, in particular those with dimuon final states, which can be recorded even at the highest LHC luminosities. Highlights include the detailed study of CP violation in the decay Bs→J/ψφ, which was found to be consistent with the Standard Model expectation, and the precise measurement of the Λb mass and lifetime.

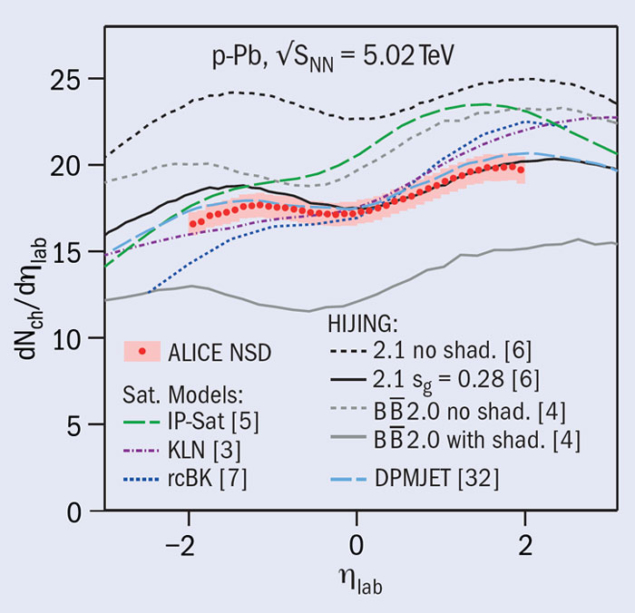

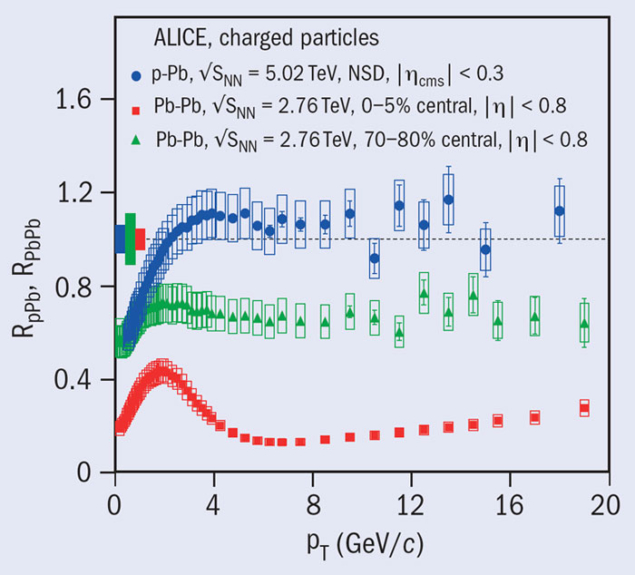

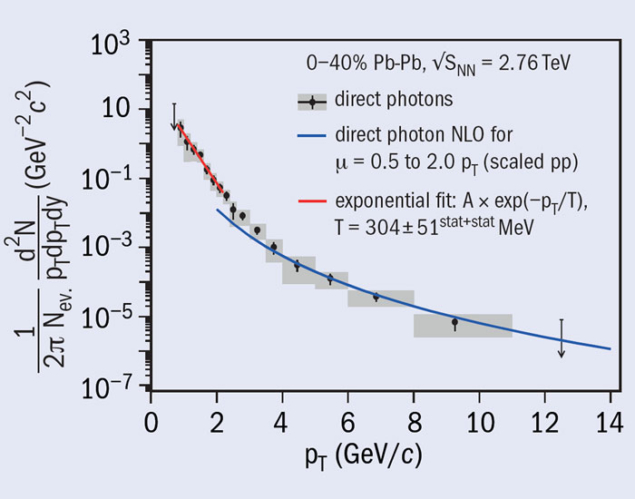

In late 2011, ATLAS recorded around 20 times more lead–lead collisions than in 2010, allowing the studies of the hot, dense medium produced in such collisions to be extended to photons and Z bosons, as well as jets. A new technique was developed to subtract the “underlying event” background in lead–lead collisions, enabling precise measurements of jet energies and the identification of electrons and photons in the electromagnetic calorimeter. Because photons and Z bosons do not feel the strong interaction, they emerge from the nuclear collision region “unscathed”, so the energy balance in photon–jet and Z–jet events can be used to study the energy lost by jets traversing the medium. In addition, ATLAS has pursued a broad heavy-ion physics programme, which includes the study of correlations and flow, charged-particle multiplicities and suppression, as well as heavy-flavour production. The collaboration looks forward eagerly to the proton–lead physics run scheduled for early 2013.

What is next?

At the time of writing, ATLAS is on track to record more than 20 fb⁻¹ of proton–proton collision data in 2012, and studies of these data by the various teams are in full swing across the whole range of search and measurement analyses. Building on the discovery announced in July, the next task for the Higgs analysis group is to learn more about the new particle, comparing its properties with those expected for the Standard Model Higgs boson and various alternatives. A first step was presented in September, when the July analyses were interpreted in terms of limits on the coupling strengths of the new particle to gauge bosons, leptons and quarks, albeit with limited precision at this stage. It is also important to establish whether the particle decays directly to fermions, by searching for the decays H→ττ and H→bb̄.

These analyses are extremely challenging because of the high backgrounds and poor invariant-mass resolution, but first results using 13 fb⁻¹ of 8 TeV data were presented at the Hadron Collider Physics Symposium in November. These results are not yet conclusive; the full 2012 data sample is needed to make any definite statements. At that point, it should also be possible to probe the spin and CP properties of the new particle and to improve the precision on the couplings, bringing the picture of this fascinating new object into sharper focus. At the same time, first results from searches beyond the Standard Model with the complete 2012 data set should be available, further increasing the sensitivity across the full spectrum of new physics models. The analysis of this data set will continue throughout the 2013–2014 shutdown, setting the stage for the start of the 13–14 TeV LHC physics programme in 2015 with an upgraded ATLAS detector.

• This article has only scratched the surface of the ATLAS physics programme in 2012. For more details of the more than 200 papers and 400 preliminary results, please see https://twiki.cern.ch/twiki/bin/view/AtlasPublic.