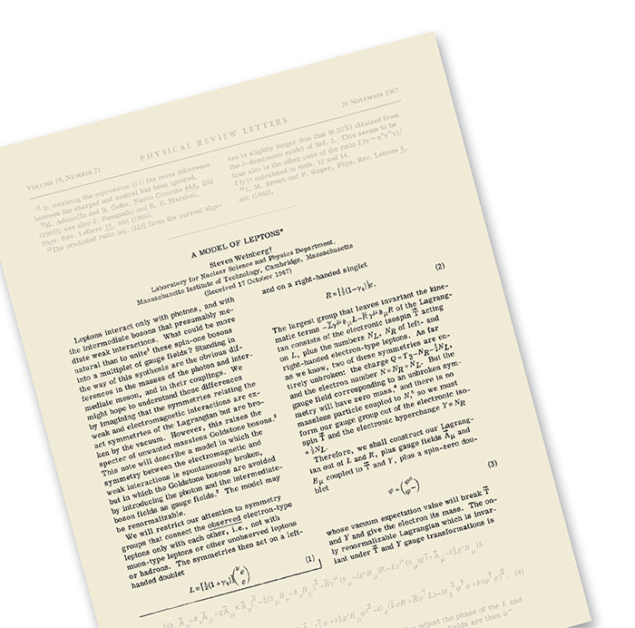

Weinberg’s paper “A Model of Leptons”, published in Physical Review Letters (PRL) on 20 November 1967, determined the direction of high-energy particle physics through the final decades of the 20th century. Just two and a half pages long, it is one of the most highly cited papers in the history of theoretical physics. Its contents are the core of the Standard Model of particle physics, now almost half a century old and still passing every experimental test.

Most particle physicists today have grown up with the Standard Model’s orderly account of the fundamental particles and interactions, but things were very different in the 1960s. Quantum electrodynamics (QED) had been well established as the description of the electromagnetic interaction, but there were no mature theories of the strong and weak nuclear forces. By the 1960s, experimental discoveries showed that the weak force exhibits some common features with QED, in particular that it might be mediated by a vector boson analogous to the photon. Theoretical arguments also suggested that QED’s underlying “U(1)” group structure could be generalised to the larger group SU(2), but there was a serious problem with such a scheme: the W boson suspected to mediate the weak force would have to be very massive empirically, whereas the mathematical symmetry of the theory required it to be massless like the photon.

The importance of symmetries in understanding the fundamental forces was already becoming clear at the time, in particular how nature might hide its symmetries. Could “hidden symmetry” lead to a massive W boson while preserving the mathematical consistency of the theory? It was arguably Weinberg’s developments, in 1967, that brought this concept to life.

Strong inspiration

Weinberg’s inspiration was an earlier idea of Nambu in which fermions – such as the proton or neutron – can behave like a left- or right-handed screw as they move. If mass is ignored, these two “chiral” states act independently and the theory leads to the existence of a particle with properties similar to those of the pion – specifically a pseudoscalar, which means that it has no spin and its wavefunction changes sign under mirror symmetry. Nambu’s original investigations, however, had not examined how the three versions of the pion, with positive, negative or zero charge, shared their common “pion-ness” when interacting with one another. This commonality, or symmetry, is mathematically expressed by the group SU(2), which had been known in nuclear physics since the 1930s and in mathematics for much longer.

It was this symmetry that Weinberg used as his point of departure in building a theory of the strong force, where nucleons interact with pions of all charges and the proton and neutron themselves form two “faces” of the underlying SU(2) structure. Empirical observations of the interactions between pions and nucleons showed that the underlying symmetry of SU(2) tended to act on the left- or right-handed chiral possibilities independently. The mathematical structure of the resulting equations to describe this behaviour, as Weinberg discovered, is called SU(2)×SU(2).

However, in nature this symmetry is not perfect because nucleons have mass. Had they been massless, they would have travelled at the speed of light, the left- and right-handed possibilities acting truly independently of one another and the symmetry left intact. That nucleons have a mass, so that the left and right states get mixed up when perceived by observers in different inertial frames, breaks the chiral symmetry. Nambu had investigated this effect as far back as 1959, but without the added richness of the SU(2)×SU(2) mathematical structure that Weinberg brought to the problem. Weinberg had been investigating this more sophisticated theory in around 1965, initially with considerable success. He derived theorems that explained the observed interactions of pions and nucleons at low energies, such as in nuclear physics. He was able to predict how pions behaved when they scattered from one another and, with a few well-defined assumptions, paved the way for a whole theory of hadronic physics at low energies.

Meanwhile, in 1964, Brout and Englert, Higgs, Kibble, Guralnik and Hagen had demonstrated that the vector bosons of a Yang–Mills theory could become massive without spoiling the fundamental gauge symmetry. (Such a theory, put forward a decade earlier, is like QED but with attributes such as electric charge able to be exchanged by the vector bosons themselves.) This “mass-generating mechanism” suggested that a complete Yang–Mills theory of the strong interaction might be possible. In addition to the well-known pion, examples of massive vector particles that feel the strong force had already been found, notably the rho-meson. Like the pion, this too occurs in three charge varieties: positive, negative and zero. Superficially these rho-mesons had the hallmarks of being the gauge bosons of the strong interactions, but they also have mass. Was the strong interaction the theatre for applying the mass-generating mechanism?

Despite at first seeming so promising, the idea failed to fit the data. For some phenomena the SU(2)×SU(2) symmetry is empirically broken, but for others, where spin does not matter, it works perfectly. When these patterns were incorporated into the maths, the rho-meson stubbornly remained massless, contrary to reality.

Epiphany on the road

In the middle of September 1967, while driving his red Camaro to work at MIT, Weinberg realised that he had been applying the right ideas to the wrong problem. Instead of the strong interactions, for which the SU(2)×SU(2) idea refused to work, the massless photon and the hypothetical massive W boson of the electromagnetic and weak interactions fitted perfectly with this picture. To call this possibility “hypothetical” hardly does justice to the time: the W boson was not discovered until 1983, and in 1967 was so disregarded as to receive at best a passing mention, if any, in textbooks.

Weinberg needed a concrete model to illustrate his general idea. The numerous strongly interacting hadrons that had been discovered in the 1950s and 1960s were, for him, a quagmire, so he restricted his attention to the electron and neutrino. Here too it is worth recalling the state of knowledge at the time. The constituent quark model with three flavours – up, down and strange – had been formulated in 1964, but was widely disregarded. The experiments at SLAC that would help establish these constituents were a year away from announcing their results, and Bjorken’s ideas of a quark model, articulated at conferences that summer, were not yet widely accepted either. Finally, with only three flavours of quark, Weinberg’s ideas would lead to empirically unwanted “strangeness-changing neutral currents”. All these problems would eventually be solved, but in 1967 Weinberg made a wise choice to focus on leptons and leave quarks well alone.

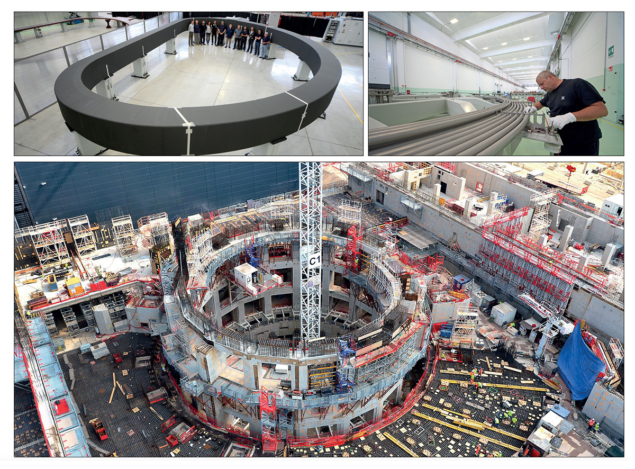

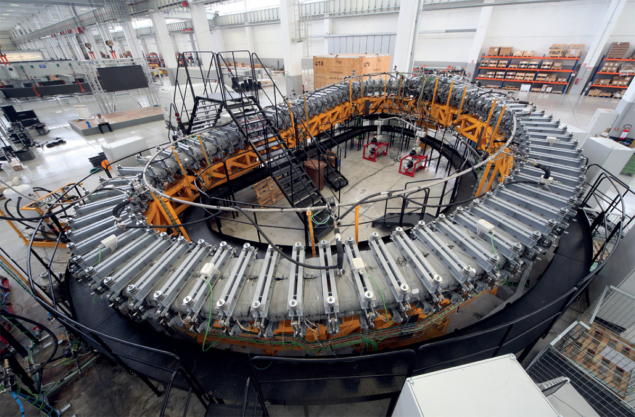

Image credits: CERN.

Following the discovery of parity violation in the 1950s, it was clear that the electron can spin like a left- or right-handed screw, whereas the massless neutrino is only left-handed. The left–right symmetry, which had been a feature of the strong interaction, was gone. Instead of two SU(2), the mathematics now only needed one, the second being replaced by the unitary group U(1). So Weinberg set up the equations of SU(2)×U(1) – the same structure that, unknown to him, had been proposed by Sheldon Glashow in 1961 and by Abdus Salam and John Ward in 1964 in attempts to marry the electromagnetic and weak interactions. His theory, like theirs, required two massive electrically charged bosons – the W+ and W– carriers of the weak force – and two neutral bosons: the massless photon and a massive Z0. If correct, it would show that the electromagnetic and weak forces are unified, taking physics a step closer to the goal of a single theory of all fundamental interactions.

“The history of attempts to unify weak and electromagnetic interactions is very long, and will not be reviewed here.” So began the first footnote in Steven Weinberg’s seminal November 1967 paper, which led to him being awarded the 1979 Nobel Prize in Physics with Salam and Glashow. Weinberg’s footnote mentioned Fermi’s primitive idea for unification in 1934, and also the model that Glashow proposed in 1961.

Clarity of thought

Weinberg started his paper by articulating the challenge of unifying the electromagnetic and weak forces as both an opportunity and a threat. He focused on the leptons – those fermions, such as the electron and neutrino, which do not feel the strong force. “Leptons interact only with photons, and with the [weak] bosons that presumably mediate weak interactions. What could be more natural than to unite these spin-one bosons [the photon and the weak bosons] into a multiplet,” he pondered. That was the opportunity. The threat was that “standing in the way of this synthesis are the obvious differences in the masses of the photon and [weak] boson.”

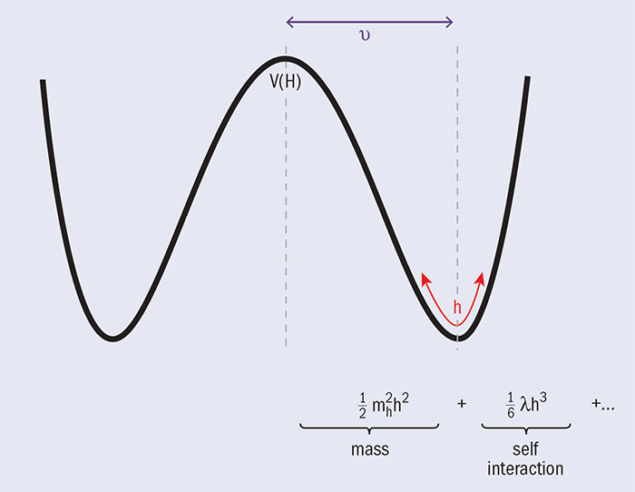

Weinberg then suggests a solution: perhaps “the symmetries relating the weak and electromagnetic interactions are exact [at a fundamental level] but are [hidden in practice]”. He then draws attention to the ideas of Higgs, Brout, Englert, Guralnik, Hagen and Kibble, and uses these to give masses to the W and Z in his model. In a further important insight, Weinberg shows how this symmetry-breaking mechanism leaves the photon massless.
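The way the mechanism gives mass to the W and Z while sparing the photon can be summarised in a few relations. What follows is a sketch in modern textbook notation rather than the paper's own symbols, and every name in it is an assumption introduced here: g and g′ are the SU(2) and U(1) gauge couplings, v is the vacuum value of the scalar field, W³ and B are the two neutral gauge fields, and θ_W is their mixing angle.

```latex
\begin{align}
  M_W &= \tfrac{1}{2}\, g\, v, &
  M_Z &= \tfrac{1}{2}\, v \sqrt{g^2 + g'^2}, &
  M_\gamma &= 0, \\
  Z_\mu &= \cos\theta_W\, W^3_\mu - \sin\theta_W\, B_\mu, &
  A_\mu &= \sin\theta_W\, W^3_\mu + \cos\theta_W\, B_\mu, &
  \tan\theta_W &= \frac{g'}{g}.
\end{align}
```

In this notation the photon field A is the one combination of W³ and B that does not pick up a mass from the vacuum value, and the relations together imply M_W = M_Z cos θ_W.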

His opening paragraph ended with the prescient observation that: “The model may be renormalisable.” The argument upon which this remark is based appears at the very end of the paper, although with somewhat less confidence than the promise hinted at the beginning. He begins the final paragraph with a question: “Is this model renormalisable?” The extent of his intuition is revealed in his argument: although the presence of a massive vector boson hitherto had been a scourge, the theory with which he had begun had no such mass and, as such, was “probably renormalisable”. So, he pondered: “The question is whether this renormalisability is lost [by the spontaneous breaking of the symmetry].” And the conclusion: “If this model is renormalisable, what happens when we extend it…to the hadrons?”

By speculating that his model may be renormalisable, Weinberg was hugely prescient, as ’t Hooft and Veltman would prove four years later. And perhaps it was a chance encounter at the Solvay Congress in Belgium two weeks before his paper was submitted that helped convince Weinberg that he was on the right track.

Solvay secrets

By the end of September 1967, Weinberg had his ideas in place as he set off to Belgium to attend the 14th Solvay Congress on Fundamental Problems in Elementary Particle Physics, held in Brussels from 2 to 7 October. He did not speak about his forthcoming paper, but did make some remarks after other talks, in particular following a presentation by Hans-Peter Dürr about a theorem of Jeffrey Goldstone and spontaneous symmetry breaking. During a general discussion session following Dürr’s talk, Weinberg mused: “This raises a question I can’t answer: are such models renormalisable?” He continued with a similar argument to that which later appeared in his paper, ending with: “I hope someone will be able to find out whether or not [this] is a renormalisable theory of weak and electromagnetic interactions.”

There was remarkably little reaction to Weinberg’s remarks, and he himself has recalled “a general lack of interest”. The only recorded statement came from François Englert, who insisted that the theory is renormalisable; then, remarkably, there was no further discussion. Englert and Robert Brout, then relatively junior scientists, had both attended the same Brussels meeting.

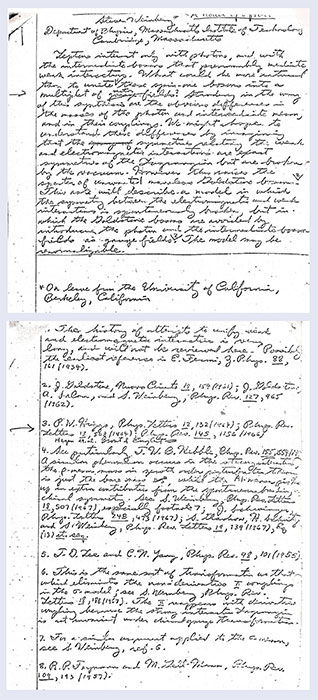

Image credit: Private collection.

At some point during the Solvay conference, Weinberg presented a hand-written draft of his paper to Dürr, and 40 years later I obtained a copy by a roundabout route. Weinberg himself had not seen it in all that time, and thought that all record of his Nobel-winning manuscript had been lost. The original manuscript is notable for there being no sign of second thoughts, or editing, which suggests that it was a provisional final draft of an idea that had been worked through in the preceding days. The only hint of modification after the first draft had been written is a memo squeezed in at the end of a reference to Higgs, to include references to Brout and Englert, and to Guralnik, Hagen and Kibble, for the idea of spontaneous symmetry breaking, on which the paper was based. Weinberg’s intuition about the renormalisability of the model is already present in this manuscript, and is identical to what appears in his PRL paper. There is no mention of Glashow’s SU(2)×U(1) model in the draft, but this is included in the version that was published in PRL the following month. This is the only substantial difference. This manuscript was submitted to the editors of PRL on Weinberg’s return to the US, and received by them on 17 October. It appeared in print on 20 November.

Lasting impact

Weinberg’s genius was to assemble the various pieces of a jigsaw and display the whole picture. The basic idea of mass generation was due to the assorted theorists mentioned above, in the summer of 1964. However, a crucial feature of Weinberg’s model was the trick of being able to give masses to the W and Z while leaving the photon massless. This extension of the mass-generating mechanism was due to Tom Kibble, in 1967, which Weinberg recognises and credits.

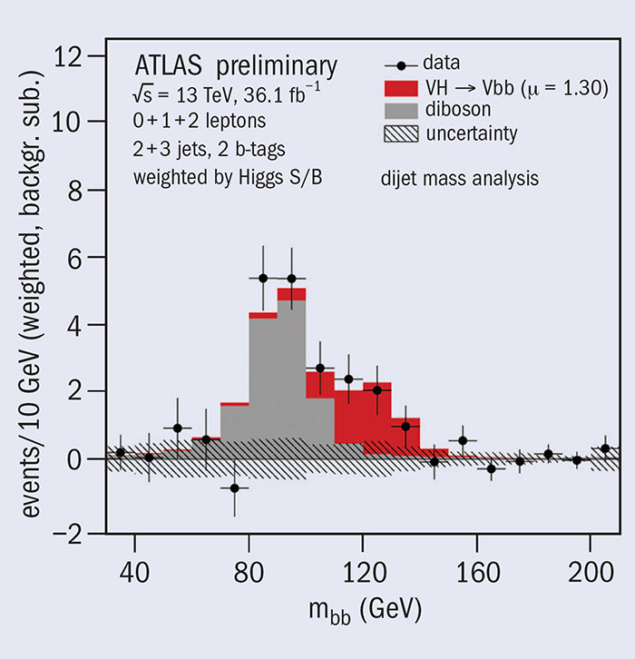

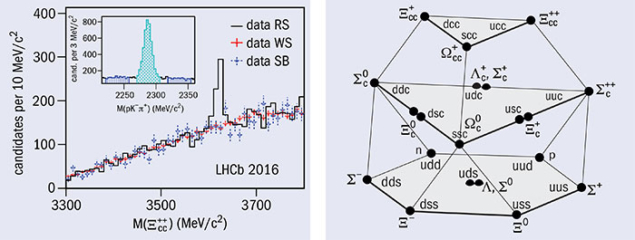

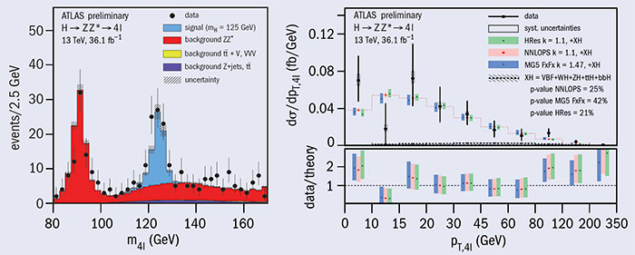

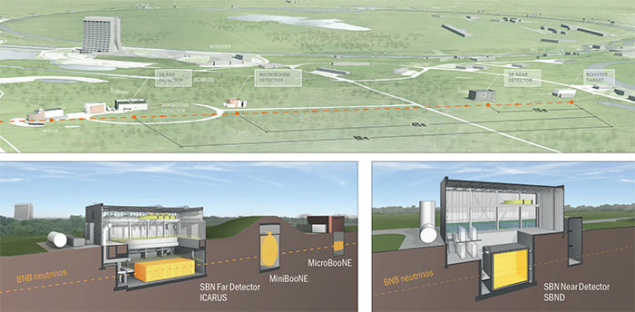

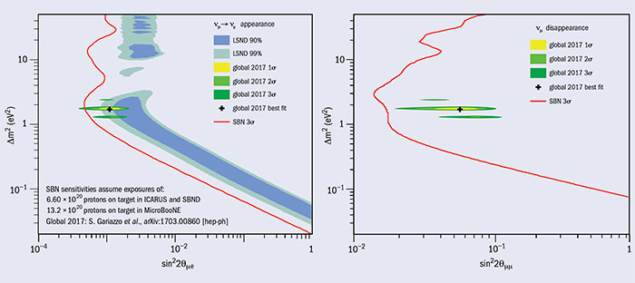

As was the case with his comments in Brussels the previous month, Weinberg’s paper appeared in November 1967 to a deafening silence. “Rarely has so great an accomplishment been so widely ignored,” wrote Sidney Coleman in Science in 1979. Today, Weinberg’s paper has been cited more than 10,000 times. Having been cited but twice in the four years from 1967 to 1971, suddenly it became so important that researchers have cited it three times every week throughout half a century. There is no parallel for this in the history of particle physics. The reason is that in 1971 an event took place that has defined the direction of the field ever since: Gerard ’t Hooft made his debut, and he and Martinus Veltman demonstrated the renormalisability of spontaneously broken Yang–Mills theories. A decade later the W and Z bosons were discovered by experiments at CERN’s Super Proton Synchrotron. A further 30 years were to pass before the discovery of the Higgs boson at the Large Hadron Collider completed the electroweak menu. And in the meantime, completing the Standard Model, quantum chromodynamics was established as the theory of the strong interactions, based on the group SU(3).

This episode in particle physics is not only one of the seminal breakthroughs in our understanding of the physical world, but touches on the profound link between mathematics and nature. On one hand it shows how it is easier to be Beethoven or Shakespeare than to be Steven Weinberg: change a few notes in a symphony or a phrase in a play, and you can still have a wonderful work of art; change a few symbols in Weinberg’s equations and the edifice falls apart – for if nature does not read your creation, however beautiful it might be, its use for science is diminished. Like all great theorists, Weinberg revealed a new aspect of reality by writing symbols on a sheet of paper and manipulating them according to the logic of mathematics. It took decades of technological progress to enable the discoveries of W and Higgs bosons and other entities that were already “known” to mathematics 50 years ago.

• This article draws on material from Frank Close’s history of the path to discovery of the Higgs boson: The Infinity Puzzle (Oxford University Press).

Symmetries, groups and massive insight led to electroweak unification

Weinberg’s 1967 achievement is rooted in group theory, the mathematical language describing the symmetries of a system, and built upon many earlier successes including that of quantum electrodynamics (QED). QED is perhaps the simplest example of a general class of “gauge theories”. Since the all-important electric charge in QED is a single number, it can be described mathematically in terms of the first unitary group, U(1), whose elements commute with one another (the group is “abelian”). In the 1950s Yang and Mills constructed “non-abelian” generalisations of QED in which the U(1) number was replaced by matrices, such as in the groups SU(2) or SU(3). The weak force exhibited tantalising hints that an SU(2) generalisation of QED might be involved, but there was a serious problem: a “W boson” – the analogue of QED’s photon – would have to be very massive empirically, whereas the mathematical symmetry of the theory required it to be massless – like the photon. The only way to give the W and Z particles mass yet leave the photon massless was if nature contained “hidden symmetries” that were somehow broken.
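The difference between a single U(1) number and the matrices of SU(2) can be made concrete with a small numerical check. The following is a minimal Python sketch, not anything taken from Weinberg's paper: the helper names (`u1`, `su2_x`, `su2_y`, `matmul`, `max_diff`) and the angles 0.3 and 1.1 are arbitrary choices made here for illustration. It compares the result of applying two transformations in either order.

```python
import cmath
import math


def u1(theta):
    """U(1) element: a single complex phase exp(i*theta)."""
    return cmath.exp(1j * theta)


def matmul(a, b):
    """Product of two 2x2 matrices stored as nested tuples."""
    return tuple(
        tuple(sum(a[i][k] * b[k][j] for k in range(2)) for j in range(2))
        for i in range(2)
    )


def su2_x(theta):
    """SU(2) element exp(-i*theta*sigma_x/2), with sigma_x a Pauli matrix."""
    c, s = math.cos(theta / 2), math.sin(theta / 2)
    return ((c, -1j * s), (-1j * s, c))


def su2_y(theta):
    """SU(2) element exp(-i*theta*sigma_y/2), with sigma_y a Pauli matrix."""
    c, s = math.cos(theta / 2), math.sin(theta / 2)
    return ((c, -s), (s, c))


def max_diff(a, b):
    """Largest absolute entry-wise difference between two 2x2 matrices."""
    return max(abs(a[i][j] - b[i][j]) for i in range(2) for j in range(2))


# U(1): two phases multiplied in either order give the same number.
p, q = u1(0.3), u1(1.1)
u1_gap = abs(p * q - q * p)

# SU(2): two matrices multiplied in either order generally differ.
a, b = su2_x(0.3), su2_y(1.1)
su2_gap = max_diff(matmul(a, b), matmul(b, a))

print("U(1) order dependence: ", u1_gap)
print("SU(2) order dependence:", su2_gap)
```

The U(1) gap vanishes while the SU(2) gap does not. This order dependence is what allows the vector bosons of a Yang–Mills theory to carry the charge themselves, unlike the photon.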

In 1961 Goldstone discovered a theorem suggesting, inter alia, that a theory of the weak force involving hidden symmetry is impossible. However, in 1963, condensed-matter theorist Philip Anderson pointed out that superconductivity manages to evade Goldstone’s theorem, and demonstrated this mathematically in a theory without relativity. The following year several theorists, including Peter Higgs, generalised Anderson’s insights to include relativity. Among the implications were that a theory involving fermions with no mass – with so-called chiral symmetry – could hide this property in empirically consistent ways when particles become massive; that there should be a massive boson without spin (the Higgs boson); and that the W boson could also gain mass while preserving the underlying mathematical symmetry of the theory. It was Weinberg’s 1967 paper that brought all of these pieces together, and today we know that nature follows this path, with the weak and electromagnetic interactions described by a single SU(2)×U(1) structure. At the time, however, the breakthrough was hardly noticed.