Half a century ago, Edwin McMillan and Glenn Seaborg of Berkeley were awarded the 1951 Nobel Prize for Chemistry for their elucidation in the early 1940s of the first ‘transuranic’ nuclei – synthetic radioactive nuclei heavier than uranium, which conventionally marks the end of the Periodic Table of nuclei. Since then, patient work has discovered a series of highly unstable superheavy nuclei, but a fundamental nuclear prediction said that an “island of stability” would eventually be reached.

The article “First postcard from the island of nuclear stability” reported the first results obtained at the Joint Institute for Nuclear Research (JINR), Dubna, on the synthesis of superheavy nuclei in fusion reactions induced by a calcium-48 beam. Targets of plutonium isotopes with mass numbers 242 and 244 yielded new nuclides, notably with 114 protons, whose subsequent alpha-decay chains were terminated by spontaneous fission.

The conclusion was that in these reactions the even-odd isotopes of element 114 had been produced following the emission from an intermediate compound nucleus of three neutrons, together with gamma rays. The formation cross-sections were very small (~1 pb). The radioactive properties of these nuclides (energies and half-lives) demonstrated the existence of a new region of nuclear stability, which had been predicted earlier as due to nuclear shell effects.

The experiments were performed in the Flerov Laboratory of Nuclear Reactions (JINR) in collaboration with the Lawrence Livermore National Laboratory (LLNL), GSI (Darmstadt), RIKEN (Saitama), the Comenius University (Bratislava) and the University of Messina (Italy) (Oganessian et al. 1999a and 1999b).

During work carried out in June to November 1999, a plutonium-244 target was bombarded by a calcium-48 beam (total beam dose about 10¹⁹ ions). Two more identical decay chains were observed (Oganessian et al. 2000a and 2000b). Each consisted of two sequential alpha decays that were terminated by spontaneous fission characterized by a large energy release in the detectors (figure 1a).

Following the trail

The new alpha-decay energies are slightly higher than in the previous case and the total decay time is shorter (about 0.5 min). The probability that the observed decays are due to random coincidences is less than 5 × 10⁻¹³.

Both events were observed at a beam energy that corresponded to a compound-nucleus excitation energy of 36-37 MeV. Here, the most probable de-excitation channel of the hot nucleus 292/114 corresponds to the emission of four neutrons together with gamma rays. Taking this into account, the new decay chains had to be attributed to the decay of the neighbouring even-even isotope of element 114 with mass 288.

To check this conclusion, further experiments continued with a curium-248 target (Oganessian et al. 2001). In Dimitrovgrad, Russia, 10 mg of the highly enriched isotope was produced. More such target material was provided by LLNL.

Changing the target from plutonium-244 to curium-248, while maintaining all other experimental conditions, makes the fusion reaction lead to the formation in the four-neutron evaporation channel of a new heavier nucleus, this time with 116 protons and mass 292. Its probable alpha decay leads to a daughter nucleus, the isotope 288/114 previously synthesized in the calcium-48/plutonium-244 reaction via the four-neutron evaporation channel. Thus, after the decay of the 292/116 nucleus, the whole decay chain of the daughter 288/114 nucleus should be observed (figure 1a).
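The nucleon bookkeeping behind this argument can be sketched in a few lines of Python. This is only an illustration of the arithmetic: the helper names (`Nuclide`, `fuse`, `evaporate_neutrons`, `alpha_chain`) are invented here, and the proton numbers of calcium, plutonium and curium are the standard values rather than figures quoted in the article.

```python
from typing import List, NamedTuple


class Nuclide(NamedTuple):
    """A nucleus identified by proton number z and mass number a."""
    z: int
    a: int

    def label(self) -> str:
        # Same "mass/protons" shorthand as the article, e.g. 292/116.
        return f"{self.a}/{self.z}"


# Standard proton numbers (assumed, not quoted in the text): Ca 20, Pu 94, Cm 96.
CA48 = Nuclide(z=20, a=48)
PU244 = Nuclide(z=94, a=244)
CM248 = Nuclide(z=96, a=248)


def fuse(projectile: Nuclide, target: Nuclide) -> Nuclide:
    """Complete fusion: proton numbers and mass numbers simply add."""
    return Nuclide(projectile.z + target.z, projectile.a + target.a)


def evaporate_neutrons(nucleus: Nuclide, count: int) -> Nuclide:
    """Neutron evaporation lowers the mass number but not the proton number."""
    return Nuclide(nucleus.z, nucleus.a - count)


def alpha_chain(start: Nuclide, decays: int) -> List[Nuclide]:
    """Return the start nucleus followed by `decays` alpha-decay daughters."""
    chain = [start]
    for _ in range(decays):
        parent = chain[-1]
        # An alpha particle carries away 2 protons and 4 nucleons.
        chain.append(Nuclide(parent.z - 2, parent.a - 4))
    return chain


element_116 = evaporate_neutrons(fuse(CA48, CM248), 4)
print(" -> ".join(n.label() for n in alpha_chain(element_116, 3)))
# prints: 292/116 -> 288/114 -> 284/112 -> 280/110
```

The same helpers applied to the plutonium-244 target give the 292/114 compound nucleus and, after four evaporated neutrons, 288/114, which is why the two experiments should end in the same decay chain.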

The experimental conditions

In the original experiment, the Dubna gas-filled recoil separator (DGFRS) separated the recoil atoms in flight from the beam particles and from the products of incomplete fusion reactions.

Separated heavy atoms were implanted into a 4 × 12 cm² detector located in the focal plane 4 m from the target. The front detector was surrounded by side detectors in such a way that the entire array resembled a “box” with an open front. This increased the detection efficiency of alpha particles from the decay of an implanted nucleus to 87% of the total solid angle.

For each atom implanted in the sensitive layer of the detector, the velocity and energy of the recoil were measured, as well as the location on the detector area. If the nucleus of the implanted atom emitted an alpha particle or fission fragments, the latter were detected in a strict correlation with the implant on the position sensitive surface of the detector.

Usually the experiments are performed using a continuous beam. However, for the synthesis of element 116, these conditions were changed. After implantation in the front detector of a heavy nucleus with the expected parameters and the subsequent emission of an alpha particle with energy above 10 MeV (the two signals are strictly correlated in position), the accelerator was switched off and the subsequent decays took place without the beam.

The measurements performed immediately after turning off the accelerator beam showed that the counting rate of alpha particles (energy above 9.0 MeV) and fission fragments from spontaneous fission in a 0.8 mm position window, defined by the position resolution of the detector, amounts to 0.45 per year and 0.2 per year respectively. Random coincidences imitating a three-step 1-3 min decay chain of the nucleus 288/114 are practically impossible, even for a single event.
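To get a feel for how small these background rates are, the following Python sketch converts them into the chance of a random signal falling inside a short time window. It is a rough estimate and not the authors' analysis. It assumes independent Poisson backgrounds, a 3-minute window (the upper end of the quoted chain duration) and a 365.25-day year, and all function and constant names are made up for this illustration.

```python
import math

# Assumption: a year of 365.25 days, expressed in minutes.
MINUTES_PER_YEAR = 365.25 * 24 * 60

# Beam-off background rates per position window, as quoted in the text.
ALPHA_RATE_PER_YEAR = 0.45
FISSION_RATE_PER_YEAR = 0.2

# Assumption: a 3-minute window, the upper end of the 1-3 min chain duration.
WINDOW_MIN = 3.0


def expected_counts(rate_per_year: float, window_min: float) -> float:
    """Mean number of background signals expected inside the time window."""
    return rate_per_year * window_min / MINUTES_PER_YEAR


def prob_at_least_one(mean_counts: float) -> float:
    """Poisson probability of one or more counts, 1 - exp(-mean)."""
    # expm1 keeps precision when the mean is tiny.
    return -math.expm1(-mean_counts)


p_alpha = prob_at_least_one(expected_counts(ALPHA_RATE_PER_YEAR, WINDOW_MIN))
p_fission = prob_at_least_one(expected_counts(FISSION_RATE_PER_YEAR, WINDOW_MIN))

# Chance that a random alpha AND a random fission both land in the window,
# assuming the two backgrounds are independent.
p_both = p_alpha * p_fission

print(f"random alpha:   {p_alpha:.2e}")
print(f"random fission: {p_fission:.2e}")
print(f"both together:  {p_both:.2e}")
```

Under these assumptions each single probability is of order 10⁻⁶, and requiring both in the same window multiplies them together. A full imitation of the chain would need a further random alpha particle as well, which makes it rarer still.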

Decay chains

Under these conditions, at a beam dose of 2.3 × 10¹⁹ ions, three decay chains of element 116 were registered (figure 1b). After the emission of the first alpha particle (energy 10.53 ± 0.06 MeV), the sequential decay was recorded with the beam turned off.

As can be seen from figure 1b, all decays are strictly correlated; the five signals in the front detectors – the recoil nucleus, three alpha particles and fission fragments – deviate by no more than 0.6 mm.

The alpha-particle energies and the half-lives of the nuclei in the three decay chains, both for the first alpha decays and for those detected after the accelerator was switched off, are consistent with one another within the limits of the detector energy resolution (60 keV) and the statistical fluctuations in the decay times of the events.

All of the detected decays following the first 10.53 MeV alpha particle agree well with the decay chains of 288/114 observed in the earlier reaction (see figure 1a). Thus it is reasonable to assign the observed decay to the nuclide 292/116, produced via the evaporation of four neutrons in the complete fusion reaction using curium-248.

The energy spectrum of the three events corresponding to the nucleus 292/116, and the five events corresponding to the alpha decay of the daughter nuclei 288/114 and 284/112, as well as the summed energy of the fragments from five events of spontaneous fission of the nucleus 280/110, obtained in the experiments with the plutonium and curium targets, are shown in figure 2.

Well defined decay energy

As expected for even-even nuclei, the experimentally observed alpha decay is characterized by a well defined decay energy, corresponding to the mass difference between the mother and daughter nuclei. The time distribution of the signals in the decay chains follows an exponential decay law. The half-lives for each nucleus are also shown in figure 2.
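For an exponential decay law, a half-life can be estimated from a handful of observed decay times. The Python sketch below uses the standard maximum-likelihood estimate (the mean lifetime equals the average decay time), which may differ from the procedure the authors actually used. The function name is hypothetical and the decay times are placeholders chosen for illustration, not measured values from the experiment.

```python
import math
from typing import Sequence


def half_life_from_decay_times(decay_times: Sequence[float]) -> float:
    """Estimate a half-life from individual decay times.

    For an exponential decay law the maximum-likelihood estimate of the
    mean lifetime is the arithmetic mean of the observed decay times,
    and the half-life is ln(2) times that mean lifetime.
    """
    if not decay_times:
        raise ValueError("at least one decay time is required")
    if any(t < 0 for t in decay_times):
        raise ValueError("decay times must be non-negative")
    mean_lifetime = sum(decay_times) / len(decay_times)
    return math.log(2) * mean_lifetime


# Placeholder decay times in seconds: illustrative only, NOT experimental data.
example_times_s = [1.0, 2.0, 3.0]
print(f"estimated half-life: {half_life_from_decay_times(example_times_s):.3f} s")
```

With only three to five events per nuclide, as in these experiments, such an estimate carries a large statistical uncertainty, so it fixes the order of magnitude rather than a precise value.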

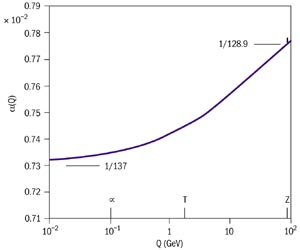

For allowed alpha transitions (even-even nuclei) the decay energy and probability (half-life) are connected by the well known Geiger-Nuttall relation. This is strictly fulfilled for all 60 currently known nuclei heavier than lead-208 for which data are available. Figure 3 shows the calculated and experimental data for nuclei with more than 100 protons and the data from the present experiment on the synthesis of nuclei with 112, 114 and 116 protons.

Owing to the high precision of the alpha-particle energy measurements (resolution 0.5%), any other interpretation of the atomic numbers of the observed decays would have been in strong contradiction with the general characteristics of alpha decay.

Finally, in the spontaneous fission of 280/110, the fission fragment energy measured in the detectors amounted to 206 MeV. This corresponds to a mean fission fragment kinetic energy of 230 MeV (taking into account the energy loss in the dead layer of the detector), which is characteristic of the fission of a rather heavy nucleus. In the thermal-neutron fission of uranium, the corresponding energy is 168 MeV.

Increased stability

Comparing spontaneous fission and alpha-decay half-lives for nuclei with 110 and 112 protons with earlier data for the lighter isotopes of these elements shows a significant increase in the stability of heavy nuclei with increasing neutron number. The addition of 10 neutrons to the 270/110 nucleus makes the half-life a hundred thousand times as long. The isotopes of element 112 with masses 277 and 284 exhibit a comparable effect.

Comparing the experimental alpha-decay energy values with those calculated in different models shows that the difference between experiment and theory is in the range ±0.5 MeV. Without going into any detailed analysis, the conclusion can be drawn that theoretical models developed during the last 35 years and predicting the decisive influence of nuclear structure on the stability of superheavy elements are well founded, not only qualitatively but also, to a certain extent, quantitatively.

This increased stability significantly extends research in the region of superheavy elements, opening up the study of such areas as their chemical properties and the measurement of their atomic masses. The development of the experimental techniques will make it possible to advance into the region of even heavier nuclei – expect more postcards in the years to come!

The experiments were carried out in collaboration with the Analytical and Nuclear Chemistry Division of the Lawrence Livermore National Laboratory.

*Evidence for the superheavy nucleus 118, reported by Berkeley scientists in 1999, has been retracted.