Our understanding of nature’s fundamental constituents owes much to particle colliders. Notable discoveries include the W and Z bosons at CERN’s Super Proton Synchrotron in the 1980s, the top quark at Fermilab’s Tevatron collider in the 1990s and the Higgs boson at CERN’s LHC in 2012. While colliding particles at ever higher energies is still one of the best ways to search for new phenomena, experiments at lower energies can also address fundamental-physics questions.

The Physics Beyond Colliders kick-off workshop, held at CERN on 6–7 September, brought together physicists from the theory, experiment and accelerator communities to explore the full range of research opportunities offered by the CERN complex. The timescale under consideration extends to about 2040, corresponding roughly to the operational lifetime of the LHC and its high-luminosity upgrade. The associated study group has been charged with bringing together interested parties and exploring the options in appropriate depth, with the aim of providing input to the next update of the European Strategy for Particle Physics towards the end of the decade.

As the name of the workshop and study group suggests, a lot of interesting physics can be tested in experiments that are complementary to colliders. Ideas discussed at the September event ranged from searching for particles with masses far below an eV up to more than 10¹⁵ eV, to prospects for dark matter and even dark-energy studies.

Theoretical motivation

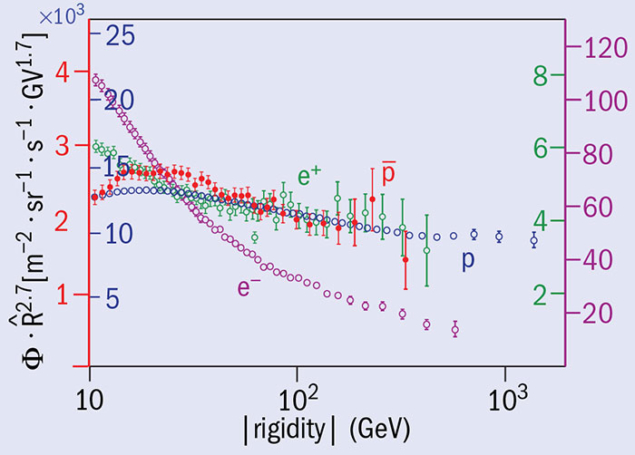

Searches for electric and magnetic dipole moments of elementary particles are a rich experimental playground, and the enormous precision of such experiments allows a wide range of new physics to be tested. The long-standing deviation of the muon’s anomalous magnetic moment (g-2) from the Standard Model prediction could indicate the presence of relatively heavy supersymmetric particles, but also of relatively light “dark photons”, which are also a possible messenger to the dark-matter sector. Confirming or refuting the original g-2 measurement, together with experimental tests of other models, will provide important input on this issue.

Electric dipole moments are inherently linked to the violation of charge–parity (CP) symmetry, which is a necessary ingredient in explaining the origin of the baryon asymmetry of the universe. While CP violation has been observed in weak interactions, it is notably absent in strong interactions: for example, no electric dipole moment of the neutron has been observed so far. Resolving this so-called strong-CP problem is a major motivation for hypothesising the existence of a new elementary particle called the axion. Axion-like particles are not only natural dark-matter candidates; they also arise generically in well-motivated extensions of the Standard Model, such as string theory. Beyond dark matter, axions could help to explain a number of astrophysical puzzles. They may also be connected to inflation in the very early universe and to the generation of neutrino masses, and could even play a role in the hierarchy problem.

Neutrinos, too, are the source of a wide range of puzzles, and of opportunities. Interestingly, essentially all current experimental results and observations – including dark matter – can be explained by a very minimal extension of the Standard Model: the addition of three right-handed neutrinos. Theorists’ ideas go much further, however, motivating the existence of whole sectors of weakly coupled particles below the Fermi scale.

Ambitions may even lead to tackling one of the most challenging of questions: dark energy. While the effective couplings between ordinary matter and dark energy must be quite small, there is still significant room for observable effects in low-energy experiments, for example using atom interferometry.

Experimental opportunities

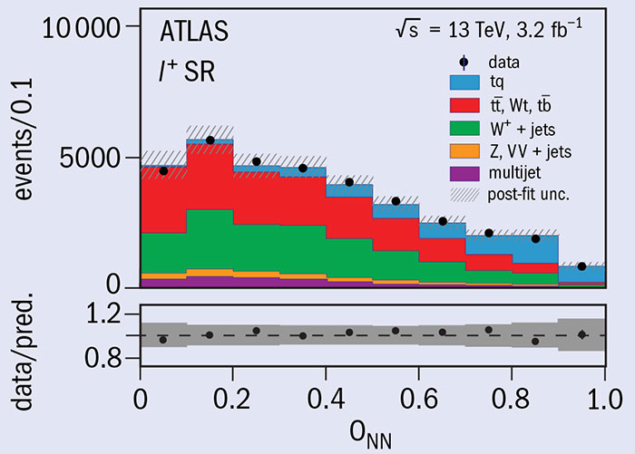

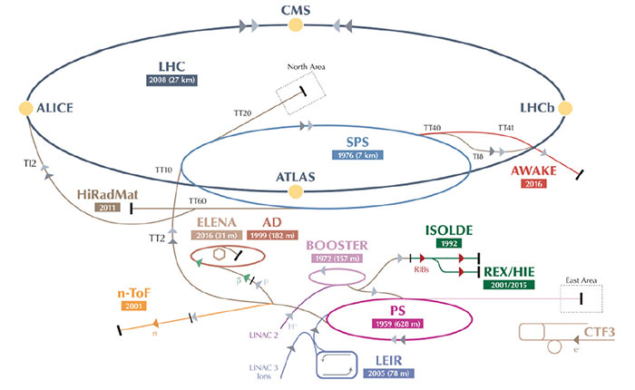

CERN’s priority over the coming years is clearly the full exploitation of the LHC – first in its present guise and then, from 2026, as the High-Luminosity LHC (HL-LHC). The HL-LHC places stringent demands on beam intensity and related characteristics, and a major upgrade of the LHC injectors is planned during Long Shutdown 2 (LS2), beginning in 2019, to provide the beams needed in the HL-LHC era. Even so, the LHC itself consumes relatively few protons, leaving the other facilities at CERN free to exploit the considerable beam-production capabilities of the accelerator complex.

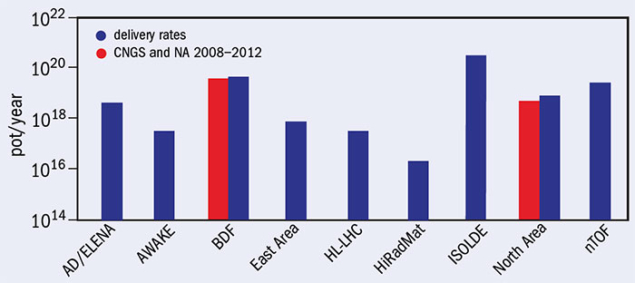

CERN already has a diverse and far-sighted experimental programme based on the LHC injectors. This spans the ISOLDE radioactive beam facility, the neutron time-of-flight facility (nTOF), the Antiproton Decelerator (AD), the High-Radiation to Materials (HiRadMat) facility, the plasma-wakefield experiment AWAKE, and the North and East experimental areas. CERN’s proton-production capabilities are already heavily used and will remain in high demand in the coming years. A preliminary forecast indicates that, after the injector upgrade, there could be enough capacity to support one more major SPS experiment.

The AD is a classic example of CERN’s existing non-collider facilities. This unique antimatter factory hosts several experiments that study the properties of antiprotons and anti-hydrogen atoms in detail. In this experimental domain, technology evolves on much shorter timescales than it does for large high-energy detectors. The AD is currently being upgraded with the ELENA ring, which will increase the trapping efficiency of anti-hydrogen atoms by two orders of magnitude and will allow different experiments to operate in parallel. After LS2, ELENA will serve all AD experiments and secure CERN’s antimatter research into the next decade. The ISOLDE and nTOF facilities also offer opportunities to investigate fundamental questions such as the unitarity of the quark-mixing matrix, parity violation or the masses of the neutrinos.

The three main experiments of the North Area – NA61, COMPASS and NA62 – have well-defined programmes until LS2, and all have longer-term plans. After completing its search for a QCD critical point, NA61 plans to further study QCD deconfinement, with emphasis on charm signals. It will also remain a unique facility for constraining hadron production in the primary proton targets of future neutrino beams in the US and Japan. The Common Muon and Proton Apparatus for Structure and Spectroscopy (COMPASS) experiment, meanwhile, intends to extend its studies of hadron structure and spectroscopy using higher-intensity RF-separated beams, probing fundamental physics linked to quantum chromodynamics.

Image credit: Giovanni Rumolo.

An independent proposal submitted to the workshop involved using muon beams from the SPS to make precision measurements of μ–e elastic scattering, which could halve the present theoretical hadronic uncertainty on g-2 for future precision experiments. Once NA62 reaches its intended precision on the rare decay K⁺ → π⁺νν̄, the collaboration plans comprehensive measurements in the kaon sector, in addition to one year of operation in beam-dump mode to search for heavy neutral leptons such as massive right-handed neutrinos. In the longer term, NA62 aims to study the rare decay K⁰ → π⁰νν̄, which would require a similar but expanded apparatus and a high-intensity K⁰ beam. More generally, rare decays might reveal deviations from the Standard Model that indicate the presence of new heavy particles altering the decay rates.
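The reasoning behind the μ–e proposal can be made explicit. The leading-order hadronic contribution to the muon anomaly a_μ = (g−2)/2 can be written as an integral of the hadronic contribution to the running electromagnetic coupling, Δα_had(t), over space-like momentum transfers t < 0, which is the region that elastic μ–e scattering probes directly. The expression below is the space-like formulation found in the literature, shown as a sketch; it is not taken from the workshop submission itself:

```latex
a_\mu^{\mathrm{HLO}} = \frac{\alpha}{\pi}\int_0^1 \mathrm{d}x\,(1-x)\,\Delta\alpha_{\mathrm{had}}\!\left[t(x)\right],
\qquad t(x) = \frac{x^2 m_\mu^2}{x-1} < 0 \quad (0 < x < 1).
```

Measuring the shape of the μ–e differential cross-section therefore constrains Δα_had(t), and hence the hadronic term, independently of the time-like e⁺e⁻ → hadrons data used in the standard dispersive evaluation.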

Fixed ambitions

The September workshop heard proposals for ambitious new fixed-target facilities that would complement existing experiments at CERN. A completely new development at CERN’s North Area is the proposed SPS beam-dump facility (BDF). Beam dump in this context implies a target that absorbs all incident protons and contains most of the cascade generated by the primary-beam interaction. The aim is a general-purpose fixed-target facility that, in its initial phase, would host a broad search for weakly interacting “hidden” particles. The Search for HIdden Particles (SHiP) experiment plans to exploit the unique high-energy, high-intensity features of the SPS beam to perform a comprehensive investigation of the dark sector in the few-GeV mass range (CERN Courier March 2016 p25). A complementary approach, based on observing missing energy in the products of high-energy interactions, is currently being explored by NA64 on an electron test beam, and the experiment team has proposed to extend its programme to muon and hadron beams in the future.

From an accelerator perspective, the BDF is a challenging undertaking and will involve the development of a new extraction line and a sophisticated target and target complex with due regard to radiation-protection issues. More generally, the foreseen North Area programme requires high intensity and slow extraction from the SPS, and this poses some serious accelerator challenges. A closer look at these reveals the need for a concerted programme of studies and improvements to minimise extraction beam loss and associated activation of hardware with its attendant risks.

Fixed-target experiments with LHC beams could be carried out using either crystal extraction or an internal gas jet, and initially these might operate in parasitic mode upstream of existing detectors (LHCb or ALICE). Combined with the high LHC beam energy, an internal gas target would open up a new kinematic range for hadron and heavy-ion measurements, while beam extraction using crystals was proposed to measure the magnetic moments of short-lived baryons.
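Why bent crystals give access to magnetic moments can be summarised in one relation. For a channelled particle with Lorentz factor γ ≫ 1 and gyromagnetic factor g that is deflected through the crystal bending angle θ_C, the spin rotates relative to the momentum by approximately the angle Φ below; measuring this rotation from the angular distribution of the baryon’s decay products then yields g. This is the standard leading-order relation of the bent-crystal technique, quoted here as a sketch rather than as a detail of the workshop proposal:

```latex
\Phi \simeq \gamma\,\frac{g-2}{2}\,\theta_C \qquad (\gamma \gg 1)
```

The large Lorentz factors available at LHC energies both enlarge Φ and extend the decay length, which is what lets short-lived baryons traverse the crystal before decaying.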

New facilities to complement fixed-target experiments are also under consideration. A small all-electric storage ring would provide a precision measurement of the proton electric dipole moment (EDM) and could test for new physics at the 100 TeV scale, while a mixed electric/magnetic ring would extend such measurements to the deuteron EDM. The physics motivation for these facilities is strong, and from an accelerator standpoint such storage rings are an interesting challenge in their own right (CERN Courier September 2016 p27).
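Why the proton measurement can use an all-electric ring while the deuteron needs magnetic fields as well can be sketched from the standard spin-precession (Thomas–BMT) equation. In a purely electric ring the spin precesses relative to the momentum at a rate proportional to G − 1/(γ² − 1), where G = (g − 2)/2 is the particle’s magnetic-moment anomaly; keeping the spin “frozen” along the momentum therefore requires a “magic” momentum p = mc/√G, which exists only for G > 0. The Python below assumes that idealised case (no EDM term, no field errors) and rounded CODATA values for masses and anomalies; the function and constant names are our own.

```python
import math

# Rounded CODATA values (MeV/c^2 and dimensionless anomaly G); illustrative only.
PROTON = {"name": "proton", "mass_mev": 938.272, "G": 1.792847}
DEUTERON = {"name": "deuteron", "mass_mev": 1875.613, "G": -0.142987}


def magic_momentum_mev(mass_mev: float, G: float):
    """Momentum (MeV/c) at which spin precession relative to the momentum
    vanishes in a purely electric ring, i.e. G - 1/(gamma^2 - 1) = 0.

    Returns None when no real solution exists (G <= 0).
    """
    if G <= 0:
        return None
    # gamma^2 - 1 = (p / mc)^2 = 1/G  =>  p = mc / sqrt(G)
    return mass_mev / math.sqrt(G)


def kinetic_energy_mev(mass_mev: float, momentum_mev: float) -> float:
    """Relativistic kinetic energy T = sqrt(p^2 + m^2) - m (natural units)."""
    return math.hypot(momentum_mev, mass_mev) - mass_mev


for particle in (PROTON, DEUTERON):
    p = magic_momentum_mev(particle["mass_mev"], particle["G"])
    if p is None:
        print(f"{particle['name']}: no magic momentum in an all-electric ring")
    else:
        T = kinetic_energy_mev(particle["mass_mev"], p)
        print(f"{particle['name']}: p = {p:.1f} MeV/c, T = {T:.1f} MeV")
```

Run as is, it prints a magic momentum of about 700.7 MeV/c (kinetic energy about 232.8 MeV) for the proton and reports no solution for the deuteron, whose negative G is why its frozen-spin ring has to combine electric and magnetic bending.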

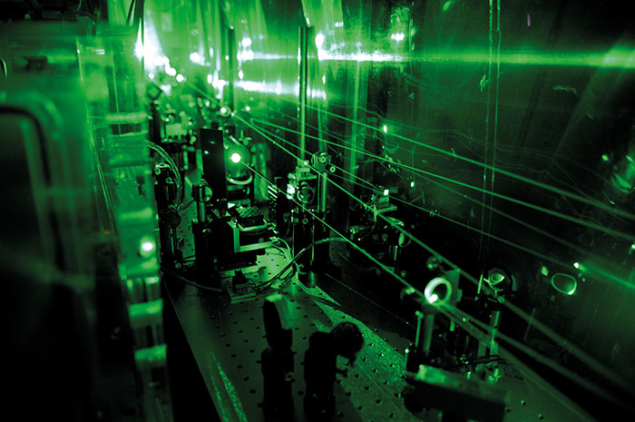

A dedicated gamma factory is another exciting option being explored. Partially stripped ions interacting with photons from a laser have the potential to provide a powerful source of gamma rays. Driven by the LHC, such a facility would increase by seven orders of magnitude the intensity currently achievable in electron-driven gamma-ray beams. The proposed nuSTORM project, meanwhile, would provide well-defined neutrino beams for precise measurements of the neutrino cross-sections and represent an intermediate step towards a neutrino factory or a muon collider.
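The basic kinematics behind the gamma factory can be sketched as follows. A laser photon meeting an ion head-on is Doppler-shifted up by γ(1 + β) ≈ 2γ into the ion’s rest frame, where it can resonantly excite an atomic transition of the electrons the partially stripped ion still carries; the photon re-emitted by the ion is boosted again by up to the same factor in the lab, so the highest gamma-ray energy is about 4γ² times the laser photon energy. This is textbook kinematics (atomic recoil neglected), not the project’s design; the Lorentz factor and laser energy below are hypothetical values chosen only for illustration.

```python
import math


def doppler_factor(gamma: float) -> float:
    """Head-on relativistic Doppler factor gamma * (1 + beta)."""
    if gamma < 1.0:
        raise ValueError("Lorentz factor must be >= 1")
    beta = math.sqrt(1.0 - 1.0 / gamma**2)
    return gamma * (1.0 + beta)


def ion_frame_energy_ev(laser_ev: float, gamma: float) -> float:
    """Energy of a head-on laser photon as seen in the ion rest frame.
    Resonant excitation requires this to match an atomic transition of the
    ion's remaining electrons."""
    return laser_ev * doppler_factor(gamma)


def max_emitted_energy_ev(ion_frame_ev: float, gamma: float) -> float:
    """Lab-frame energy of a photon re-emitted exactly along the beam
    direction (upper edge of the gamma-ray spectrum, recoil neglected)."""
    return ion_frame_ev * doppler_factor(gamma)


# Hypothetical, illustrative inputs (not parameters of the actual proposal).
gamma = 3000.0   # Lorentz factor of the ion beam
laser_ev = 1.2   # laser photon energy, roughly that of a 1 micron laser

seen_by_ion = ion_frame_energy_ev(laser_ev, gamma)
emitted_max = max_emitted_energy_ev(seen_by_ion, gamma)
print(f"photon energy in ion frame: {seen_by_ion / 1e3:.1f} keV")  # 7.2 keV
print(f"maximum emitted energy: {emitted_max / 1e6:.1f} MeV")      # 43.2 MeV
```

The quadratic dependence on γ is what makes a high-energy ion beam such as the LHC’s attractive as a driver: modest optical photons are converted into gamma rays in the tens-of-MeV range and above.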

Last but not least, there are several non-accelerator projects that stand to benefit from CERN’s technological expertise and infrastructure, in line with the existing CAST and OSQAR experiments. CAST (CERN Axion Solar Telescope) uses one of the LHC dipole magnets to search for axions produced in the Sun, while OSQAR attempts to produce axions in the laboratory. Researchers working on IAXO, the next-generation axion helioscope foreseen as a significantly more powerful successor to CAST, have expressed great interest in co-operating with CERN on the design and running of the experiment’s large toroidal magnet. The high-field magnets developed at CERN would also increase the reach of future axion searches in the laboratory as a follow-up of OSQAR at CERN or ALPS at DESY. DARKSIDE, a flagship dark-matter search to be sited in Gran Sasso, also has technological synergies with CERN in the cryogenics, liquid-argon and silicon-photomultiplier domains.

Next steps

Working groups are now being set up to assess the physics case of the proposed projects in a global context, as well as their feasibility and possible implementation at CERN or elsewhere. A follow-up Physics Beyond Colliders workshop is foreseen in 2017, and the final deliverable is due towards the end of 2018. It will consist of a summary document that will help the European Strategy update group to define its direction for non-collider fundamental-particle-physics research in the next decade.