Image credit: D Nölle/DESY.

The European X-ray Free Electron Laser (European XFEL), now entering operations at Hamburg in Germany, will generate ultrashort X-ray flashes at a rate of 27,000 per second with a peak brilliance one billion times higher than the best conventional X-ray sources. The outstanding characteristics of the facility will open up completely new research opportunities for scientists and industrial users (see “Europe enters the extreme X-ray era”). Involving close co-operation with nearby DESY and other organisations worldwide, the European XFEL is a joint effort between many countries. No fewer than 17 European institutes contributed to the accelerator complex, with the largest in-kind (> 70%) and other contributions coming from DESY.

The story of the European XFEL is a wonderful example of R&D synergy between the high-energy physics and light-source worlds. At the heart of the European XFEL are superconducting radio-frequency (SRF) cavities that allow the 1.4 km-long linac to accelerate electrons highly efficiently. Despite the clear benefits of using SRF cavities, before the mid-1990s the technology was not mature enough, and was too expensive, to be practical for a large facility. Experience gained at DESY and other major accelerator facilities – including LEP at CERN and CEBAF at Jefferson Lab – changed that picture. It became clear that superconducting accelerating structures with reasonably large gradients can produce high-energy electron beams in long continuous linac sections.

Enter TESLA

A major character in the European XFEL story is the TESLA (TeV Energy Superconducting Linear Accelerator) collaboration, which was founded in 1990 by key players of the SRF community. Among its challenges was to make SRF cavities more affordable. DESY offered to host essential infrastructure and a test facility to operate newly designed accelerator modules housing eight standardised cavities. The first module was built in the mid-1990s in collaboration with many of the later contributors to the European XFEL, and the first electron beam was accelerated in 1997.

Image credit: D Nölle/DESY.

The enormous flexibility in how electron bunches can be structured has meant that there has been a close connection between free-electron lasers and superconducting accelerator technology from the beginning: examples can be found at Stanford University, Darmstadt University, Dresden-Rossendorf, Jefferson Lab and DESY. From the start of the TESLA R&D, it was envisaged that SRF technology would drive a superconducting linear collider operating at a centre-of-mass energy of 500 GeV, with the possibility of extending this to 800 GeV. This facility would have had two linear accelerators pointing towards one another: one for electrons, which would also be used to drive an X-ray laser facility, and one for positrons. At the time, high-energy physicists were weighing up other linear-collider designs in the US and Japan, but TESLA was unique in its choice of superconducting accelerating cavities. In 1997, DESY and the TESLA collaboration published a Conceptual Design Report for a superconducting linear collider with an integrated X-ray laser facility.

Although DESY was preparing for a hard-X-ray FEL, the first goal was to build an intermediate facility operating at lower photon energies (corresponding to an output in the VUV region). In 2005 the VUV-FEL at DESY (today known as FLASH) produced laser light at a wavelength of 30 nm based on the principle of self-amplified spontaneous emission (SASE), which allows the generation of coherent X-ray light. The project preparation phase for the European XFEL began in 2007, with the official start declared in 2009 after the foundation of the European XFEL company. Plans to build a linear collider at DESY were dropped, but in 2004 the TESLA design was chosen for a new International Linear Collider (ILC). This machine is now “shovel ready” and the Japanese government has expressed interest in hosting it, although a final decision is awaited. Since the European XFEL uses TESLA technology at a large scale, the now-finished superconducting linac can be considered a prototype for the linear collider. Moreover, the successful technology transfer with industry that underpinned the construction of the European XFEL serves as a model for a worldwide linear-collider effort.

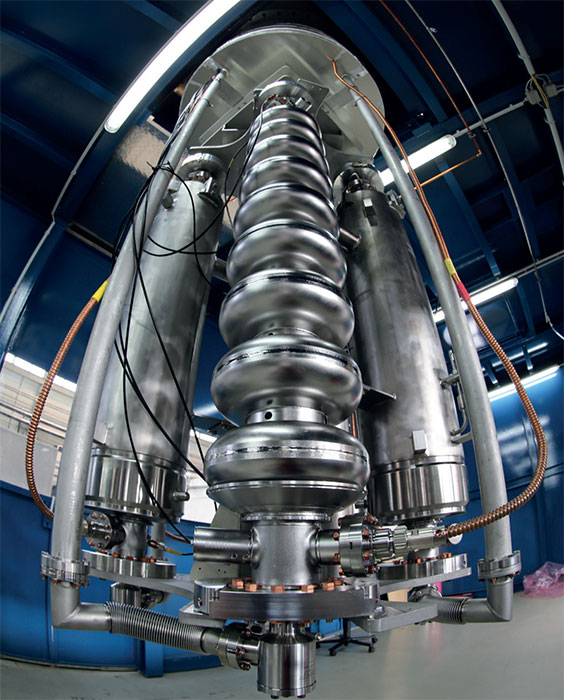

The European XFEL, measuring 3.4 km in length, begins with the injector, which comprises a normal-conducting RF electron gun with a high bunch charge and low emittance. This is followed by a standard superconducting eight-cavity XFEL accelerator module, which takes the electron bunch to an energy of around 130 MeV. A harmonic 3.9 GHz accelerator module (provided by INFN and DESY) further alters the longitudinal beam profile, while a laser heater provided by Uppsala University increases the uncorrelated energy spread. At the end of the injector, 600 μs-long electron-bunch trains of typically 500 pC bunches are available for acceleration.
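As a rough consistency check, the bunch-train figures above can be related to the 27,000 flashes per second quoted at the start of the article. The minimal sketch below assumes that trains repeat at 10 Hz, a value not stated in this article, and uses illustrative function names.

```python
# Back-of-the-envelope bunch-train arithmetic.
# ASSUMPTION: bunch trains repeat at 10 Hz (not stated in the article).

PULSES_PER_SECOND = 27_000   # flashes per second, from the article
TRAIN_LENGTH_S = 600e-6      # 600 microsecond bunch train, from the article
BUNCH_CHARGE_C = 500e-12     # typical 500 pC bunch, from the article
TRAIN_RATE_HZ = 10.0         # assumed train repetition rate


def bunches_per_train(pulses_per_second: float, train_rate_hz: float) -> float:
    """Number of bunches that must fit into each train."""
    return pulses_per_second / train_rate_hz


def bunch_spacing_s(train_length_s: float, n_bunches: float) -> float:
    """Average time between bunches if they fill the train evenly."""
    return train_length_s / n_bunches


def train_charge_c(n_bunches: float, bunch_charge_c: float) -> float:
    """Total charge carried by one full train."""
    return n_bunches * bunch_charge_c


n_bunches = bunches_per_train(PULSES_PER_SECOND, TRAIN_RATE_HZ)
spacing = bunch_spacing_s(TRAIN_LENGTH_S, n_bunches)
charge = train_charge_c(n_bunches, BUNCH_CHARGE_C)

print(f"bunches per train: {n_bunches:.0f}")
print(f"bunch spacing:     {spacing * 1e9:.0f} ns")
print(f"charge per train:  {charge * 1e6:.2f} uC")
```

Under the 10 Hz assumption, each train would carry 2700 bunches spaced by a little over 200 ns, which corresponds to a bunch frequency of about 4.5 MHz within the train.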

Image credit: R Frommann.

Once in the main linac of the European XFEL, the electron beam is accelerated in three sections. The first consists of four superconducting XFEL modules and operates at a fairly modest gradient (far below the XFEL design gradient of 23.6 MV/m). The second linac section consists of 12 accelerator modules, from which the beam emerges with a relative energy spread of 0.3% at 2.4 GeV. The third and last linac section consists of 80 accelerator modules with an installed length of just under 1 km. Bunch-compressor sections between the three main linac sections include dipole-magnet chicanes, further focusing elements and beam diagnostics.

Taking into account all installed main-linac accelerator modules, the achievable electron beam energy of the European XFEL is above its design energy of 17.5 GeV, although the exact figure will depend on optimising the RF control. The complete linac is suspended from the ceiling to keep the tunnel floor free for transport and the installation of electronics. During accelerator operation the electrons are distributed via fast kicker magnets into one of the two electron beamlines that feed several photon beamlines. Here, undulators provide X-ray photon beams for various experiments (see “Europe enters the extreme X-ray era”).
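The statement that the installed modules can exceed the design energy can be illustrated with a simple upper-bound estimate. The sketch below assumes an active length of about 1.038 m per nine-cell TESLA-type cavity, a figure not given in this article, and idealises every main-linac cavity as running on-crest at the design gradient. All names are illustrative.

```python
# Idealised upper bound on the beam energy from the installed main-linac modules.
# ASSUMPTION: each cavity has an active length of ~1.038 m (not from the article).
# ASSUMPTION: all cavities run on-crest at the design gradient, which the text
# says is not the case for the first linac section.

MAIN_LINAC_MODULES = (4, 12, 80)     # modules per linac section, from the article
CAVITIES_PER_MODULE = 8              # from the article
DESIGN_GRADIENT_MV_PER_M = 23.6      # from the article
INJECTOR_ENERGY_GEV = 0.130          # energy after the injector module
DESIGN_ENERGY_GEV = 17.5             # from the article
CAVITY_ACTIVE_LENGTH_M = 1.038       # assumed


def total_cavities(modules_per_section, cavities_per_module):
    """Total number of cavities in the main linac."""
    return sum(modules_per_section) * cavities_per_module


def upper_bound_energy_gev(n_cavities, gradient_mv_per_m, cavity_length_m,
                           start_energy_gev):
    """Start energy plus the gain if every cavity delivers the full gradient."""
    gain_gev = n_cavities * gradient_mv_per_m * cavity_length_m / 1000.0
    return start_energy_gev + gain_gev


n_cavities = total_cavities(MAIN_LINAC_MODULES, CAVITIES_PER_MODULE)
energy_gev = upper_bound_energy_gev(
    n_cavities, DESIGN_GRADIENT_MV_PER_M, CAVITY_ACTIVE_LENGTH_M,
    INJECTOR_ENERGY_GEV,
)

print(f"main-linac cavities: {n_cavities}")
print(f"idealised energy:    {energy_gev:.1f} GeV")
print(f"margin over design:  {energy_gev - DESIGN_ENERGY_GEV:.1f} GeV")
```

With these assumptions the 768 main-linac cavities would give roughly 18.9 GeV, leaving some margin over 17.5 GeV. This is an idealisation rather than a prediction: the first section runs well below the design gradient, and the achievable figure depends on optimising the RF control.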

Meeting the production challenge

The superconducting accelerator modules for the European XFEL linac were contributed by DESY, CEA Saclay and LAL Orsay in France, INFN Milano in Italy, IPJ Swierk and Soltan Institute in Poland, CIEMAT in Spain and BINP in Russia. More than 100 modules were needed, and although they were based on a prototype developed for the TESLA linear collider, they had to be modified for large-scale industrial production. DESY, which had responsibility for the construction and operation of the particle accelerator, developed a consortium scheme in which collaborators could contribute in-kind, either by producing sub-components or by assuming responsibility for module assembly or component testing. A sophisticated supply chain was established and the pioneering work at FLASH provided invaluable help in dealing with initial challenges.

A standard accelerator module contains eight superconducting cavities, each supplied by one RF power coupler, and a superconducting quadrupole package, which includes correction coils and a beam-position monitor. Each module also contains cold vacuum components such as bellows and valves, and frequency tuners. During the R&D and project preparation phases, less than one accelerator module per year was assembled, so it took a factor-30 increase in production rate to build the European XFEL. Two European companies – Research Instruments in Germany and Zanon in Italy – shared the task of producing 800 superconducting cavities from solid niobium. Cavity string and module assembly took place at CEA Saclay/Irfu in completely new infrastructure called the XFEL village. Assembly was directly affected by the availability of all accelerator-module sub-components, and any break in the supply chain was seen as a risk for the overall project schedule. In the end, a total of 96 successfully tested XFEL modules were made available for tunnel installation within a period of just two years.

Image credit: D Nölle/DESY.

The operation of the superconducting accelerator modules also requires extensive dedicated infrastructure. DESY provided the RF high-power system and developed the required 10 MW multi-beam klystrons with industrial partners. A total of 27 klystrons, each supplying RF power for 32 superconducting structures (four accelerator modules), were ordered from two vendors. Precision regulation of the RF fields inside the accelerating cavities, which is essential to provide a highly reproducible and stable electron beam, is achieved by a powerful control system developed at DESY. BINP Novosibirsk produced and delivered major cryogenic equipment for the linac, while the cryogenic plant itself (an in-kind contribution from DESY) guarantees pressure variations will stay below 1%. The largest visible contributions to the warm beamline sections are the more than 700 beam-transport magnets and the 3 km vacuum system in the different sections. While most of the magnets were delivered by the Efremov Institute in St Petersburg, a small fraction was built by BINP Novosibirsk and completed at Stockholm University. Many metres of beamline, be it simple straight chambers or the more sophisticated flat bunch-compressor chambers, were also fabricated by BINP Novosibirsk.

State-of-the-art electron-beam diagnostics is vital for the success of the European XFEL. Thus 64 screens and 12 wire-scanner stations, 460 beam-position monitors of eight different types, 36 toroids and six dark-current monitors are distributed along the accelerator. Longitudinal bunch properties are measured by bunch-compression monitors, beam-arrival monitors, electro-optical devices and transverse deflecting systems. Major contributions to the electron-beam diagnostics came from DESY, PSI in Switzerland, CEA Saclay in France and INR Moscow in Russia.

Technology goes full circle

Commissioning of the European XFEL accelerator began in December 2016 with the cool-down of the complete cryogenic system. First beam was injected into the main linac in January 2017, and by March bunches with a sufficient beam quality to allow lasing were accelerated to 12 GeV and stopped in a beam dump. After passing this beam through the “SASE1” undulator, first lasing at a wavelength of 0.9 nm was observed on 2 May. Further improvements to the beam quality and alignment led to lasing at 0.2 nm on 24 May. More than 90% of the installed accelerator modules are now in RF operation, with effective accelerating gradients reaching the expected performance in fully commissioned stations.
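To put the lasing wavelengths quoted above into the photon-energy terms often used for X-ray sources, one can apply E = hc/λ. The short sketch below uses hc ≈ 1239.84 eV nm; the function name is illustrative.

```python
# Convert a photon wavelength to a photon energy using E = h*c / wavelength.

HC_EV_NM = 1239.84193  # Planck constant times speed of light, in eV*nm


def photon_energy_kev(wavelength_nm: float) -> float:
    """Photon energy in keV for a wavelength given in nanometres."""
    if wavelength_nm <= 0:
        raise ValueError("wavelength must be positive")
    return HC_EV_NM / wavelength_nm / 1000.0


# Wavelengths reported for first lasing (2 May) and after tuning (24 May).
first_lasing_kev = photon_energy_kev(0.9)
tuned_lasing_kev = photon_energy_kev(0.2)

print(f"0.9 nm -> {first_lasing_kev:.2f} keV")
print(f"0.2 nm -> {tuned_lasing_kev:.2f} keV")
```

The step from 0.9 nm to 0.2 nm therefore corresponds to moving from photon energies of about 1.4 keV to about 6.2 keV.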

The first hard-X-ray SASE free-electron laser, the Linac Coherent Light Source (LCLS) at SLAC in the US, was based on a normal-conducting accelerator. The LCLS-II upgrade now aims for continuous-wave operation using 280 superconducting cavities of essentially the same design as those of the European XFEL. Improvements to the superconducting technology were made to further reduce the cryogenic load of the accelerator structures. New techniques such as nitrogen doping and infusion, developed by Fermilab and other LCLS-II partners, are also essential, and established procedures and expertise with series production will benefit future FEL user operation. The European SRF expertise and collaboration scheme that now exist also sketch out a mechanism for a European in-kind contribution to a Japan-hosted ILC.

The European XFEL is one of the largest accelerator-based research facilities in the world, and is driven by the longest and most advanced superconducting linac ever constructed. This was possible thanks to the great collaborative effort and team spirit of all partners involved in this project over the past 20 years or more.