The demanding and creative environment of fundamental science is a fertile breeding ground for new technologies, especially unexpected ones. Many significant technological advances, from X-rays to nuclear magnetic resonance and the Web, were not themselves a direct objective of the underlying research, and particle accelerators exemplify this dynamic transfer from the fundamental to the practical. From isotope separation to X-ray radiotherapy and, more recently, hadron therapy, there are now many categories of accelerators dedicated to diverse user communities across the sciences, academia and industry. These include synchrotron light sources, X-ray free-electron lasers (XFELs) and neutron spallation sources, and enable research that often has direct societal and economic implications.

During the past decade or so, high-gradient linear accelerator technology developed for fundamental exploration has matured to the point where it is being transferred to applications beyond high-energy physics. Specifically, the unique requirements for the Compact Linear Collider (CLIC) project at CERN have led to a new high-gradient “X-band” accelerator technology that is attracting the interest of light-source and medical communities, and which would have been difficult for those communities to advance themselves due to their diverse nature.

Set to operate until the mid-2030s, the Large Hadron Collider (LHC) collides protons at an energy of 13 TeV. One possible path forward for particle physics in the post-LHC, “beyond the Standard Model”, era is a high-energy linear electron–positron collider. CLIC envisions an initial facility with a centre-of-mass energy of 380 GeV, focused on precision measurements of the Higgs boson and the top quark, which are promising targets to search for deviations from the Standard Model (CERN Courier November 2016 p20). The machine could then, guided by the results from the LHC and the initial-stage linear collider, be lengthened to reach energies up to 3 TeV for detailed studies of this high-energy regime. CLIC is overseen by the Linear Collider Collaboration along with the International Linear Collider (ILC), a lower-energy electron–positron machine envisaged to operate initially at 250 GeV (CERN Courier January/February 2018 p7).

The accelerator technology required by CLIC has been under development for around 30 years and the project’s current goals are to provide a robust and detailed design for the update of the European Strategy for Particle Physics, with a technical design report by 2026 if resources permit. One of the main challenges in making CLIC’s 380 GeV initial energy stage cost effective, while guaranteeing its reach to 3 TeV, is generating very high accelerating gradients. The gradient needed for the high-energy stage of CLIC is 100 MV/m, which equates to 30 km of active acceleration. For this reason, the CLIC project has made a major investment in developing high-gradient radio-frequency (RF) technology that is feasible, reliable and cheap.
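
The relation between gradient and length quoted above is simple arithmetic, and a short sketch makes it explicit. It assumes singly charged particles (so 1 MV of accelerating voltage gives 1 MeV of energy) and ignores everything except the active accelerating length; the function name `active_length_km` is purely illustrative and not part of any CLIC software.

```python
def active_length_km(energy_gev: float, gradient_mv_per_m: float) -> float:
    """Active accelerating length (km) needed to reach a given total energy.

    Assumes singly charged particles, so an accelerating gradient of
    1 MV/m adds 1 MeV of energy per metre of structure.
    """
    energy_mev = energy_gev * 1e3          # GeV -> MeV
    length_m = energy_mev / gradient_mv_per_m
    return length_m / 1e3                  # m -> km


# 3 TeV (3000 GeV) of total acceleration at the 100 MV/m quoted in the text
print(active_length_km(3000, 100))  # 30.0

# Illustrative comparison only: the same energy at a 30 MV/m gradient
print(active_length_km(3000, 30))   # 100.0
```

The second call shows why gradient matters so much for cost: at roughly a third of the gradient, the active length more than triples.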

Evading obstacles

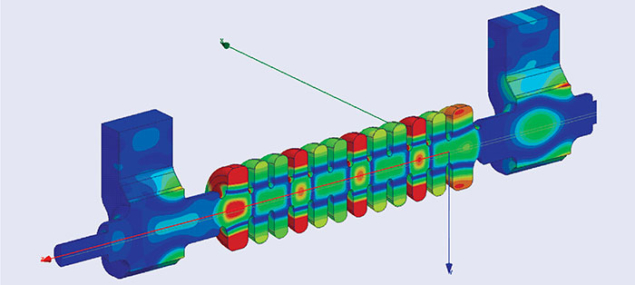

Maximising the accelerating gradient leads to a shorter linac and thus a less expensive facility. But there are two main limiting factors: the increasing need for peak RF power and the limited ability of accelerating-structure surfaces to support increasingly strong electromagnetic fields. Circumventing these obstacles has been the focus of CLIC activities for several years.

One way to mitigate the increasing demand for peak power is to use higher frequency accelerating structures (figure 1), since the power needed for a fixed beam energy goes up linearly with gradient but falls approximately as the inverse square root of the RF frequency. The latest XFELs, SACLA in Japan and SwissFEL in Switzerland, operate at “C-band” frequencies of 5.7 GHz, which enables a gradient of around 30 MV/m and a peak power requirement of around 12 MW/m in the case of SwissFEL. This increase in frequency required a significant technological investment, but CLIC’s demand for 3 TeV energies and high beam current requires a peak power per metre of 200 MW/m! This challenge has been under study since the late 1980s, with CLIC first focusing on 30 GHz structures and the Next Linear Collider/Joint Linear Collider (NLC/JLC) community developing 11.4 GHz “X-band” technology. The twists and turns of these projects are many, but the NLC/JLC project ceased in 2005 and CLIC shifted to X-band technology in 2007. CLIC also generates high peak power using a two-beam scheme in which RF power is locally produced by transferring energy from a low-energy, high-current beam to a high-energy, low-current beam. In contrast to the ILC, CLIC adopts normal-conducting RF technology to go beyond the approximately 50 MV/m theoretical limit of existing superconducting cavity geometries.
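
The scaling quoted at the start of this discussion can be written compactly. As a rough sketch, take P as the total peak RF power, G as the accelerating gradient, f as the RF frequency, E as the fixed beam energy and L as the active length (symbols introduced here for illustration only). Since the length needed is L = E/G, the stated proportionality for the total power implies that the peak power per unit length grows with the square of the gradient:

$$
P \propto \frac{G}{\sqrt{f}}, \qquad L = \frac{E}{G} \quad\Longrightarrow\quad \frac{P}{L} \propto \frac{G^{2}}{\sqrt{f}}
$$

This simple relation neglects the power taken by the beam itself, so it should not be expected to reproduce CLIC’s 200 MW/m figure, which also reflects the high beam current.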

The second main challenge when generating high gradients is more fundamental than the practical peak-power requirements. A number of phenomena come to life when the metal surfaces of accelerating structures are subjected to very high electromagnetic fields, the most prominent being vacuum arcing or breakdown, which induces kicks to the beam that result in a loss of luminosity. A CLIC accelerating structure operating at 100 MV/m will have surface electric fields in excess of 200 MV/m, sometimes leading to the formation of a highly conductive plasma directly above the surface of the metal. Significant progress has been made in understanding how to maximise gradient despite this effect, and a key insight has been the identification of the role of local power flow. Pulsed surface heating is another troubling high-field phenomenon faced by CLIC, where ohmic losses associated with surface currents result in fatigue damage to the outer cavity wall and reduced performance. Understanding these phenomena has been essential to guide the development of an effective design and technology methodology for achieving gradients in excess of 100 MV/m.

Test-stand physics

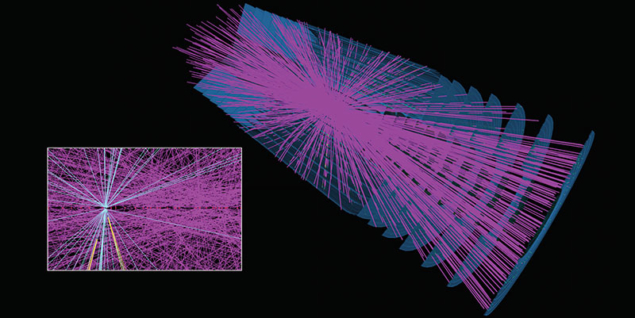

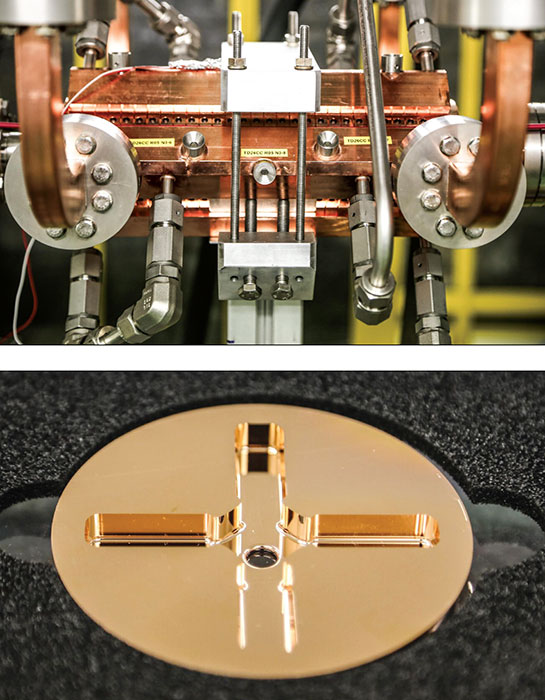

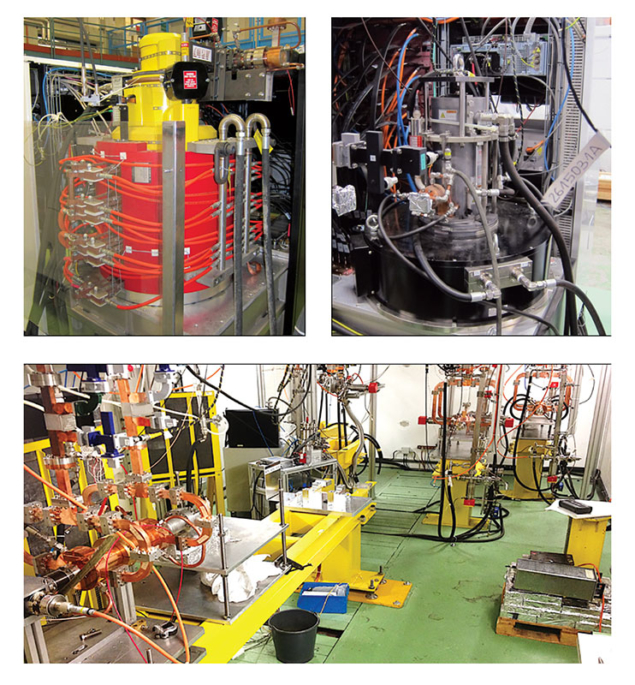

Critical to CLIC’s development of high-gradient X-band technology has been an investment in four test stands, which allowed investigations of the complex, multi-physics effects that govern high-power behaviour in operational structures (figure 2). The test stands provided the RF klystron power, dedicated instrumentation and diagnostics to operate, measure and optimise prototype RF components. In addition, to investigate beam-related effects, one of the stands was fed by a beam of electrons from the former “CTF3” facility. This has since been replaced by the CLEAR test facility, at which experiments will come on line again next year (CERN Courier November 2017 p8).

While the initial motivation for the CLIC test stands was to test prototype components, high-gradient accelerating structures and high-power waveguides, the stands are themselves prototype RF units for linacs – the basic repeatable unit that contains all the equipment necessary to accelerate the beam. A full linac, of course, needs many other subsystems such as focusing magnets and beam monitors, but the existence of four operating units that can be easily visited at CERN has made high-gradient and X-band technology serious options for a number of linac applications in the broader accelerator community. An X-band test stand at KEK has also been operational for many years and the group there has built and tested many CLIC prototype structures.

With CLIC’s primary objective being to provide practical technology for a particle-physics facility in the multi-TeV range, it is rather astonishing that an application requiring a mere 45 MeV beam finds itself benefiting from the same technology. This small-scale project, called Smart*Light, is developing a compact X-ray source for a wide range of applications including cultural heritage, metallurgy, geology and medicine, providing a practical local alternative to a beamline at a large synchrotron light source. Led by the University of Eindhoven in the Netherlands, Smart*Light produces monochromatic X-rays via inverse Compton scattering, in which X-rays are produced by “bouncing” a laser pulse off an electron beam. The project team aims to make the equipment small and inexpensive enough to be integrated in a museum or university setting, and is addressing this objective with a 50 MV/m-range linac powered by one of the two standard CLIC test-stand configurations (a 6 MW Toshiba klystron). Funding has been awarded to construct the first prototype system and, once operational, Smart*Light will pursue commercial production.

Another Compton-source application is the TTX facility at Tsinghua University in China, which is based on a 45 MeV beam. The Tsinghua group plans to increase the energy of the X-rays by upgrading the energy of its electron linac, which must be done by increasing the accelerating gradient because the facility is housed in an existing radiation-shielded building. The energy increase will occur in two steps: the first will raise the accelerating gradient by upgrading parts of the existing S-band 3 GHz RF system, and the second will replace sections with an X-band system to increase the gradient up to 70 MV/m. The Tsinghua X-band power source will also implement a novel “corrector cavity” system to flatten the power-compressed pulse, an approach that is also now part of the 380 GeV CLIC baseline design. Tsinghua has successfully tested a standard CLIC structure to more than 100 MV/m at KEK, demonstrating that high-gradient technology can be transferred, and has taken delivery of a 50 MW X-band klystron for use in a test stand.

Perhaps the most significant X-band application is XFELs, which produce intense and short X-ray bursts by passing a very low-emittance electron beam through an undulator magnet. The electron linac represents a substantial fraction of the total facility cost and the number of XFELs is presently quite limited. Demand for facilities also exceeds the available beam time. Operational facilities include LCLS at SLAC, FERMI at Trieste and SACLA at RIKEN, while the European XFEL in Germany, the Pohang Light Source in Korea and SwissFEL are being commissioned (CERN Courier July/August 2017 p18), and it is expected that further facilities will be built in the coming years.

XFEL applications

CLIC technology, both the high-frequency and high-gradient aspects, has the potential to significantly reduce the cost of such X-ray facilities, allowing them to be funded at the regional and possibly even university scale. The European Union project CompactLight has recently received a design-study grant to examine the benefits of CLIC technology, in combination with other recent advances in injectors and undulators, and to prepare a complete technical design report for a small-scale facility (CERN Courier December 2017 p8).

A similar type of electron linac, in the 0.5–1 GeV range, is being proposed by Frascati Laboratory in Italy for XFEL development, in addition to the study of advanced plasma-acceleration techniques. To fit the accelerator in a building on the Frascati campus, the group has decided to use high-gradient X-band technology for its linac and has joined forces with CLIC to develop it. The cooperation includes Frascati staff visiting CERN to help run the high-gradient test facilities and the construction of their own test stand at Frascati, which is an important advance in testing the laboratory’s capability to use CLIC technology.

In addition to providing a high-performance technology for acceleration, high-gradient X-band technology is the basis for two important devices that manipulate the beam in low-emittance and short-bunch electron linacs, as used in XFELs and advanced development linacs. The first is the energy-spread lineariser, which uses a harmonic of the accelerating frequency to correct the energy spread along the bunch and enable shorter bunches. A few years ago a collaboration between Trieste, PSI and CERN made a joint order for the first European X-band frequency (11.994 GHz) 50 MW klystrons from SLAC, and jointly designed and built the lineariser structures, which have significantly improved the performance of the Elettra light source in Trieste and become an essential element of SwissFEL.

Following the CLIC test stand and lineariser developments, a new commercial X-band klystron has become available, this time at the lower power of 6 MW and supplied by Canon (formerly Toshiba). This new klystron is ideally suited for lineariser systems and one has recently been constructed at the soft X-ray XFEL at SINAP in Shanghai, which has a long-standing collaboration with CLIC on high-gradient and X-band technology. Back in Europe, Daresbury Laboratory has decided to invest in a lineariser system to provide the exceptional control of the electron bunch characteristics needed for its XFEL programme, which is being developed at its CLARA test facility. Daresbury has been working with CLIC to define the system, and is now procuring an RF power system based on the 6 MW Toshiba klystron and pulse compressor. This will certainly be a major step in the ease of adoption of X-band technology.

The second major high-gradient X-band beam-manipulation application is the RF deflector, which is used at the end of an XFEL to measure the bunch characteristics as a function of position along the bunch. High-gradient X-band technology is well suited to this application and there is now widespread interest in implementing such systems. Teams at FLASH2, FLASH-Forward and SINBAD at DESY, SwissFEL and CLIC are collaborating to define common hardware, including a variable polarisation deflector to allow a full 6D characterisation of the electron bunch. SINAP is also active in this domain. The facility is awaiting delivery of three 50 MW CPI klystrons to power the deflectors and will build a standard CLIC test structure for tests at CERN in addition to a prototype X-band XFEL structure in the context of CompactLight.

The rich exchange between different projects in the high-gradient community is typified by PSI and in particular the SwissFEL. Many essential features of the SwissFEL have a linear-collider heritage, such as the micron-precision diamond machining of the accelerating structures, and SwissFEL is now returning the favour. For example, a pair of CLIC X-band test accelerating structures are being tested at CERN to examine the high-gradient potential of PSI’s fabrication technology, showing excellent results: both structures can operate at more than 115 MV/m and demonstrate potential cost savings for CLIC. In addition, the SwissFEL structures have been successfully manufactured to micron precision in a large production series – a level of tolerance that has always been an important concern for CLIC. Now that the PSI fabrication technology is established, the laboratory is building high-gradient structures for other projects such as Elettra, which wishes to increase its X-ray energy and flux but has performance limitations with its 3 GHz linac.

Beyond light sources

High-gradient technology is now working its way beyond electron linacs, particularly in the treatment of cancer. The most common accelerator-based cancer treatment is X-rays, but protons and heavy ions offer many potential advantages. One drawback of hadron therapy is the high cost of the accelerators, which are currently circular. A new generation of linacs offers the potential for smaller, lower-cost facilities with additional flexibility.

The TERA foundation has studied such linac-based solutions and a firm called ADAM is now commercialising a version with a view to building a compact hadron-therapy centre (CERN Courier January/February 2018 p25). To demonstrate the potential of high gradients in this domain, members of CLIC received support from the CERN knowledge transfer fund to adapt CLIC technology to accelerate protons in the relevant energy range, and the first of two structures is now under test. The predicted gradient was above 50 MV/m, and the structure has exceeded 55 MV/m while also behaving consistently when compared with almost 20 CLIC structures. We now know that it is possible to reach high accelerating gradients even for protons, and projects based on compact linacs can now move forward with confidence.

Collaboration has driven the wider adoption of CLIC’s high-gradient technology. A key event took place in 2005 when CERN management gave CLIC a clear directive that, with LHC construction limiting available resources, the study must find outside collaborators. This was achieved thanks to a strong effort by CLIC researchers, also accompanied by a great deal of activity in electron linacs in the accelerator community.

We should not forget that the wider adoption of X-band and high-gradient technology is extremely important for CLIC itself. First, it enlarges the commercial base, driving costs down and reliability up, and making firms more likely to invest. Another benefit is the improved understanding of the technology and its operability by accelerator experts, with a broadened user base bringing new ideas. Harnessing the creative energy of a larger group has already yielded returns to the CLIC study, for instance addressing important industrialisation and cost-reduction issues.

The role of high-gradient and X-band technology is expanding steadily, with applications at a surprisingly wide range of scales. Despite having started in large linear colliders, the use of the technology is now starting to be dominated by a proliferation of small-scale applications. Few of these were envisaged when CLIC was formulated in the late 1980s – XFELs were in their infancy at the time. As the technology is applied further, its performance will rise even more, perhaps even leading to the use of smaller applications to build a higher-energy collider. The interplay of different communities can make advances beyond what any could on their own, and it is an exciting time to be part of this field.