Fill hundreds of copper tubes with a powder of niobium and tin, and then stack them in the form of a cylinder. Draw this out into a composite wire hundreds of kilometres long and barely a millimetre in diameter. Braid it into a rectangular cable and insulate it in fibreglass. Wind it into coils, bake for a week at precisely 650 °C and impregnate with resin. Assemble them with sub-millimetre precision under a compressive stress of one tonne per square centimetre, cool the magnet to a few kelvin and power it with tens of thousands of amps. This is not alchemy. This is a possible recipe for a Nb3Sn magnet.

Whether made of Nb3Sn or higher-performance superconductors, such devices promise to substantially improve the discovery potential of hadron colliders. Since a collider’s energy reach scales as the dipole field times the bending radius, which is set by the size of the tunnel, each additional tesla directly expands the energy frontier.
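
The scaling can be made concrete with the magnetic rigidity of a singly charged particle, p [GeV/c] ≈ 0.2998 B [T] ρ [m]. The minimal Python sketch below assumes ultra-relativistic protons (E ≈ pc) and two identical beams colliding head-on. The LHC dipole bending radius of about 2804 m used in the check is an outside value, not a figure from this article.

```python
# Magnetic rigidity for a singly charged particle: p [GeV/c] = 0.299792458 * B [T] * rho [m]
GEV_PER_TESLA_METRE = 0.299792458


def beam_momentum_tev(b_tesla: float, bending_radius_m: float) -> float:
    """Momentum in TeV/c of a unit-charge particle held on radius rho by field B."""
    return GEV_PER_TESLA_METRE * b_tesla * bending_radius_m / 1000.0


def collision_energy_tev(b_tesla: float, bending_radius_m: float) -> float:
    """Centre-of-mass energy for two identical ultra-relativistic beams colliding head-on.

    For TeV protons E ~ pc, so sqrt(s) ~ 2 * p * c.
    """
    return 2.0 * beam_momentum_tev(b_tesla, bending_radius_m)


# LHC-like check: 8.1 T and an assumed dipole bending radius of ~2804 m.
lhc = collision_energy_tev(8.1, 2804.0)
print(f"{lhc:.1f} TeV")  # 13.6 TeV

# At fixed radius, the energy reach is linear in the dipole field.
print(f"{collision_energy_tev(16.2, 2804.0) / lhc:.1f}x")  # 2.0x
```

Doubling the field at fixed radius doubles the collision energy, which is the sense in which each extra tesla pushes the frontier.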

What makes these magnets unique is their compactness. Superconducting coils can carry a current density of order 500 A/mm², a factor of 100 higher than copper can tolerate even with active cooling. A magnet based on superconductivity can therefore have coils that are narrower and lighter.
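
A rough way to see how current density sets coil size is the standard formula for an iron-free sector coil with uniform current density J, width w and angular extent α about the midplane, whose central dipole field is B = (2/π) μ0 J w sin α. The Python sketch below inverts it for the coil width. The 60° sector, the 8 tesla target and the 5 A/mm² copper figure (500 A/mm² divided by the factor of 100 quoted above) are illustrative assumptions, and the iron yoke and the conductor's limits are ignored.

```python
import math

MU0 = 4e-7 * math.pi  # vacuum permeability, T m/A


def sector_coil_width_m(b_tesla, j_a_per_mm2, sector_angle_deg=60.0):
    """Coil width giving central field B in an iron-free, uniform-J sector-coil dipole.

    Inverts B = (2 / pi) * mu0 * J * w * sin(alpha).
    """
    j = j_a_per_mm2 * 1e6  # A/mm^2 -> A/m^2
    return math.pi * b_tesla / (2.0 * MU0 * j * math.sin(math.radians(sector_angle_deg)))


w_sc = sector_coil_width_m(8.0, 500.0)  # superconducting coil
w_cu = sector_coil_width_m(8.0, 5.0)    # actively cooled copper, 100x lower J
print(f"{w_sc * 1e3:.0f} mm vs {w_cu:.1f} m")  # 23 mm vs 2.3 m
```

Because the width is inversely proportional to J, the factor of 100 in current density translates directly into a coil 100 times thinner for the same field.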

No application of superconductivity pushes this limit harder than an accelerator magnet. Larger coils mean larger magnets and an unaffordably large tunnel to accommodate them. Accelerator magnets must therefore be highly optimised in space and cost – the capsule hotels of superconductivity – and this extreme optimisation creates opportunities for spinoff applications, from lightweight motors for electric aircraft to power transmission beneath the pavement of a crowded metropolis. Superconducting accelerator devices have already paved the way for societal applications in medical imaging and advanced accelerators for cancer therapy, and the field continues to benefit from strong research synergies with fusion tokamaks, though their toroidal coils don’t need to push the limits of current densities in the same way.

Superconductors also save energy. At the LHC, more than a thousand niobium–titanium alloy (Nb–Ti) superconducting dipoles are powered by only 40 MW. This is much less than what is consumed by the LHC’s injectors.

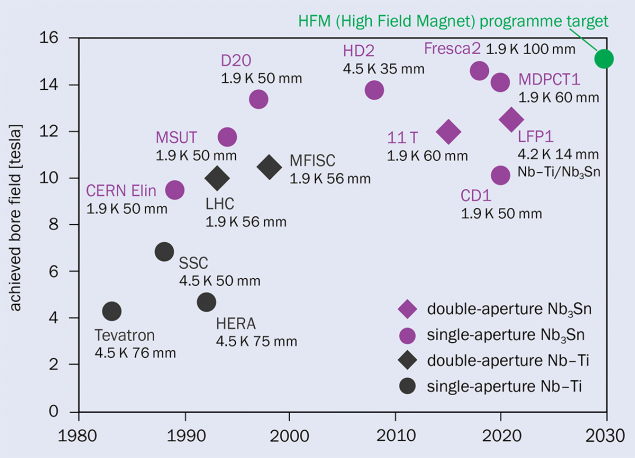

As dipoles based on Nb–Ti superconductors are limited to a maximum achievable field of nearly 10 tesla, corresponding to an operational field of about 8 tesla with acceptable margins, accelerator physicists and engineers are exploring better superconductors to roughly double the field. The options include Nb3Sn, which will soon be used in an accelerator for the first time at the HL-LHC, and “high-temperature” superconductors that promise much higher performance and a simpler accelerator infrastructure. But dipoles are much more difficult to design than solenoids: though 30 tesla solenoid magnets are already available on the market, no one has yet succeeded in building a 20 tesla dipole magnet.

Shear complexity

An accelerator dipole poses several challenges that a solenoid does not. A solenoid’s current loops generate an axial magnetic field, whereas a dipole must use vertically separated coils to generate a vertical magnetic field. For the same total coil thickness and current density, a solenoid provides twice the field strength of a dipole. And the field distribution and the forces exerted on the coils are much more difficult to control: in a solenoid, electromagnetic forces are perpendicular to the conductor, but in a dipole they push the coil towards the midplane and outwards, with a two-dimensional distribution that includes shear stresses.
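
The factor of two can be checked with idealised, iron-free formulas: an infinitely long solenoid with winding thickness w gives B = μ0 J w, while an ideal cos θ dipole shell whose current density peaks at J gives B = μ0 J w/2. The Python sketch below also evaluates a uniform-J 60° sector coil as a more practical layout. The current density and coil width are hypothetical, and the sketch ignores the conductor's critical surface, which in a real magnet caps the attainable field.

```python
import math

MU0 = 4e-7 * math.pi  # vacuum permeability, T m/A


def long_solenoid_field(j, w):
    """Infinitely long solenoid: sheet current K = J * w gives B = mu0 * K inside."""
    return MU0 * j * w


def cos_theta_dipole_field(j_peak, w):
    """Ideal cos-theta shell with J(theta) = J_peak * cos(theta): B = mu0 * J_peak * w / 2."""
    return MU0 * j_peak * w / 2.0


def sector_dipole_field(j, w, alpha_deg=60.0):
    """Uniform-J sector coil, each sector spanning 0..alpha from the midplane: B = (2/pi) mu0 J w sin(alpha)."""
    return 2.0 / math.pi * MU0 * j * w * math.sin(math.radians(alpha_deg))


j, w = 500e6, 0.03  # 500 A/mm^2 over a 30 mm winding (illustrative values)
for name, field in [
    ("long solenoid", long_solenoid_field(j, w)),
    ("ideal cos-theta dipole", cos_theta_dipole_field(j, w)),
    ("60-degree sector dipole", sector_dipole_field(j, w)),
]:
    print(f"{name:>24}: {field:5.1f} T")
```

The solenoid-to-cos θ ratio is exactly 2 for any J and w; the sector coil does slightly better than the ideal shell only because its uniform current density fills more of the winding.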

The engineering challenge is compounded by the need for dipoles to perform precisely during the ramp: after injection, the particles gain energy with every turn, and ever stronger magnetic fields are needed to bend them. To ensure that accelerator physicists can make tightly focused beams collide with high luminosity inside the experiments, the field must be uniform to better than one part in 10⁴ across two thirds of a dipole’s aperture while it increases by up to a factor of 15. These challenges are absent in both medical-imaging magnets and fusion toroidal coils, which operate at constant current, although the latter are subject to rapidly varying external magnetic fields.
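
A common way to express this requirement, assumed here rather than taken from the article, is the multipole expansion B_y + iB_x = 10^-4 B_1 Σ_n (b_n + i a_n) (z/R_ref)^(n-1), with z = x + iy, b_1 = 10^4, and the harmonics b_n, a_n given in "units" of 10^-4 at a reference radius R_ref. The Python sketch below sums a set of hypothetical harmonics and checks the worst relative error on a circle at two thirds of a hypothetical 25 mm aperture radius. Because the error is an analytic function of z, its maximum over the disc lies on that boundary circle.

```python
import cmath
import math


def relative_field_error(harmonics, z, r_ref):
    """Deviation of B_y + iB_x from the main dipole field B1, normalised to B1.

    harmonics maps n >= 2 to (b_n, a_n) in units of 1e-4 at radius r_ref;
    z = x + iy is the complex transverse position.
    """
    total = 0j
    for n, (b_n, a_n) in harmonics.items():
        total += (b_n + 1j * a_n) * (z / r_ref) ** (n - 1)
    return 1e-4 * total


def worst_error_on_circle(harmonics, r_ref, n_points=360):
    """Largest |dB/B1| sampled on the circle of radius r_ref."""
    return max(
        abs(relative_field_error(harmonics, cmath.rect(r_ref, 2 * math.pi * k / n_points), r_ref))
        for k in range(n_points)
    )


aperture_radius = 0.025              # m, hypothetical
r_ref = 2.0 / 3.0 * aperture_radius  # "two thirds of the aperture"
harmonics = {2: (0.0, 0.1), 3: (0.4, 0.0), 5: (-0.2, 0.0)}  # hypothetical units
err = worst_error_on_circle(harmonics, r_ref)
print(f"worst |dB/B| = {err:.1e} ->", "within 1e-4" if err < 1e-4 else "out of tolerance")
```

With these made-up values the three harmonics add in phase at the top of the circle, giving a worst case of 0.7 units, inside the one-part-in-10⁴ budget.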

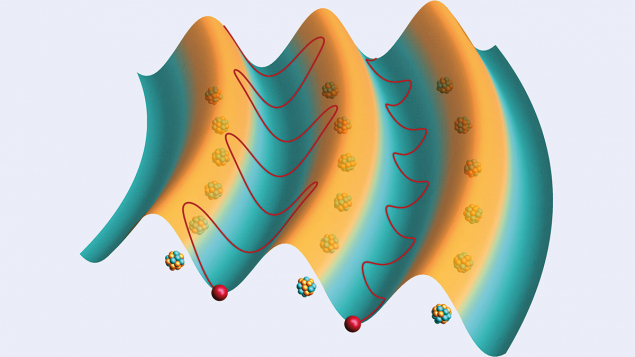

In the context of the 2026 update to the European Strategy for Particle Physics (ESPP), advanced high-field dipole magnets would be needed for the hadron-collider phase of the Future Circular Collider (FCC-hh) and for the proposed muon collider. Because its beams are exceptionally large and unstable, a muon collider would also require a kilometre-long channel of superconducting solenoids with alternating gradient, and a final superconducting cooling solenoid of roughly 40 tesla before the collider ring. These requirements complement those of the FCC-hh, and the community is devoting significant research and development effort to them.

The targets initially set for the FCC-hh in 2014 were based on round numbers: a 100 km tunnel and a centre-of-mass energy of 100 TeV. This required 16 tesla dipoles, one or two tesla above what can be achieved with adequate margins and cost using present technology. After a decade of studies, the tunnel circumference was reduced to 91 km to fit geological constraints, and the field was brought down to 14 tesla, allowing a centre-of-mass energy of 85 TeV after some optimisation of the lattice. This 15% reduction in centre-of-mass energy has had a major effect on the energy consumption of the collider, as the synchrotron radiation, which scales roughly as the fourth power of the beam energy, was reduced by 50%. A similar tuning occurred for the LHC, which was initially imagined at 16 TeV with 10 tesla magnets rather than today’s 13.6 TeV and 8.1 tesla.
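
The size of this effect follows from a standard scaling, assumed here rather than quoted from the article: at equal beam current, the total synchrotron-radiation power of a ring scales as E⁴/ρ. The Python sketch below compares 85 TeV with 100 TeV, first at fixed bending radius and then letting ρ follow the magnetic rigidity (ρ ∝ E/B) with 14 tesla instead of 16 tesla dipoles. The equal-current assumption is a simplification, not a statement of FCC-hh beam parameters.

```python
def sr_power_ratio(e_new, e_old, rho_ratio=1.0):
    """Ratio of total synchrotron-radiation power at equal beam current.

    Uses P ~ E**4 / rho, where rho_ratio = rho_new / rho_old.
    """
    return (e_new / e_old) ** 4 / rho_ratio


# Same bending radius: only the energy changes (85 TeV vs 100 TeV).
print(f"{sr_power_ratio(85.0, 100.0):.2f}")  # 0.52

# Let rho follow the magnetic rigidity, rho ~ E / B, with 14 T instead of 16 T dipoles.
rho_ratio = (85.0 / 14.0) / (100.0 / 16.0)
print(f"{sr_power_ratio(85.0, 100.0, rho_ratio):.2f}")  # 0.54
```

Either way the radiated power roughly halves, consistent with the 50% quoted above.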

The baseline design for the FCC-hh dipole magnets is Nb3Sn technology operated at 1.9 K, though the ESPP documents also note three other possibilities: hybrid magnets that substitute Nb–Ti for Nb3Sn in the lower-field regions; operation at 4.5 K; and a high-temperature-superconductor option operating between 4.5 and 20 K with magnetic fields in the range 14 to 20 tesla.

The Nb3Sn path

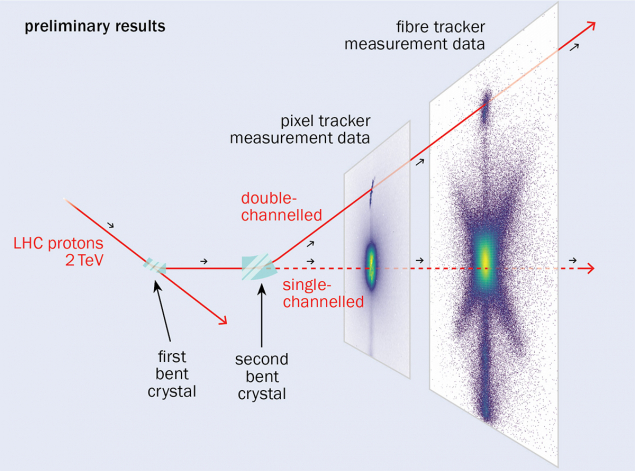

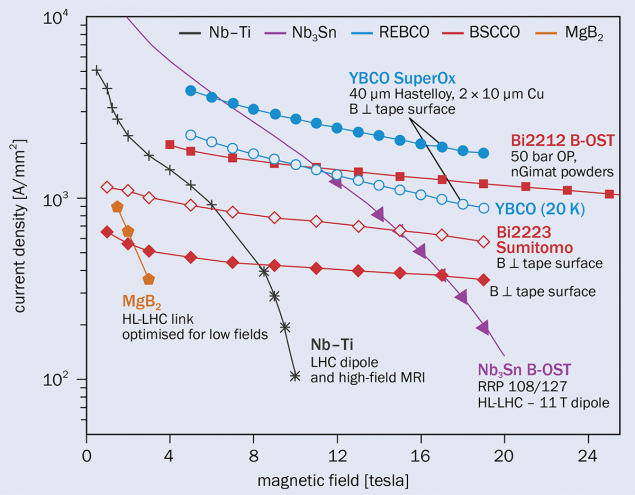

Nb3Sn was discovered a few years before Nb–Ti and has the advantage of providing current densities in excess of 500 A/mm² up to 16 T (see “Superconductors for high-field accelerator magnets” figure). After 35 years of research, fields have now reached 14.5 tesla, close to the 15–16 tesla target needed for magnets to operate at 14 tesla in the FCC-hh with adequate margins (see “Niobium dipoles” figure). The main goal today is to produce a double-aperture short-model Nb3Sn magnet with all the features specified in the FCC-hh design. This should be achieved by 2030, after which the design will be scaled up in length.
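
One simple way to read these numbers, assuming the margin is expressed as the fraction by which the operating field sits below the maximum achievable field, is sketched below in Python. The field values are those quoted in this article; the 20% margin in the last line is simply the Nb–Ti ratio of 8 to 10 tesla applied to a 14 tesla magnet, for comparison.

```python
def margin(b_op, b_max):
    """Fraction by which the operating field sits below the maximum achievable field."""
    return 1.0 - b_op / b_max


def required_max_field(b_op, target_margin):
    """Maximum field a magnet must reach to operate at b_op with the given margin."""
    return b_op / (1.0 - target_margin)


cases = {
    "Nb-Ti: 8 T operating, ~10 T maximum": (8.0, 10.0),
    "Nb3Sn: 14 T operating, 16 T maximum": (14.0, 16.0),
    "Nb3Sn: 14 T operating, 15 T maximum": (14.0, 15.0),
    "Nb3Sn: 14 T operating, 14.5 T reached": (14.0, 14.5),
}
for label, (b_op, b_max) in cases.items():
    print(f"{label:40s} margin = {margin(b_op, b_max):.1%}")

# An LHC-like 20% margin at 14 T would call for a magnet reaching about 17.5 T.
print(f"{required_max_field(14.0, 0.20):.1f} T")
```

On this reading, today's 14.5 tesla record would leave only about 3% margin at 14 tesla, which is why the target sits at 15–16 tesla.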

A key challenge is to reduce the quantity of Nb3Sn, thereby lowering both the cost and the hysteresis losses during field ramping. As the magnetic field changes, currents are induced within the superconducting filaments, leading to energy dissipation that must be carefully controlled. Minimising these losses is one reason for the complex, multi-filamentary architecture of superconducting wires. Nb–Ti wires have smaller filaments, which significantly reduces the losses, and Nb–Ti costs five to 10 times less than Nb3Sn.
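
The dependence on filament size can be illustrated with the critical-state (Bean) model, a textbook approximation assumed here: a fully penetrated round filament of diameter d with critical current density Jc has magnetisation M = (2/3π) Jc d, and each field ramp ΔB dissipates roughly M ΔB per unit volume. The Python sketch below keeps Jc fixed to isolate the effect of d. The critical current density, field swing and filament diameters are hypothetical, and real conductors have a strongly field-dependent Jc.

```python
import math


def hysteresis_loss_per_ramp(jc, filament_diameter, delta_b):
    """Energy dissipated per unit volume of superconductor (J/m^3) in one field ramp.

    Critical-state (Bean) model, round filaments fully penetrated:
    magnetisation M = (2 / (3 pi)) * Jc * d  [A/m], loss per ramp ~ M * delta_B.
    """
    magnetisation = 2.0 / (3.0 * math.pi) * jc * filament_diameter
    return magnetisation * delta_b


JC = 2e9        # A/m^2, hypothetical critical current density in the filaments
DELTA_B = 13.0  # T, hypothetical field swing during a ramp

losses = {d_um: hysteresis_loss_per_ramp(JC, d_um * 1e-6, DELTA_B) for d_um in (6, 20, 50)}
for d_um, q in losses.items():
    print(f"d = {d_um:2d} um -> {q / 1e3:6.1f} kJ/m^3 per ramp")
```

In this model the loss is linear in filament diameter, so finer filaments cut the dissipation proportionally.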

A second engineering challenge is to achieve a mechanical structure capable of keeping the coil in compression during powering but not overstressing it. The stress limits of Nb3Sn are of the order of 200 MPa, and the required precompression for a 14 tesla dipole is about 150 MPa.
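
A convenient scale for these stresses is the magnetic pressure B²/2μ0. It is only an order-of-magnitude guide, assumed here rather than taken from the article: the actual coil stress depends on the winding geometry and accumulates towards the midplane, but for a given design it grows roughly as B². The Python sketch below evaluates it at a few fields and converts the "one tonne per square centimetre" of the opening paragraph into megapascals.

```python
import math

MU0 = 4e-7 * math.pi  # vacuum permeability, T m/A


def magnetic_pressure_mpa(b_tesla):
    """Magnetic pressure B^2 / (2 mu0), converted from Pa to MPa."""
    return b_tesla ** 2 / (2.0 * MU0) / 1e6


# One tonne-force per square centimetre, using standard gravity g = 9.80665 m/s^2.
ONE_TONNE_PER_CM2_MPA = 1000.0 * 9.80665 / 1e-4 / 1e6

for b in (8.1, 14.0, 16.0):
    print(f"{b:4.1f} T -> {magnetic_pressure_mpa(b):5.1f} MPa")
print(f"1 t/cm^2 -> {ONE_TONNE_PER_CM2_MPA:.0f} MPa")
print(f"16 T vs 14 T: x{magnetic_pressure_mpa(16.0) / magnetic_pressure_mpa(14.0):.2f}")
```

Going from 14 to 16 tesla raises this stress scale by about 30%, which is significant when the required precompression already sits close to the material's limit.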

Another challenge of the low-temperature path would be logistical: the production of roughly 5000 tonnes of Nb3Sn. This corresponds to a 1 kA cable stretching from the Earth to the Moon, at a cost of several billion dollars. These numbers are an order of magnitude larger than what was needed for the Nb–Ti coils of the LHC.
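
The Earth-to-Moon comparison can be checked with simple arithmetic. Taking the roughly 5000 tonnes as the mass of the cable and spreading it over the mean distance from the Earth to the Moon (about 384 400 km) gives a mass per metre; the Python sketch below then converts it into an equivalent round-wire diameter, assuming an average composite density of 8800 kg/m³, a hypothetical value rather than a figure from the article.

```python
import math

EARTH_MOON_DISTANCE_M = 384_400e3  # mean Earth-Moon distance, m
CONDUCTOR_MASS_KG = 5000e3         # ~5000 tonnes, as quoted in the text
ASSUMED_DENSITY_KG_M3 = 8800.0     # hypothetical average density of a Cu/Nb3Sn wire

mass_per_metre = CONDUCTOR_MASS_KG / EARTH_MOON_DISTANCE_M  # kg/m
cross_section_m2 = mass_per_metre / ASSUMED_DENSITY_KG_M3   # m^2
equivalent_diameter_mm = math.sqrt(4.0 * cross_section_m2 / math.pi) * 1e3

print(f"{mass_per_metre * 1e3:.1f} g/m, i.e. a round wire about {equivalent_diameter_mm:.1f} mm across")
```

The result, about 13 g/m or a wire of roughly 1.4 mm, is of the same order as the millimetre-scale wire described at the start of the article.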

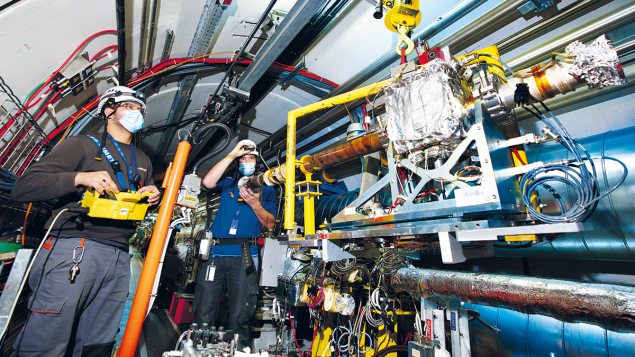

Despite these challenges, Nb3Sn technology is now well established for small series, and will soon play a key role at the High-Luminosity LHC – the technology’s first use in a working accelerator, though for focusing beams rather than bending them (see “Nb3Sn quadrupoles” figure). But newer superconductors may well prove competitive.

The high-temperature path

In 1986, Johannes Georg Bednorz and Karl Alexander Müller announced the discovery of superconductivity above 35 K, something not foreseen by theory, and well above the boiling point of liquid helium. “High-temperature” superconductors (HTS) not only remain superconducting at high temperatures, in many cases above the boiling point of liquid nitrogen (though at 77 K HTS performance is not yet adequate for our needs), but also at high fields. HTS solenoids have been constructed with fields up to 40 tesla, and though the problem of degradation is not yet totally solved, progress has been outstanding.

Three families of HTS conductors are currently available or emerging on the market: rare-earth barium copper oxides (REBCO), bismuth strontium calcium copper oxides (BSCCO) and iron-based superconductors (IBS).

REBCO is of strong interest in the world of fusion. Billions of dollars of investment have reduced its cost by more than an order of magnitude over the past decades. REBCO comes in tapes (see “Frontier superconductors” figure). A 12 mm-wide tape has a thickness of 0.1 mm and can carry 1500 A at 4.5 K, or about half that at 20 K. Coils with peak fields of 20 tesla have been built and tested for fusion applications, and private investors plan to build reactors that are much more compact than ITER, which is based on Nb3Sn technology.
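
Dividing the quoted current by the full tape cross-section gives the engineering current density, a figure that can be compared with the 500 A/mm² mentioned earlier for superconducting coils. The Python sketch below does this for the 12 mm × 0.1 mm tape. The article does not state the background field at which the 1500 A applies, and insulation and the other components of a real cable would lower the current density at coil level.

```python
def engineering_current_density(current_a, width_mm, thickness_mm):
    """Current divided by the full conductor cross-section, in A/mm^2."""
    return current_a / (width_mm * thickness_mm)


TAPE_WIDTH_MM = 12.0
TAPE_THICKNESS_MM = 0.1

je_4p5k = engineering_current_density(1500.0, TAPE_WIDTH_MM, TAPE_THICKNESS_MM)
je_20k = engineering_current_density(1500.0 / 2.0, TAPE_WIDTH_MM, TAPE_THICKNESS_MM)  # "about half"

print(f"4.5 K: {je_4p5k:.0f} A/mm^2")
print(f"20 K:  {je_20k:.0f} A/mm^2")
```

At about 1250 A/mm² at 4.5 K and 625 A/mm² at 20 K, the tape itself leaves headroom above the 500 A/mm² benchmark, before cabling and insulation are taken into account.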

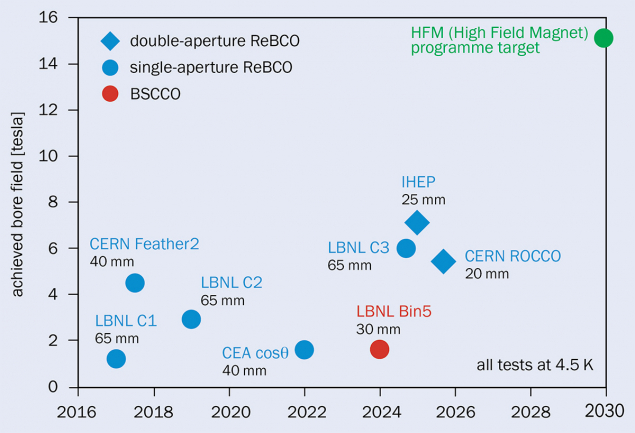

Manufacturing REBCO coils is greatly simplified compared to Nb3Sn, as the tape needs no heat treatment; but the technology used to wind the tapes is not easy to adapt to accelerator dipole magnets, which are radically different from the toroidal coils designed for tokamaks. The challenge here is not to develop a conductor for accelerator magnets, but to adapt our magnet designs to this amazing tape. There is still a long way to go to reach the 15–16 tesla target, but the potential is huge, with progress being made in Europe, the US and China (see “HTS dipoles” figure).

And what of the other HTS conductors? BSCCO has the great advantage of being available as round wires, but it must be heat treated at 800 °C and does not profit from synergies with fusion. At present, this path is only being pursued in the US, with fields of just 1.8 T achieved so far. IBS is being actively developed in China and Europe, but its current density has not yet matched that of REBCO, and the best results have been obtained with tapes rather than wires.

HTS would allow operation at 20 K, simplifying the cooling scheme and possibly reducing the energy consumption of the collider, though at 85 TeV half of the heat load is due to synchrotron radiation, which does not depend on the operating temperature of the magnets. Moreover, REBCO tape has a single filament as wide as the tape itself, so the saving from the higher operating temperature could be offset by larger hysteresis losses. Estimating the energy balance is far from trivial: do not draw easy conclusions!
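
Part of the trade-off can be quantified with the Carnot limit on refrigeration: removing 1 W at a cold temperature T_c and rejecting it at room temperature T_w costs at least (T_w - T_c)/T_c watts of work. The Python sketch below assumes T_w = 300 K. Real cryoplants need several times more than this ideal minimum, and the sketch deliberately leaves out the other side of the balance, namely how the heat loads themselves change with conductor and temperature.

```python
def carnot_work_per_watt(t_cold_k, t_warm_k=300.0):
    """Minimum work (W) to extract 1 W of heat at t_cold_k and reject it at t_warm_k."""
    return (t_warm_k - t_cold_k) / t_cold_k


for t_cold in (1.9, 4.5, 20.0):
    print(f"{t_cold:4.1f} K: at least {carnot_work_per_watt(t_cold):5.1f} W per W removed")

print(f"1.9 K vs 20 K: x{carnot_work_per_watt(1.9) / carnot_work_per_watt(20.0):.1f}")
```

In this ideal limit, 1 W removed at 20 K costs about 14 W of work against about 157 W at 1.9 K, a factor of roughly 11; whether that advantage survives larger hysteresis losses and the synchrotron-radiation load is exactly the non-trivial balance described above.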

Optimal solution

Addressing these challenges is the work of the High Field Magnet (HFM) programme, an international collaboration of 15 institutes steered by CERN that was founded in 2021. HFM is exploring a range of designs, from the most classical to the more exotic, to find the optimal solution, and novel ideas should be explored in parallel with the most conservative paths. Though there are major challenges ahead, solving them promises societal benefits via a number of diverse spinoff applications.

High-field magnets remain one of the hardest problems in applied superconductivity. The next decade will be decisive for understanding the feasibility and cost of the FCC-hh.