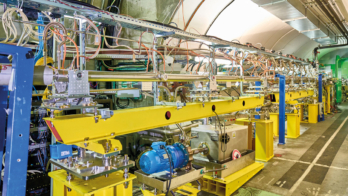

Image credit: M Brice.

LHC proton running for 2016 reached a conclusion on 26 October, after seven months of colliding protons at an energy of 13 TeV. The tally for the year is truly impressive. I could mention the fact that the machine’s design luminosity of 10³⁴ cm⁻² s⁻¹ was regularly achieved and exceeded by 30 to 40%. Or I could say that with an integrated luminosity of 40 fb⁻¹ delivered in 2016, we comfortably exceeded our year target of 25 fb⁻¹ – allowing the LHC experiments to accumulate sizable data samples in time for the biennial ICHEP conference in August.

But what impresses me the most, and what really sets a marker for the future, is the availability of the machine. For 60% of its 2016 operational time, the LHC was running with stable beams delivering high-quality data to the experiments. This is unprecedented. Typical availability figures for big energy-frontier machines are around 50%, and that is the target we set ourselves for the LHC this year. Given the scale and complexity of the LHC, even that seemed ambitious. To put it in perspective, CERN’s previous and much simpler flagship facility, the Large Electron Positron (LEP) collider, achieved a figure of 30% over its operational lifetime from 1989 to 2000.

After hitting its design luminosity on 26 June, the LHC’s peak luminosity was further increased by using smaller beams from the injectors and reducing the angle at which the beams cross inside the ATLAS and CMS experiments. The resulting luminosity topped out at around 1.4 × 10³⁴ cm⁻² s⁻¹, 40% above design. This year’s proton operation also included successful forward-physics runs for the TOTEM/CT-PPS, ALFA and AFP experiments.

The LHC is no ordinary machine. The world’s largest, most complex and highest-energy collider is also the world’s largest cryogenic facility. The difficulties we had when commissioning the machine in 2008 are well documented, and there is more to do: we are still not running at the design energy of 14 TeV, for example. But this does not detract from the fact that the 2016 run has shown what a fundamentally good design the LHC is, what a fantastic team it has running it, and that clearly it is possible to run a large-scale cryogenic facility with metronomic reliability.

This augurs well for the future of the LHC and its high-luminosity upgrade, the HL-LHC, which will take us well into the 2030s. But it is not only a good sign for particle physics. Other upcoming cryogenic facilities such as the ITER fusion experiment under construction in France can also take heart from the LHC’s performance, and who knows where else this technology might take us? If it is possible to run a 27 km-circumference superconducting particle accelerator with high reliability, then a superconducting electrical-power-distribution network, for example, does not seem so unrealistic. With developments in high-temperature superconductors proceeding apace, that possibility looks tantalisingly close.

With the way that the LHC has performed this year, it would be easy to be complacent, but the 2016 run has not been without difficulties. From the unfortunate beech marten that developed a short-lived taste for the connections of an outdoor high-voltage transformer in May to rather more challenging technical issues, the LHC team has had numerous problems to solve, and the upcoming end-of-year technical stop will be a busy one. With a machine as complex as the LHC, its entire operational lifetime is a learning curve for accelerator physicists.

Which brings me back to the question of the LHC’s design energy. With proton running finished for another year, the LHC has now moved into a period of heavy-ion physics. When that is over, we will conclude the year with two weeks dedicated to re-training the magnets in two of the machine’s eight sectors, with a view to 14 TeV running. News from this work will provide valuable input to the LHC performance workshop in January, which will set the scene for the coming years at the energy frontier.